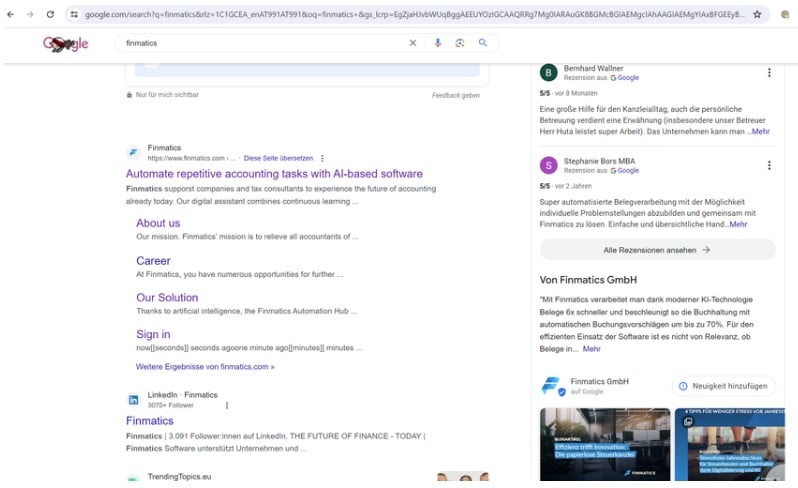

A technical SEO audit will reveal hidden roadblocks that might be hurting your rankings. Many websites neglect to even check for technical SEO issues, with only 54.6% of them meeting Core Web Vitals based on Google’s guidelines.

In this blog, Seth Kluver, AVP SEO/GEO, will describe what technical SEO is and provide you with a list of factors that could be negatively impacting your rankings.

What You’ll Learn

- What is Technical SEO?

- How Does Technical SEO Work?

- 40 Common Technical SEO Mistakes

- Monitoring and Maintaining Technical SEO

- FAQs About Technical SEO

My Expert Opinion on Technical SEO

There’s a lot that goes into SEO, but marketers often overlook the technical side of things. There are many common technical SEO issues that can hurt your ability to rank, making a regular technical SEO audit crucial in identifying any shortcomings that might be impacting your visibility online.

At the same time, there are a lot of technical SEO elements that can factor into your rankings, which is why it’s important to develop a complete technical SEO audit checklist to help you identify every potential issue and determine how to address it.

A technical website audit will cover everything from slow page loading times and broken links to a lack of mobile-responsiveness and overall site structure issues, all of which could hold you back if left unaddressed.

Now, let’s look deeper into how technical SEO site audits work, including the key technical SEO factors to measure and optimize for better performance.

What is Technical SEO?

Technical SEO is how you configure the parts of your website that let search engines crawl and index it. It covers all the nitty-gritty, behind-the-scenes aspects, such as crawling, indexing, site structure, migrations, page speeds, Core Web Vitals, and more.

Why is Technical SEO Important?

Technical SEO can be easy to overlook, especially if you aren’t well-versed in all of its aspects, but if you’re at all concerned with how your site is ranking (and if you’re here, you probably are), it’s something you simply can’t ignore.

What you do or don’t do when it comes to technical SEO plays a pivotal role in shaping your website’s online visibility and search engine rankings. It can also severely affect user experience. Oftentimes, improving user experience lies in improving many technical SEO factors.

Keep in mind that poor technical SEO elements can nullify even great, well-optimized content if it doesn’t have the right structure or fails to create the ideal user experience.

Key Elements of Technical SEO

Search engines need various components to crawl, index, and rank pages. Some of these technical SEO factors include:

- A logical site structure that’s easy to crawl, with an XML sitemap and robots.txt file

- Secure connections using HTTPS instead of unsecured HTTP

- Mobile-friendly designs that help ensure mobile-first indexing

- User-friendly design that leads to a good customer experience

- Structured data, including schema.org markup, for more context regarding a site’s content

- Proper handling of canonical tags to prevent confusion with duplicate content

- Resolved broken 404 pages with effective 301 redirects where needed

- Reliable rendering of HTML code and JavaScript

How Does Technical SEO Work?

Ever wondered how search engines like Google understand and rank your website? Mastering technical SEO is key to unlocking online success.

Let’s break down how to conduct a technical SEO analysis or audit.

Conducting a Technical SEO Audit

First, let’s walk through the basic steps of a technical SEO audit:

1. Plan

To best determine how to do a technical site audit, you must effectively plan the audit.

To start with, you’ll want to define your objectives and whether you want to conduct a complete or partial technical SEO site audit.

Planning will also involve setting up the tools you need for the audit, including Google Search Console and SEO crawlers.

2. Scan

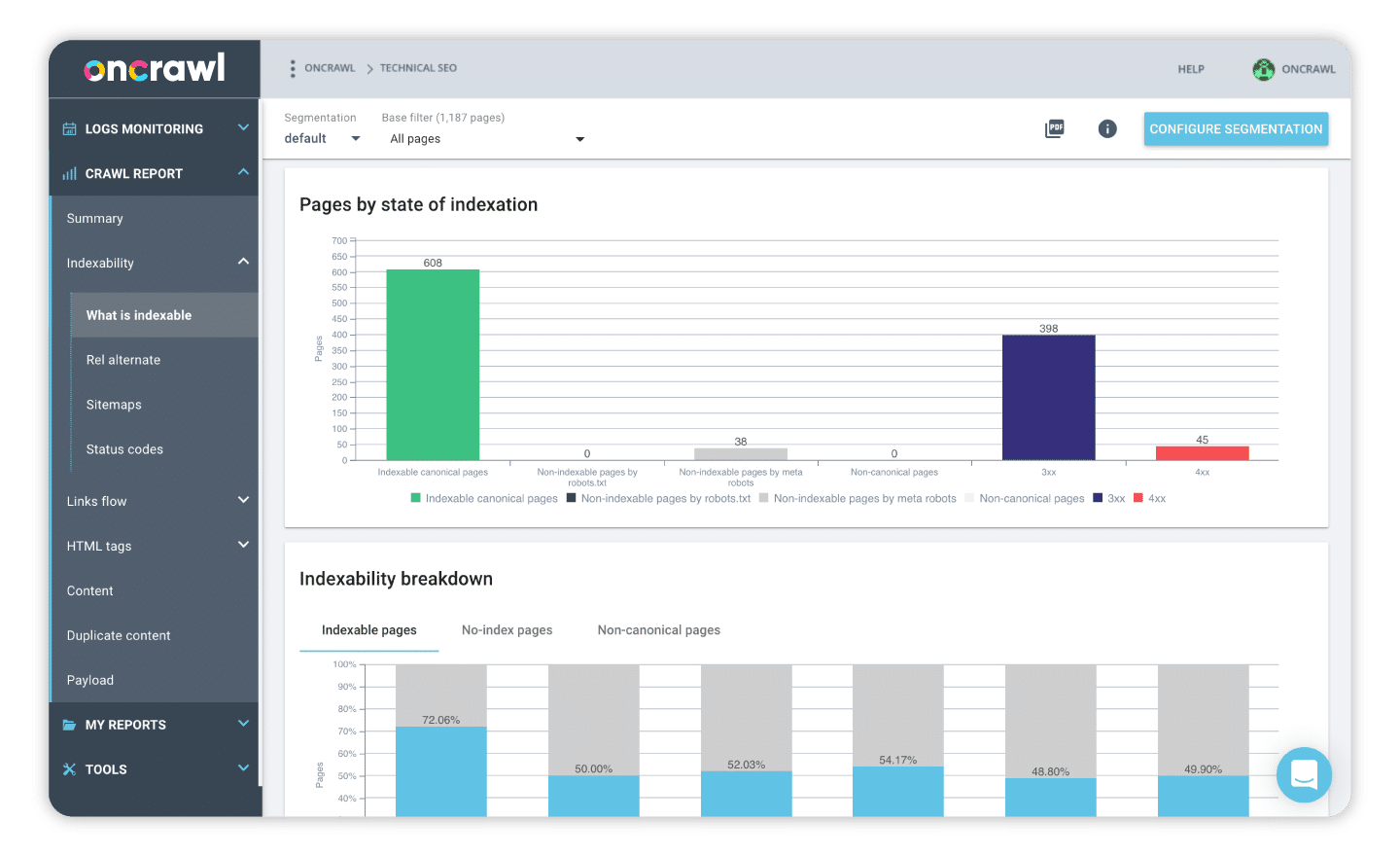

Next, using your crawling tools, you can scan your website to identify any technical issues.

Before you scan, make sure your robots.txt file is valid and does not block any of the pages you want crawled.

3. Analyze

Once you’ve crawled your site, it’s time to analyze the data and look for problems with specific technical SEO elements.

Here are some SEO audit factors and their definitions to give you an idea of what to look for:

- Crawlability: Indicates search engine bots’ ability to crawl a website and all pages you want to index and rank.

- Indexability: Determines whether search engine bots can index pages in search results, preparing them for ranking.

- Core Web Vitals: Measures certain metrics that gauge a site’s page loading speed and its ability to properly load all page elements to create a cohesive and reliable user experience.

- Mobile-first Optimization: Confirms whether a website is designed for proper viewing on mobile devices, including smartphones and tablets, along with mobile-first rankings.

- Sitemap/Robots.txt: An XML sitemap can make it easier for bots to discover the pages on a website, while robots.txt is a file that tells bots which pages they may and may not crawl.

- Structured Data: Schema and other structured data tell Google what a page is specifically about, providing more context for more precise ranking.

4. Fix

After conducting your analysis, you can determine the specific fixes needed for common technical SEO issues.

For example, you might implement the following fixes for the above technical SEO factors:

- Making your site more crawlable with a proper XML sitemap and robots.txt

- Finding any pages with 4xx or 5xx errors and setting up the right redirects (301 for permanent moves, 302 for temporary ones)

- Optimizing your website for better Core Web Vitals metrics

- Checking for responsiveness on mobile devices for mobile-first optimization

- Incorporating internal links to ensure there are no orphaned pages

- Eliminating duplicate content and prioritizing pages with canonical tags

- Using schema markup and Google’s Rich Results Test to validate all structured data

5. Monitor

With your fixes implemented, continually monitor your website’s performance to keep a lookout for any other issues.

Regular technical SEO auditing on a website will be able to help you identify and address any issues before they compromise your site’s rankings.

There are a lot of technical SEO elements to be aware of, but luckily, there are also a lot of tools and resources that make the job easier!

Tools and Resources for Your Technical SEO Analysis

The first thing you need for your analysis is access to the Google Search Console.

This free tool will give you insights into how Google sees and indexes your pages, how to fix issues on your site, and how you optimize your content. You can also monitor your AMP pages and your Core Web Vitals.

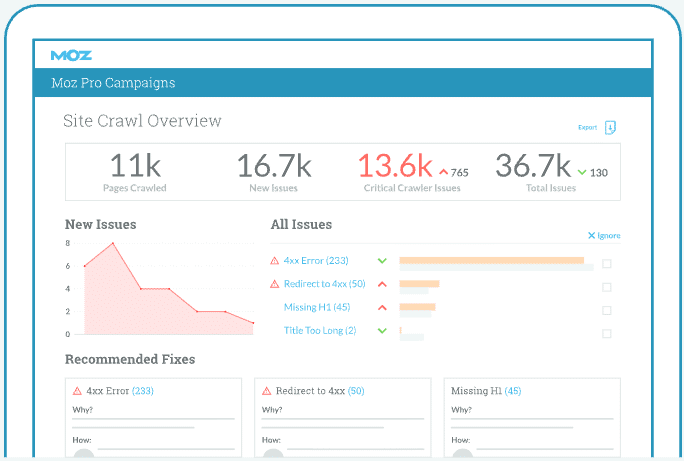

You’ll also need access to a web crawler tool. Some of my favorites include Semrush’s Site Audit, Moz Pro, or Screaming Frog. There are pros and cons to each tool, so don’t hesitate to play around with a few to see which one you like most.

Process of Indexing and Crawling the Web

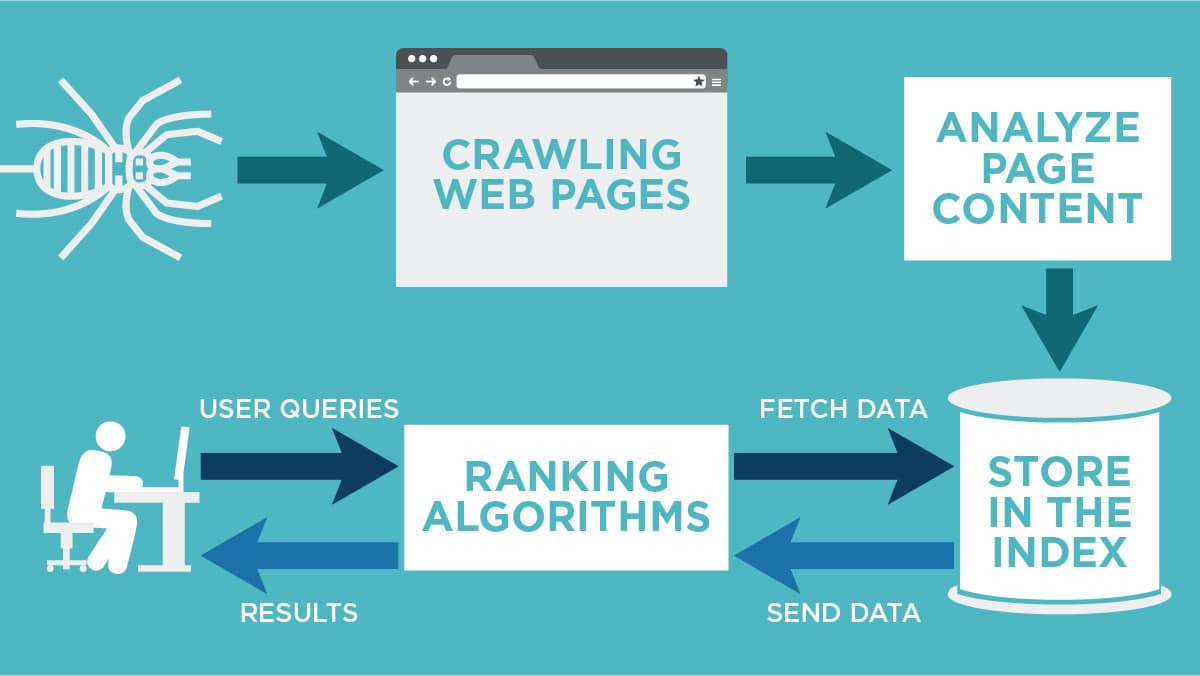

Indexing

Imagine indexing as the library card catalog for search engines. It’s essentially a massive database where search engines store information about web pages they’ve encountered. This determines whether your web pages even show up in search results.

Here’s how it works: search engines send out special bots (like automated researchers) that crawl your site (which we’ll cover next). These bots gather information about your content, categorize it by topic, and add it to the search engine’s index. This allows search engines to find the right pages to display when users search for relevant terms.

Crawling

Crawling is how search engines find and explore all the pages on your website. Back to our library analogy: Think of it as the bots following every hallway and room in a library. These bots navigate your site by following the links between your pages.

The more efficiently your site is structured, the easier it is for search engine bots to crawl everything. This is why technical SEO practices like clear URLs and well-organized internal linking are important.

Google typically crawls websites based on various factors, including their size and how often content is updated. While the exact frequency can vary, it’s important to optimize your site for crawling to ensure all of your valuable content gets noticed.

Factors Influencing Search Behavior

Multiple core search engine ranking signals will be affected by technical SEO elements. The following are some of those key SEO audit factors that can influence search behavior:

Accessibility

Tracking accessibility can help ensure everyone can use your website with ease, including people with impaired vision or other disabilities.

Here are some accessibility metrics to track in your audit:

| Metric | Impact |
| --- | --- |
| Accessibility Errors | Track the number of accessibility issues through auditing, including missing image alt text and color contrast issues |
| Semantic HTML Usage | Ensure the correct use of HTML tags like H1 and H2 tags to gauge readability and skimmability |
| Keyboard Navigation | Confirm whether all links, buttons, and other interactive elements are accessible using only the keyboard |
| Metadata Completeness | Track whether pages contain complete title tags and meta descriptions to assist both search engine results and screen reading capabilities |

Speed and Experience

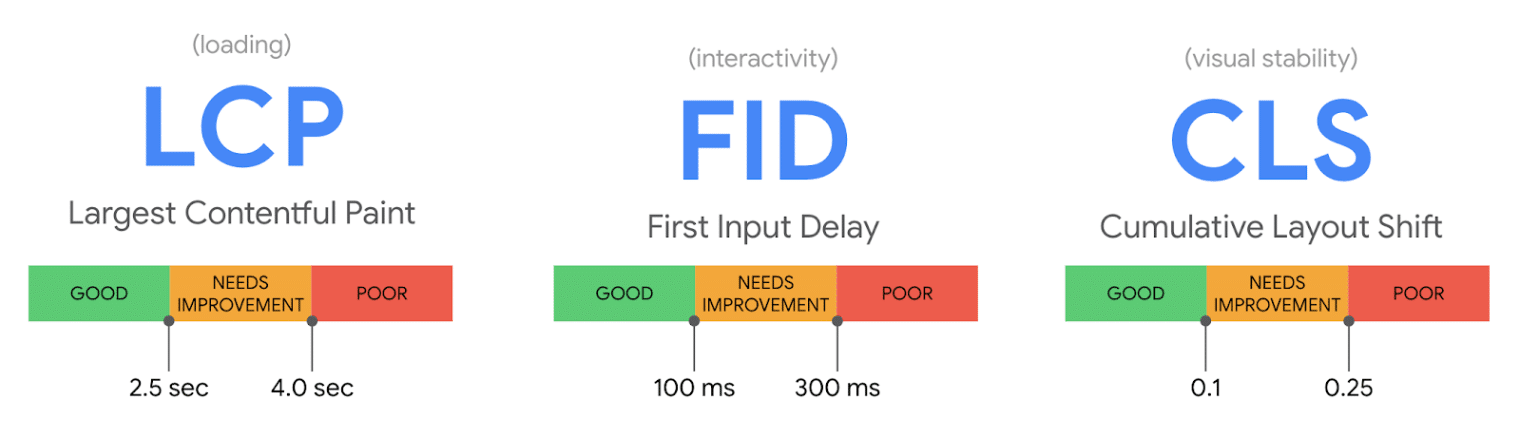

You can primarily use Core Web Vitals to measure site speed and the user experience.

Metrics to track here include:

| Metric | Impact |
| --- | --- |
| Largest Contentful Paint (LCP) | Measures the time it takes for the largest content element on a page to appear in people’s browsers; should be under 2.5 seconds |
| Interaction to Next Paint (INP) | Tracks the responsiveness of a page to different user interactions, like clicks and taps; aim for under 200 milliseconds |
| Cumulative Layout Shift (CLS) | Gives the total sum of unexpected layout shifts that could occur as the page loads; try to keep this under 0.1 |
| Time to First Byte (TTFB) | The total amount of time it takes for a browser to receive the first byte of content from a server |
| Page Load Time | The total amount of time it takes for a page to fully render and load |
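To make the three Core Web Vitals targets in the table concrete, here is a minimal Python sketch that flags which metrics miss them. The metric keys (`lcp_seconds`, `inp_ms`, `cls`) and the function name are hypothetical, and the measurements are assumed to come from whatever speed tool you already use.

```python
# Targets copied from the table above. The dictionary keys are this sketch's
# own naming, not fields from any particular tool.
TARGETS = {
    "lcp_seconds": 2.5,  # Largest Contentful Paint
    "inp_ms": 200,       # Interaction to Next Paint
    "cls": 0.1,          # Cumulative Layout Shift
}


def failing_vitals(measurements):
    """Return the names of metrics whose measured value exceeds its target."""
    return sorted(
        name
        for name, value in measurements.items()
        if name in TARGETS and value > TARGETS[name]
    )


if __name__ == "__main__":
    page = {"lcp_seconds": 3.1, "inp_ms": 150, "cls": 0.25}
    print(failing_vitals(page))
```

Running a check like this over every template on your site (homepage, category page, article page) is usually more useful than testing a single URL.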

Mobile Usability

Many search engine users today rely on their mobile devices to conduct searches, making mobile optimization a priority to ensure more people find you online.

Track the following to gauge mobile usability:

| Metric | Impact |
| --- | --- |
| Mobile Usability Errors | Track errors in Google Search Console’s Mobile Usability Report, such as “Clickable elements too close together” and “Text too small to read” |
| Viewport Configuration | Measures the percentage of pages with a proper viewport meta tag indicating responsive design |
| Touch Target Size and Spacing | Tracks the sizing and spacing of links and buttons for touch input on mobile screens |
| Content Parity | Ensures that all features and content accessible on desktop devices translate to mobile versions |
| Mobile Organic Traffic and Engagement | Bounce rate, conversions, and session duration on mobile might differ from those on desktop sites |

Structured Data Visibility

Structured data helps search engines better “understand” a page’s content so they can match it more appropriately with people’s search queries.

Metrics to track here include:

| Metric | Impact |
| --- | --- |
| Rich Results Eligibility | Using the Google Rich Results Test tool, you can see the number or percentage of pages that qualify for rich results like FAQs and product information |
| Structured Data Errors and Warnings | Use Google Search Console’s Enhancement Reports or Google’s Schema Markup Validator to identify any issues with structured data |
| Organic Impressions | Google Search Console’s Performance report and Search Appearance filter can indicate impressions from rich results |
| Click-Through Rate (CTR) | Check to see the CTR for rich results instead of standard listings |

Duplicate Content

Google could have a difficult time selecting the right content to rank in results if multiple pages feature duplicate content.

To prevent duplicate content from impacting technical SEO, measure:

| Metric | Impact |
| --- | --- |
| Number of Duplicate Pages | Counts the total number of pages that appear to have nearly or entirely identical content |
| Canonical Tag Issues | The number of pages with incorrect, missing, or conflicting rel="canonical" tags indicating the preferred version for Google to rank |
| Pages Excluded/Not Indexed | Google Search Console’s Coverage report’s “Excluded” section can display pages not indexing for various reasons |
| Parameter URL Issues | Identifies the number of duplicate URLs that tracking parameters produce and indicates how they are handled |
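To illustrate the parameter URL metric, the sketch below groups crawled URLs that differ only by tracking parameters. The parameter list (`utm_*`, `gclid`, `fbclid`) and the function names are assumptions for illustration; swap in whatever parameters your own campaigns append.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumed tracking parameters; adjust to match your own analytics setup.
TRACKING_PREFIXES = ("utm_",)
TRACKING_NAMES = {"gclid", "fbclid"}


def strip_tracking(url):
    """Return the URL without tracking parameters (the fragment is dropped too)."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if not key.lower().startswith(TRACKING_PREFIXES)
        and key.lower() not in TRACKING_NAMES
    ]
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(kept), ""))


def group_duplicates(urls):
    """Map each cleaned URL to its variants, keeping only groups with 2+ URLs."""
    groups = {}
    for url in urls:
        groups.setdefault(strip_tracking(url), []).append(url)
    return {base: variants for base, variants in groups.items() if len(variants) > 1}
```

Each group that comes back is a candidate for a canonical tag pointing at the cleaned URL.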

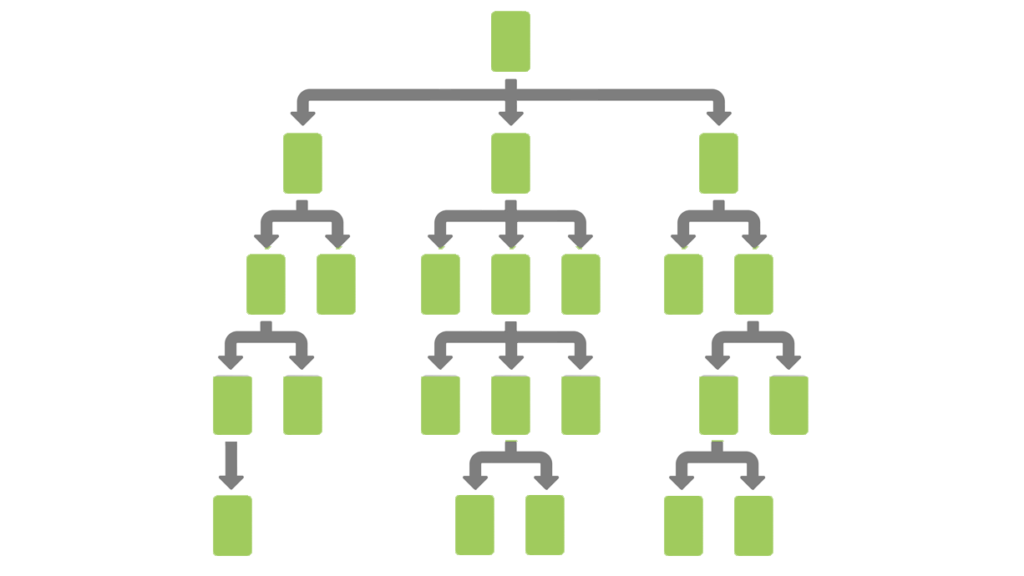

Site Architecture

Your technical SEO audit checklist should also cover site architecture, which determines how page structure and linking can affect crawlability and indexability, as well as the user experience.

Measure the following for this element:

| Metric | Impact |
| --- | --- |
| Crawl Depth | Tracks the number of clicks needed to reach core pages via the homepage, gauging navigability |
| Crawl Errors | Measures the number of client-side 4xx errors and server-side 5xx errors through Google Search Console |
| Indexed vs. Total Pages | Indicates the percentage of valuable pages that Google has indexed out of all pages |
| Internal Link Count and Distribution | Provides metrics on the methods used to distribute internal links on a site |
| Orphan Pages | Reveals the number of pages without internal links leading back to them, which could limit discoverability |
| Sitemaps and Robots.txt | Checks for robots.txt errors and XML sitemaps to enable crawling |
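Crawl depth and orphan pages are easier to picture with a small example. The sketch below assumes you have exported your internal links as a simple mapping of page to linked pages (most crawlers can produce something similar); the function names and sample paths are hypothetical.

```python
from collections import deque


def crawl_depths(links, home):
    """Breadth-first search: clicks needed to reach each page from the homepage."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def orphan_pages(links, all_pages, home):
    """Pages that no other page links to (the homepage itself is excluded)."""
    linked = {target for targets in links.values() for target in targets}
    return sorted(page for page in all_pages if page not in linked and page != home)


if __name__ == "__main__":
    links = {"/": ["/blog", "/services"], "/blog": ["/blog/post-1"], "/services": []}
    pages = {"/", "/blog", "/services", "/blog/post-1", "/old-landing"}
    print(crawl_depths(links, "/"))
    print(orphan_pages(links, pages, "/"))
```

The list of all pages would typically come from your sitemap or CMS, since a crawler that only follows links can never find an orphan on its own.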

One of the reasons why it can be so difficult to figure out how to boost your SERP ranking is because it doesn’t just depend on your SEO strategy. There are several other factors that influence search behavior.

User Intent and Query Types

User intent and query types can really affect your SEO. If you want to successfully optimize your content for search engines, you have to understand the goal the searcher has in mind when typing in a query. Once you do, it will empower you to create more relevant content, improve user satisfaction and engagement, and ultimately drive higher conversions.

Voice Search is Integral

Voice search is another factor to consider. More and more people are using voice search technology like Siri, Alexa, or Google Assistant to search the web for them. That’s why using natural language in your content is so important. Focus on using conversational keywords, optimizing for question-based phrases, and ensuring that your content is mobile-friendly.

The Impact of AI on Search Patterns

Of course, the rise of AI is also playing a role in search patterns. The more it evolves, the more intuitive and efficient it becomes at improving search and user experiences.

AI-driven features also play a role in how Google crawls and indexes content. If you want your site to succeed in the world of AI, you need to focus on high-quality, contextually relevant content that will satisfy search engines and end-users alike.

40 Common Technical SEO Mistakes

In a technical SEO audit, there are many potential mistakes to avoid.

Here are some things to watch for in your technical SEO audit checklist, according to category and priority:

Crawlability and Indexing

Critical Priority

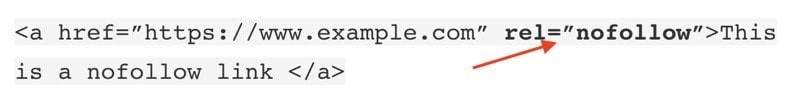

Mistake #1: There are Too Many Nofollow Links

Formerly, Google treated nofollow as a command and refrained from crawling or indexing through those links. Now, Google considers nofollow a hint.

These links carry a rel="nofollow" attribute, which asks search engines not to follow them or pass PageRank through them, so they generally do not help the linked page’s rankings.

Common scenarios for nofollow links include:

- Not endorsing a linked page (use rel="nofollow")

- Sponsored or paid links (use rel="sponsored")

- Affiliate links (use rel="sponsored")

- User-generated content (use rel="ugc")

Too many nofollow links can prevent search engines from following important links on your site.

Mistake #2: There are Too Many Nofollow Exit Links

Two incorrect uses of nofollow include applying it to all external links (ineffective and possibly detrimental) and using it on internal links (inferior to other methods like robots meta tags for controlling crawling and indexing).

Overall, it can negatively impact SEO. Unnecessary nofollow tags can signal to search engines that you don’t want them to crawl or index valuable pages.

As part of a technical site audit, you can check for nofollow attributes by inspecting the HTML code. For instance, you can right-click on a link, choose “Inspect” or “Inspect Element” in a browser, and see whether the link carries rel="nofollow".
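Inspecting links one at a time gets tedious on larger pages. As a rough sketch, the Python below uses the standard library’s `html.parser` to list every link on a page that carries nofollow. The class and function names are hypothetical, and the HTML is assumed to be a string you have already downloaded.

```python
from html.parser import HTMLParser


class NofollowAudit(HTMLParser):
    """Collects the href of every <a> tag whose rel attribute includes nofollow."""

    def __init__(self):
        super().__init__()
        self.flagged = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attributes = dict(attrs)
        # rel can hold several space-separated values, e.g. "sponsored nofollow".
        rel_values = (attributes.get("rel") or "").lower().split()
        if "nofollow" in rel_values:
            self.flagged.append(attributes.get("href"))


def nofollow_links(html):
    parser = NofollowAudit()
    parser.feed(html)
    return parser.flagged
```

If internal URLs show up in the result, those are the first ones to review.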

High Priority

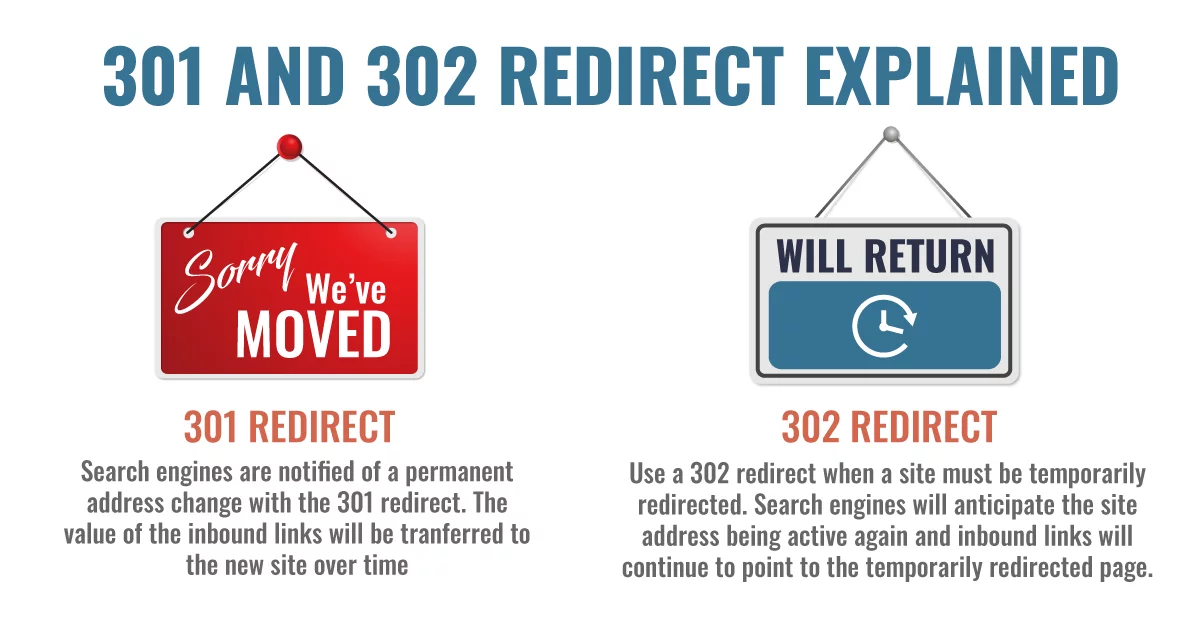

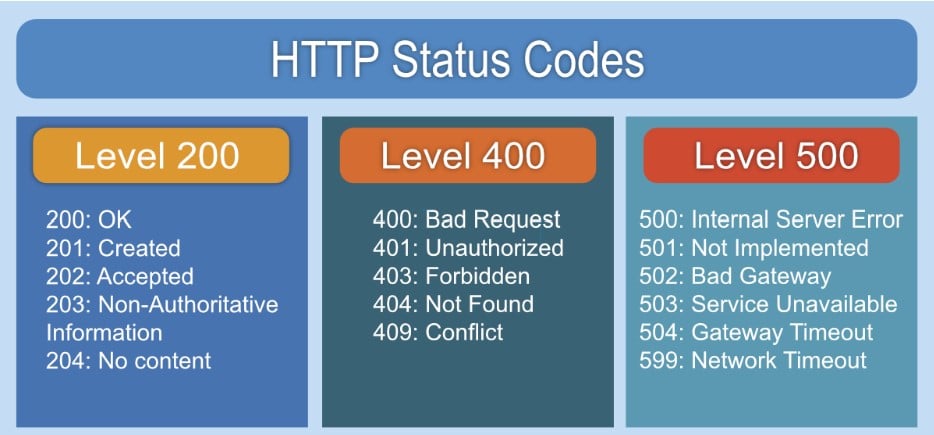

Mistake #3: Incorrect Use of 301 & 302 Redirects

Know the difference between a 301 redirect and a 302 redirect and when to use each of them. Incorrect redirects can confuse search engines and lead to indexing issues.

- Use 301 redirects for permanent page replacements, redirecting a page to another location, and letting search engines know they can stop crawling or indexing this page.

- Use 302 redirects for temporary situations, letting the indexers know this page is undergoing some changes but it will be back online soon.

301 Redirects vs 302 Redirects
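One practical way to audit this is to trace each redirect and note where a temporary 302 is sitting in what should be a permanent path. The sketch below works on a hypothetical `responses` mapping of URL to (status code, redirect target), which stands in for the status data your crawler exports; nothing here makes a network request.

```python
def trace_redirects(start, responses, max_hops=10):
    """Follow 301/302 hops through `responses` (url -> (status, target)).

    Returns the list of hops taken and the URL where the trace stopped.
    """
    hops = []
    url = start
    while url in responses and len(hops) < max_hops:
        status, target = responses[url]
        if status not in (301, 302):
            break
        hops.append((url, status, target))
        url = target
    return hops, url


def temporary_hops(hops):
    """URLs in the chain that answer with a temporary (302) redirect."""
    return [source for source, status, _ in hops if status == 302]


if __name__ == "__main__":
    responses = {
        "/old": (301, "/interim"),
        "/interim": (302, "/new"),
        "/new": (200, None),
    }
    hops, final_url = trace_redirects("/old", responses)
    print(len(hops), final_url, temporary_hops(hops))
```

The `max_hops` limit is there so a redirect loop ends the trace instead of running forever.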

Mistake #4: Your Server Header Has the Wrong Code

While you’re performing your technical SEO audit, be sure to check your Server Header. Multiple tools on the internet will serve as a Server Header Checker.

The server header code sends information to search engines about your website, so ensure it’s accurate.

These tools will tell you what status code is being returned for your website. Pages with a 4xx or 5xx status code are marked as problem sites and search engines will shy away from indexing them.

If you find out that your server header is returning a problem code, you’ll want to go into the backend of your site and fix it so that your URL status code is a positive one.

Medium Priority

Mistake #5: You’re Using Meta Refresh

Meta refresh is an outdated way of redirecting users to another page.

Google does not recommend using the meta refresh and notes that it will not have the same benefits as a 301. Use proper 301 redirects instead for a smoother user experience and SEO benefits.

Moz has this to say about them: “They are usually slower, and not a recommended SEO technique. They are most commonly associated with a five-second countdown with the text “If you are not redirected in five seconds, click here.” Meta refreshes do pass some link juice, but are not recommended as an SEO tactic due to poor usability and the loss of link juice passed.”

Mistake #6: You’re Not Using Custom 404 Pages

Someone might link to your site with an invalid URL. It happens to the best of us, and unfortunately, causes SEO problems in the process.

When that does happen, don’t show the visitor a generic 404 error message with a white background.

Instead, deliver a user-friendly 404 error message. Consider your audience. If they’re tech-savvy, keep 404 explanations concise. For others, explain the error in a friendly manner.

Graphics are great, but include essential info in copy or alt text. Provide a link to your homepage, a search bar, and a clear CTA so users can find the article or page they were hoping to access.

Mistake #7: Using Soft 404 Errors

When a search engine sees a 404 status code, it knows to stop crawling and indexing that specific page.

However, if you use soft 404 errors, a code 200 is returned to the indexer. This code tells the search engine that this page is working as it should. Since it thinks that the page is working correctly, it will continue to index it.

In a technical SEO audit, you can check for soft 404s with Google Search Console’s “Indexing” > “Pages” report, which will list all “Soft 404s.”
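If you want a quick check of your own before Google reports anything, a simple heuristic is to look for pages that return a 200 but read like an error page. This is only a sketch: the phrase list and function name are assumptions, and it will miss soft 404s that use different wording.

```python
# Assumed wording; extend with the text your own error template uses.
NOT_FOUND_PHRASES = ("page not found", "nothing was found", "no longer available")


def looks_like_soft_404(status_code, body_text):
    """True when a page answers 200 but its text reads like an error page."""
    if status_code != 200:
        return False  # a real 404 or 410 is not a *soft* 404
    text = body_text.lower()
    return any(phrase in text for phrase in NOT_FOUND_PHRASES)
```

Any page it flags should either return a real 404 (or 410) or be given genuine content.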

Low Priority

Mistake #8: Upper Case vs. Lower Case URLs

Ensure consistency in URL casing (use lowercase) to avoid potential indexing issues.

Search engines may treat uppercase and lowercase URLs differently. For example, you might have one page that looks like “www.example.com/SeoStructure” and another identical page that uses “www.example.com/seo-structure” with proper casing and hyphenation.

Use this rewrite module to fix the problem.
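Whatever mechanism you use, the logic behind a lowercase rewrite is small. The Python sketch below shows the idea with hypothetical function names: it lowercases the host and path, leaves the query string alone (parameter values can be case-sensitive), and returns the URL a request should be 301-redirected to.

```python
from urllib.parse import urlsplit, urlunsplit


def lowercase_url(url):
    """Lowercase the host and path; leave the query string and fragment untouched."""
    parts = urlsplit(url)
    return urlunsplit(
        (parts.scheme, parts.netloc.lower(), parts.path.lower(), parts.query, parts.fragment)
    )


def redirect_target(url):
    """Return the lowercase URL to 301 to, or None if the URL is already lowercase."""
    normalised = lowercase_url(url)
    return normalised if normalised != url else None
```

Note that lowercasing alone does not add hyphens, so a URL like “/SeoStructure” would still need its own redirect to “/seo-structure”.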

Speed and Experience

Critical Priority

Mistake #9: Messy URLs on Webpages

Clean up URLs and include relevant keywords for better readability and SEO. Messy URLs can be confusing for both users and search engines.

Don’t end up with messy, unintelligible URLs like “index.php?p=367595.” Try cleaning them up and adding relevant keywords to your URLs.

Mistake #10: Poor Core Web Vitals

Core Web Vitals (loading speed, responsiveness, and stability) impact both user experience and SEO.

Optimize your site by reducing code, using a CDN (content delivery network), and optimizing images to ensure a smooth user experience and search engine boost.

Some of the metrics to pay attention to are:

- Largest Contentful Paint (LCP) – the time it takes for your largest element to load

- Interaction to Next Paint (INP) – the time it takes for your website to respond to user interactions

- Cumulative Layout Shift (CLS) – tracks unexpected layout shifts during your page loading time

Site speed is incredibly important so if your numbers are low, you need to figure out how to improve them. Things like “lazy loading,” which defers loading non-essential content to speed up overall site speed, or using CDNs will help improve your site speed without affecting your user experience.

This Google tool will tell you essentially how your website is performing, so use it to your advantage.

High Priority

Mistake #11: Hidden Text

Avoid hidden text that bloats page size and hurts user experience.

Things like terms & conditions or location info can be meant for a single page, but end up embedded in all site pages.

Make sure to scan your site using a tool like Screaming Frog to make sure there’s no hidden text.

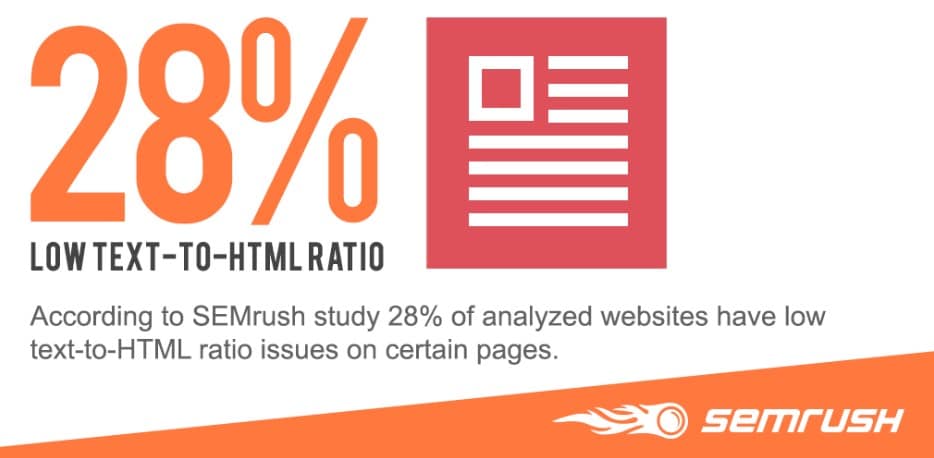

Mistake #12: Low Text to HTML Ratio

Increase text content and reduce unnecessary code to improve page load speed and crawlability for search engines. Search engines analyze the content on your website, so having a healthy balance of text and code is important.

If you have too much code on your site, it can cause pages to load slowly. There have also been some recent indexing issues with JavaScript-heavy websites, so you’ll definitely want to make sure that your text outweighs your HTML code.

This problem has an easy solution: either remove unnecessary code or add more on-page text content. You can also remove or block any old or unnecessary pages.

You can also employ practices like server-side rendering or dynamic rendering to ensure that all of your critical content is accessible to search engines. This will improve the likelihood of your content showing up in search like it should.
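SEO tools calculate this ratio in slightly different ways, so treat the following as one plausible definition rather than the formula any particular tool uses: visible text characters divided by total HTML characters, with script and style contents excluded. The class and function names are hypothetical.

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collects text nodes, skipping anything inside <script> or <style>."""

    SKIPPED = {"script", "style"}

    def __init__(self):
        super().__init__()
        self.chunks = []
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.chunks.append(data)


def text_to_html_ratio(html):
    """Visible text length divided by total HTML length (0.0 for empty input)."""
    if not html:
        return 0.0
    parser = VisibleText()
    parser.feed(html)
    parser.close()
    # Collapse runs of whitespace so indentation does not count as text.
    text = " ".join(" ".join(parser.chunks).split())
    return len(text) / len(html)
```

Comparing the ratio across pages built from the same template is usually more telling than any single number.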

Mistake #13: Your Website Has a Slow Load Time

If your website takes a long time to load, visitors will get impatient and bounce. This not only hurts user experience but also sends negative signals to search engines.

Google itself has said:

“Like us, our users place a lot of value in speed — that’s why we’ve decided to take site speed into account in our search rankings. We use a variety of sources to determine the speed of a site relative to other sites.”

Some ways to increase site speed include:

- Enabling compression – You’ll have to talk to your web development team about this. It’s not something you should attempt on your own as it usually involves updating your web server configuration. However, it will improve your site speed.

- Optimizing images – Many sites have images that are 500 KB or more in size. Some of those images could be optimized so that they’re much smaller without sacrificing image quality. When your site has fewer bytes to load, it will render the page faster.

- Leveraging browser caching – If you’re using WordPress, you can grab a plugin that will enable you to use browser caching. That helps users who revisit your site because they’ll load resources (like images) from their hard drive instead of over the network.

- Using a CDN – A content delivery network (CDN) will deliver content quickly to your visitors by loading it from a node that’s close to their location. The downside is the cost. CDNs can be expensive. But if you’re concerned about user experience, they might be worth it.

Medium Priority

Mistake #14: Too Many Plugins

While plugins can enhance functionality and design, an excess of them can bloat your website and slow down its performance.

Each plugin adds code and potential compatibility issues, impacting page load times and user experience.

A sluggish website frustrates users and can negatively impact your SEO. Regularly review your plugins and remove any that are unnecessary or have lighter alternatives.

Some specific symptoms of too many plugins include:

- Slow website speed and long loading times

- High server resource usage

- Frequent database errors

- Site crashes

- Sluggish admin dashboards

- Poor mobile experience

- Maintenance issues

Mobile and Accessibility

Critical Priority

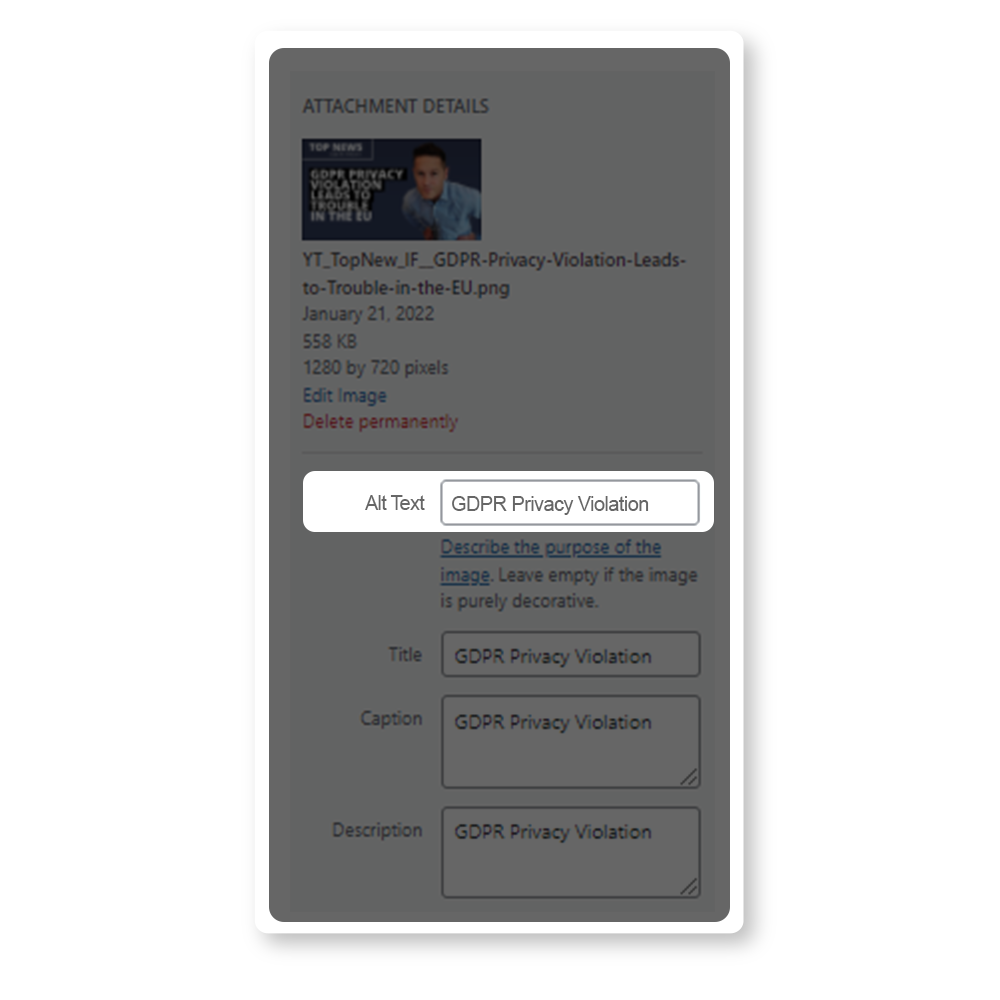

Mistake #15: Missing Alt Text Tags

Alt text is great for two reasons.

1. It makes your site more accessible. Visitors who are visually impaired can use it to understand what the images on your site show and why they are there.

2. It provides more space for text content that you can use to help your site get ranked.

You’ll want to add alt text to images for accessibility and SEO. Describe the image content and incorporate relevant keywords.
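To find the gaps, you can scan a page’s HTML for images with no alt attribute at all. The sketch below uses Python’s built-in `html.parser`; the names are hypothetical. It deliberately does not flag `alt=""`, since an empty alt is the accepted way to mark purely decorative images.

```python
from html.parser import HTMLParser


class AltAudit(HTMLParser):
    """Collects the src of every <img> that has no alt attribute."""

    def __init__(self):
        super().__init__()
        self.missing = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attributes = dict(attrs)
        if "alt" not in attributes:
            self.missing.append(attributes.get("src"))


def images_missing_alt(html):
    parser = AltAudit()
    parser.feed(html)
    return parser.missing
```

Whether an existing alt text is actually descriptive still needs a human review.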

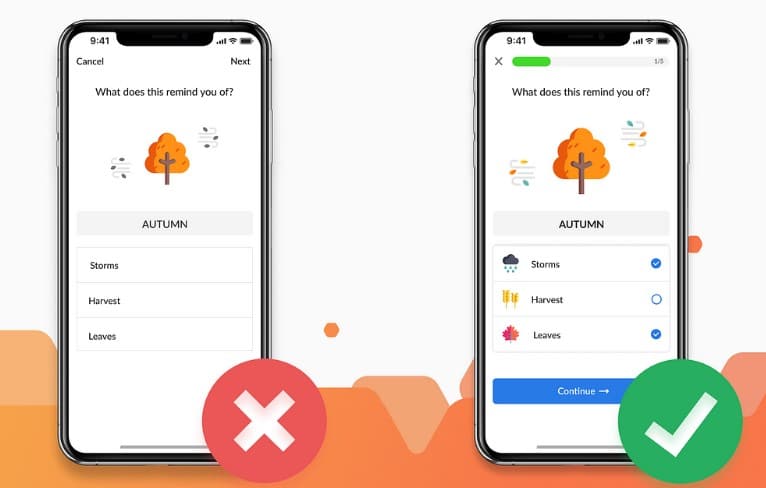

Mistake #16: Poor Mobile Experience

These days, this one’s a no-brainer.

With most web searches now happening on mobile devices, Google prioritizes websites that offer a great mobile experience.

Make sure your website is responsive and easy to navigate on smartphones and tablets. To properly optimize for mobile, you must take everything from site design and structure of your page to page speed into consideration.

High Priority

Mistake #17: Your Website Has Poor Navigation

Confusing website navigation frustrates users and makes it difficult for search engines to understand your website structure. A clear and simple navigation menu is essential for both user experience and SEO.

Some indicators that your website suffers from poor navigation could include:

- Complex or overloaded menus

- Hidden or non-standard menus

- Broken links and 404s

- Inconsistent structure across the site

- Misleading or vague labels

- High bounce rates due to difficulty navigating

Mistake #18: Incorrect Language Declaration

Ideally, you want your content delivered to the right audience, which also means reaching those that speak your language.

Declare your website’s default language clearly to help Google deliver your content to the right audience and improve international SEO.

To check whether you’ve done this properly, use this list when verifying your language and country inside of your site’s source code.

Site Structure and Navigation

Critical Priority

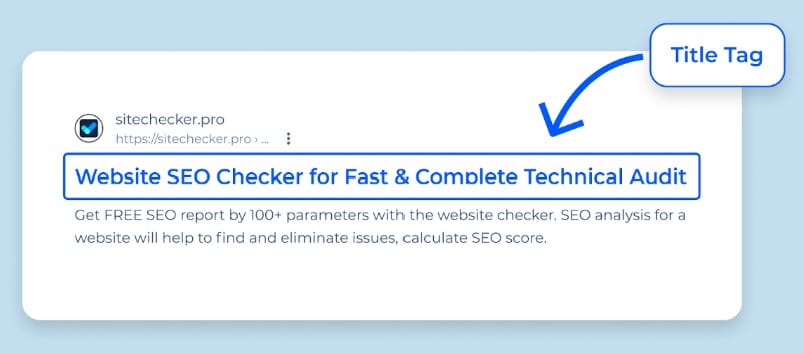

Mistake #19: Title Tag Issues

Title tags can have a variety of issues that affect SEO, including:

- Duplicate tags

- Missing title tags

- Too long or too short title tags

Your title tags, or page titles, help both users and search engines determine what your page is about.

Craft clear, concise title tags (50-70 characters) with relevant keywords to inform users and search engines. Include modifiers like “Best” or “Guide” for better visibility.

Optimize your titles with a keyword research tool: To ensure your titles are truly optimized, consider using a tool like DinoRANK keyword research tool.

This tool can help you identify the most relevant and effective keywords to include in your titles, enhancing both the visibility and SEO impact of your pages.

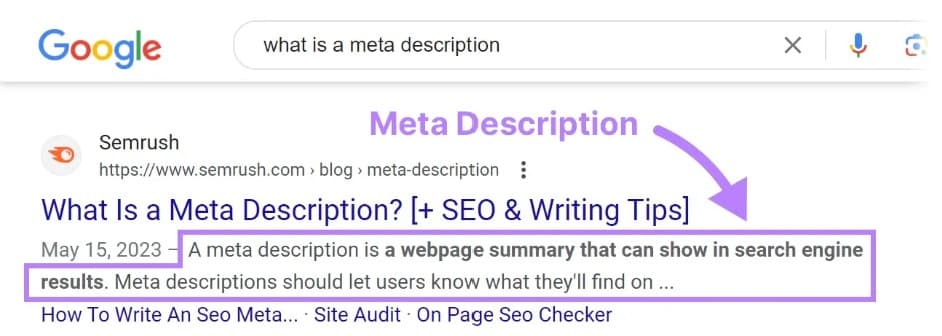

Mistake #20: Not Using Meta Descriptions

A page’s meta description is a short snippet that summarizes what your page is about.

Search engines generally display them when the searched-for phrase appears in the description.

Write compelling meta descriptions (120-160 characters) that summarize your page and include relevant keywords.
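Length checks like these are easy to automate across a whole site. The sketch below uses the 120-160 character range above and the 50-70 character title range from the title tag section; the function names are hypothetical, and the titles and descriptions are assumed to come from a crawl export.

```python
TITLE_RANGE = (50, 70)           # characters, from the title tag guidance in this article
DESCRIPTION_RANGE = (120, 160)   # characters, from the meta description guidance


def length_issue(text, limits):
    """Return 'missing', 'too short', 'too long', or None when the length is fine."""
    low, high = limits
    if not text or not text.strip():
        return "missing"
    length = len(text.strip())
    if length < low:
        return "too short"
    if length > high:
        return "too long"
    return None


def audit_snippet(title, description):
    """Check one page's title tag and meta description against the ranges above."""
    return {
        "title": length_issue(title, TITLE_RANGE),
        "description": length_issue(description, DESCRIPTION_RANGE),
    }
```

Character counts are only a proxy, since search engines truncate by pixel width, so treat borderline results as prompts for a manual look.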

Mistake #21: Improper Move to New Website or URL Structure

Updating and moving websites is an important part of keeping a business fresh and relevant, but if the transition isn’t managed properly, a lot could go wrong.

Manage website migrations carefully to avoid traffic loss. Use 301 redirects to point to the new URLs.

For more on how to migrate your site and maintain your traffic, check out the full guide here.

High Priority

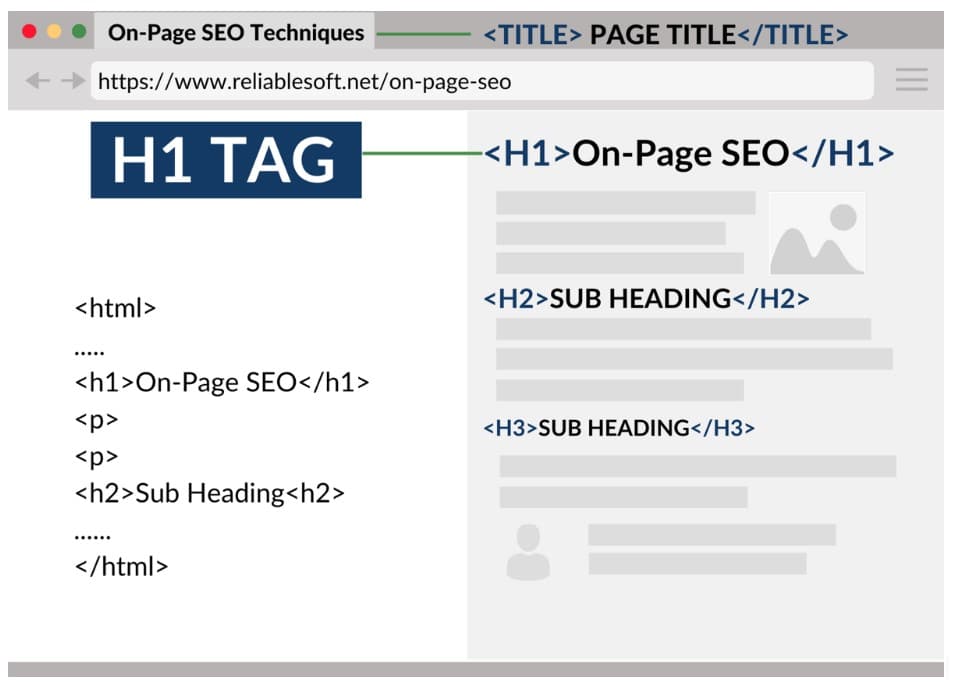

Mistake #22: H1 Tag Issues

While title tags appear in search results, H1 tags are visible to users on your page. The two should be different.

Each page needs a unique H1 tag (ideally under 60 characters) that reflects your target keyword and content.

Mistake #23: Broken Images

Broken images are common and often occur due to site or domain changes or a change in the file after publishing.

Fix broken images promptly to prevent higher bounce rates and negative SEO impact (search engines may view broken images as a sign of a poorly maintained website).

If you come across any of these on your site, make sure you troubleshoot fast.

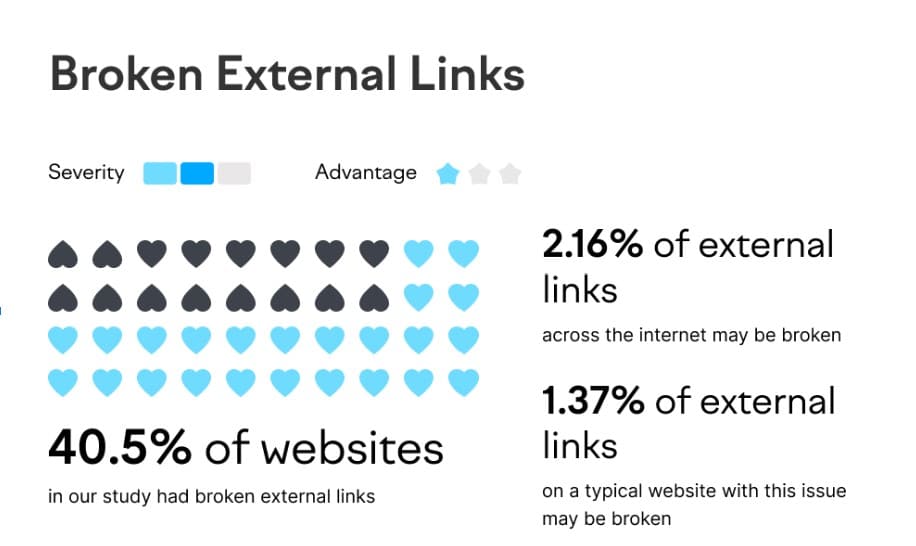

Mistake #24: Broken External Links on Your Website

Just like internal links, you’ll want to ensure all external links are working too. Broken external links can hurt your credibility and make your site look outdated.

Use SEO tools to identify and fix broken external links. Unfortunately, fixing broken backlinks isn’t quite as easy. Because these are hosted on outside sites, your first line of defense should be to contact the site the link came from and ask them to remove it.

Mistake #25: Questionable Link-Building Practices

While link building itself gives an obvious boost in search rankings, doing so in a questionable manner could result in penalties.

Beware of “black hat” strategies like link exchanges. Yes, they’ll get you a lot of links fast, but they’ll be low quality and won’t improve your rankings. Instead, focus on high-quality backlinks from reputable sources.

Medium Priority

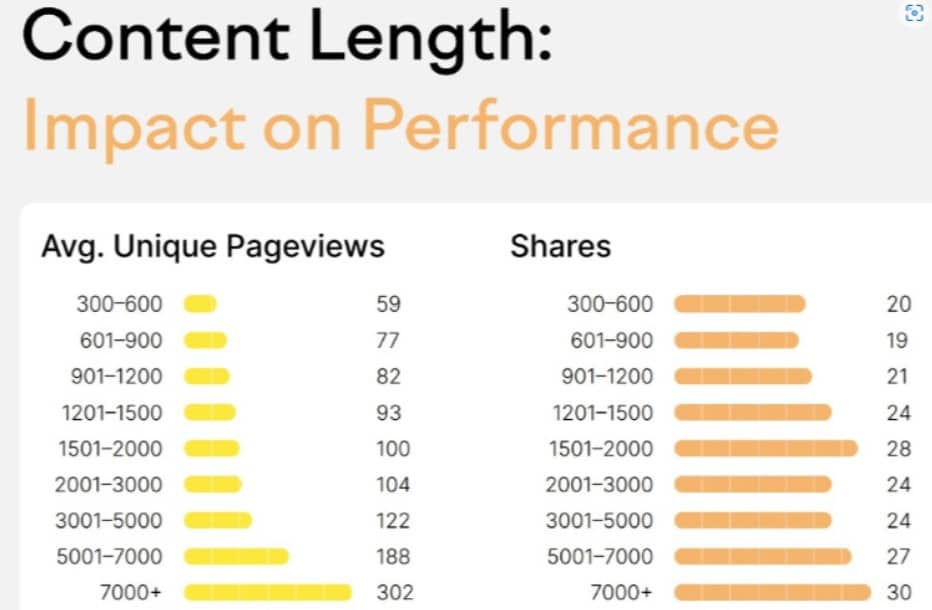

Mistake #26: Low Word Count

Aim for content that comprehensively addresses user needs. While word count isn’t a direct ranking factor, longer content typically performs better as it aids search engines in understanding user intent.

Aim for at least 300 words for regular posts and 900 words for cornerstone content. Product pages can manage with 200 words. However, the sweet spot for blog length appears to be between 1,760 and 2,400 words, with posts over 1,000 words consistently delivering stronger results.

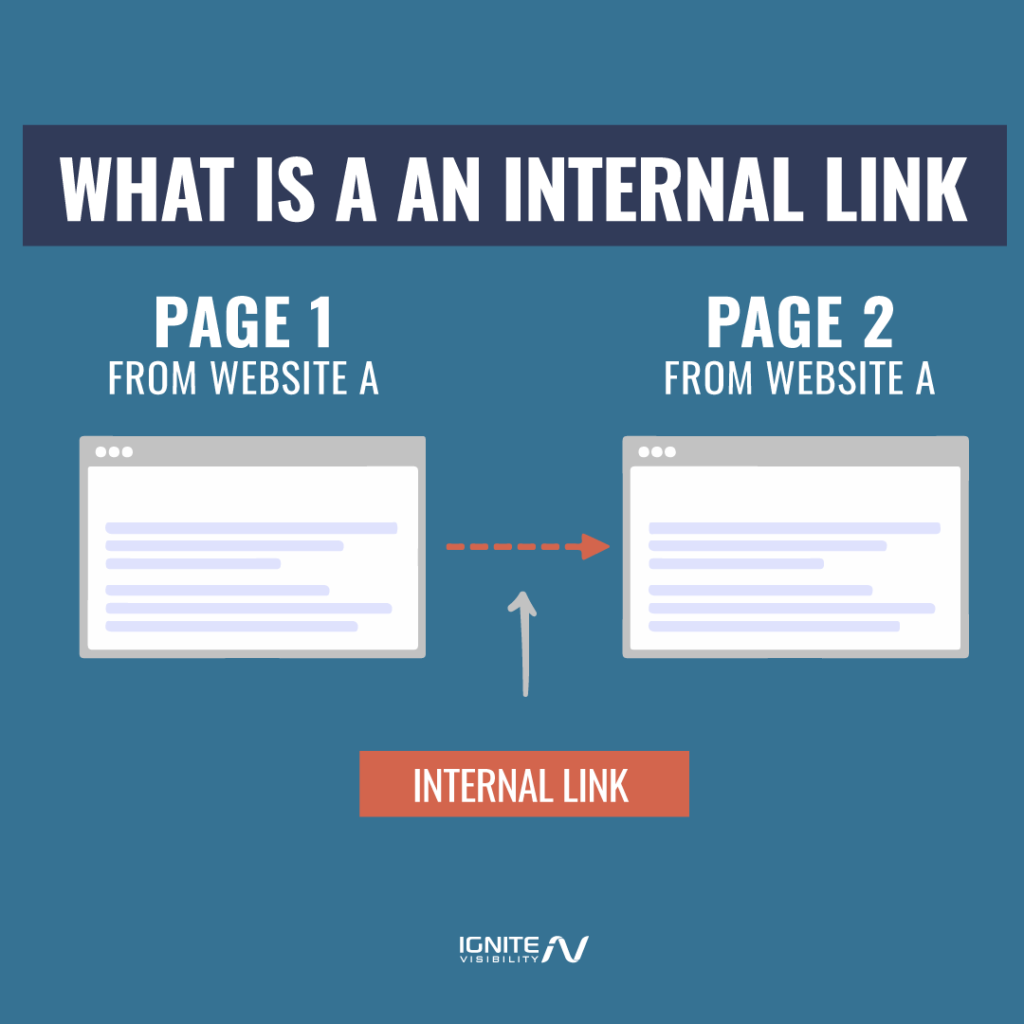

Mistake #27: Poor Internal Linking Structure

Internal linking ranks high in effective SEO strategies.

Strategically link your website’s pages using relevant anchor text to improve navigation and SEO (search engines consider internal linking an indicator of how well your content is organized).

Mistake #28: Broken Internal Links on Your Website

When crawling your website for indexing purposes, Google depends on the internal links within your page. If these links are broken, it won’t know where to go next.

Broken internal links also tank your credibility. Why would users want to use your website if it’s full of 404 error messages?

Use SEO tools to find and fix broken internal links so search engines can crawl your site without issues (broken internal links can lead to search engines getting stuck and not indexing all your important content).
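
Dedicated SEO tools do this at scale, but the underlying check is simple enough to sketch. The example below uses only Python's standard library, collects the internal links from one page's HTML, and reports any that return a status code of 400 or higher. The function names and the example.com URLs are assumptions for illustration, and `http_status` needs network access.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse
import urllib.error
import urllib.request


class LinkCollector(HTMLParser):
    """Collects the href value of every <a> tag in a page."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.hrefs.append(href)


def internal_links(page_url, html):
    """Return absolute URLs of links that stay on the same host as page_url."""
    collector = LinkCollector()
    collector.feed(html)
    host = urlparse(page_url).netloc
    absolute = [urljoin(page_url, href) for href in collector.hrefs]
    return [url for url in absolute if urlparse(url).netloc == host]


def http_status(url):
    """Fetch the status code with a HEAD request (needs network access)."""
    request = urllib.request.Request(url, method="HEAD")
    try:
        with urllib.request.urlopen(request, timeout=10) as response:
            return response.status
    except urllib.error.HTTPError as error:
        return error.code


def broken_links(urls, status_of=http_status):
    """Return the URLs whose status code is 400 or higher."""
    return [url for url in urls if status_of(url) >= 400]
```

Passing the status lookup in as `status_of` lets you try the logic against a fixed table of results before pointing it at a live site.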

Low Priority

Mistake #29: You’re Not Using Breadcrumb Menus

Put breadcrumb links on your web pages. That’s an especially great idea if you’re running an ecommerce site with lots of categories and subcategories.

They look like this: Categories > Electronics > Mobile Devices > Smartphones
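
If your URLs already mirror your category structure, you can often derive the trail straight from the path. This is a minimal sketch under that assumption; the function name, the example path, and the `root` label are hypothetical.

```python
def breadcrumbs_from_path(path, root="Categories"):
    """Turn a URL path such as /electronics/mobile-devices into a trail."""
    segments = [segment for segment in path.split("/") if segment]
    # Replace hyphens with spaces and capitalize each word for display.
    labels = [segment.replace("-", " ").title() for segment in segments]
    return " > ".join([root] + labels)


print(breadcrumbs_from_path("/electronics/mobile-devices/smartphones"))
```

Sites whose URLs don't follow their category hierarchy would need to pull the labels from their CMS instead.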

Sitemap and Robots

Critical Priority

Mistake #30: Your Sitemap is Outdated, Broken or Missing

Sitemaps act as a roadmap for search engines, helping them discover and index all the important pages on your website.

They are super easy to make. In fact, most web design or hosting sites will prepare one for you. But this is also an aspect where your technical SEO can fail.

Here are some signs of an outdated or broken sitemap:

- Google Search Console errors such as “Submitted URL not found (404)”

- Missing content when publishing new pages or blog posts

- Redirected or broken links

- Incorrect tags

- URL discrepancies, such as HTTP instead of HTTPS or www vs. non-www versions

If your sitemap is outdated or missing, search engines might miss crucial pages, hurting your ranking.
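
The URL discrepancies in that list are easy to check for yourself. The sketch below parses a standard XML sitemap and flags entries that use HTTP or a host other than your preferred one. The function names and the `preferred_host` parameter are assumptions for this example, not part of any particular tool.

```python
import xml.etree.ElementTree as ET
from urllib.parse import urlparse

# Namespace used by standard XML sitemaps.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(xml_text):
    """Return every <loc> value listed in a sitemap document."""
    root = ET.fromstring(xml_text)
    return [loc.text.strip() for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)]


def url_discrepancies(urls, preferred_host):
    """Flag URLs that are not HTTPS or not on the preferred host."""
    problems = []
    for url in urls:
        parts = urlparse(url)
        if parts.scheme != "https":
            problems.append((url, "not HTTPS"))
        elif parts.netloc != preferred_host:
            problems.append((url, "host differs from preferred version"))
    return problems
```

For a site that prefers the www version, you would call `url_discrepancies(urls, "www.example.com")` and review whatever comes back.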

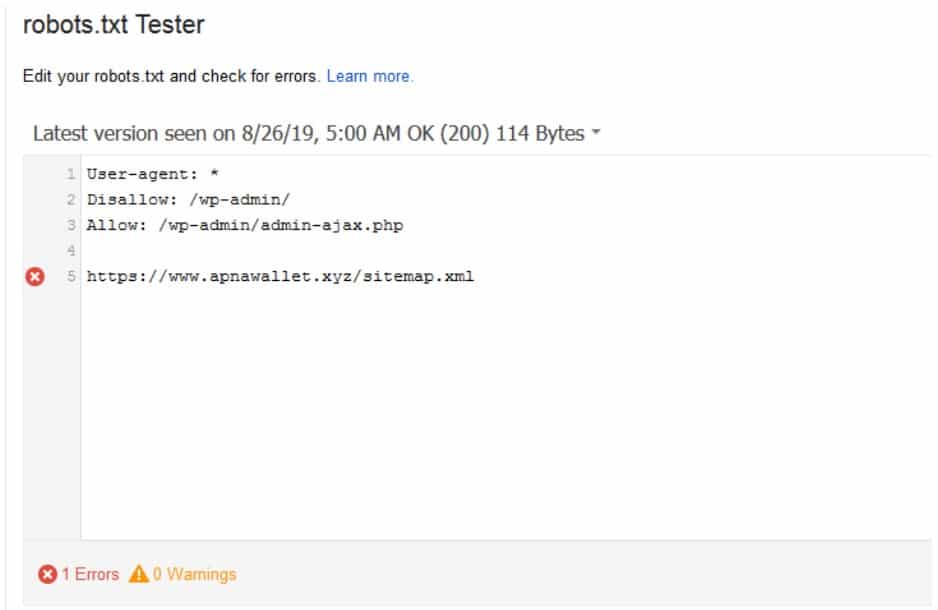

Mistake #31: A Robots.txt File Error

A robots.txt file tells search engine crawlers which parts of your site they may crawl, which in turn affects what ends up indexed.

Something as seemingly insignificant as a misplaced letter in your robots.txt file can do major damage and cause your page to be incorrectly indexed.

A misplaced “disallow” is another thing to be on the lookout for. This will signal Google and other search engines to not crawl the pages covered by that disallow rule, which would keep them from being properly indexed.

You can check the health of your robots.txt file by using the robots.txt report inside Google Search Console.
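
You can also run a quick local check before you publish a change. The sketch below uses Python's built-in `urllib.robotparser` to list which of your important URLs a given robots.txt would block. The `blocked_urls` helper and the sample rules are assumptions for illustration, and Python's parser may not resolve conflicting rules exactly the way Google does, so treat it as a first pass.

```python
from urllib.robotparser import RobotFileParser


def blocked_urls(robots_txt, urls, user_agent="Googlebot"):
    """Return the URLs that the given robots.txt text disallows."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


rules = """User-agent: *
Disallow: /admin/
Disallow: /blog
"""

important_pages = [
    "https://www.example.com/",
    "https://www.example.com/admin/login",
    "https://www.example.com/blog/post-1",
]

# Any blog URL showing up here points to a misplaced disallow.
for url in blocked_urls(rules, important_pages):
    print("Blocked:", url)
```

In this sample, the stray `Disallow: /blog` line blocks the entire blog, which is exactly the kind of mistake described above.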

Duplicate Content and Canonicals

Critical Priority

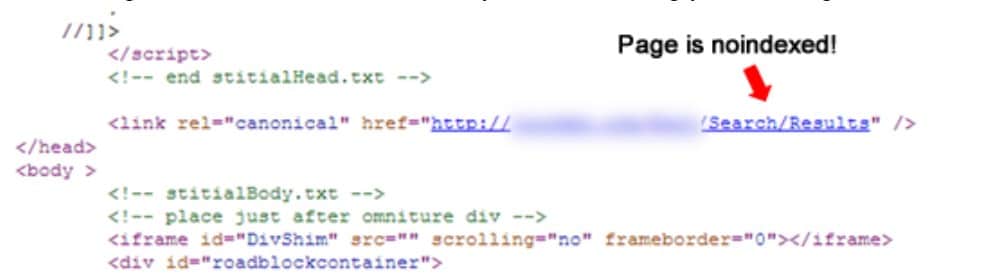

Mistake #32: Duplicate Content

If there’s one thing Google loves, it’s unique content.

That means that duplicate content is a huge problem if you’re trying to rank on the first page of the search results.

Use tools such as Screaming Frog, Deep Crawl, or SEMRush to find out if you have a duplicate content issue. These tools will crawl your site, as well as other sites on the internet, to find out if your content has been re-posted anywhere.

To combat this problem, make sure every page is unique. Every page should have its own URL, title, description, and H1/H2 headings. Your H1 heading should be a visible headline that contains your primary keyword. Be sure to put a direct keyword in every section of your page to capitalize on the strength of your keywords.
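
Once you have a crawl export, spotting repeated titles or H1s takes only a few lines. This sketch assumes a hypothetical `pages` dictionary that maps each URL to its title and H1; the structure and the function name are made up for the example.

```python
from collections import defaultdict


def duplicate_values(pages, field):
    """Group URLs that share the same value for a field such as 'title'."""
    groups = defaultdict(list)
    for url, meta in pages.items():
        # Ignore case and extra whitespace when comparing values.
        key = " ".join(meta.get(field, "").lower().split())
        groups[key].append(url)
    return {value: urls for value, urls in groups.items() if value and len(urls) > 1}


pages = {
    "/shoes": {"title": "Running Shoes | Example", "h1": "Running Shoes"},
    "/shoes?sort=price": {"title": "Running Shoes | Example", "h1": "Running Shoes"},
    "/boots": {"title": "Hiking Boots | Example", "h1": "Hiking Boots"},
}

print(duplicate_values(pages, "title"))
```

Running the same check with `"h1"` covers the heading side of the advice.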

You should even be careful when you reuse images and the alt tags that accompany those images. While these tags should contain keywords, they cannot be identical. Come up with ways to incorporate the keywords while being different enough that your tags don’t get indexed as duplicate content.

Also, look for duplicate content in your structured data or schema. This is often an overlooked website aspect that can negatively impact your ranking. Google has a great Schema Markup tool that will help you make sure that duplicate content is not appearing in your schema.

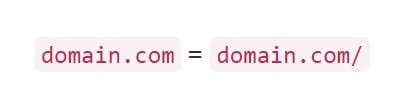

Mistake #33: Multiple Versions of Homepage

We’ve discussed previously that duplicate content presents a problem, but that problem grows even bigger when it’s your homepage that’s duplicated.

You’ll want to ensure you don’t have multiple versions (www and non-www, /index.html versions, etc.) of your homepage.

If you find out that multiple versions of your site are live, add a 301 redirect to the duplicate page to point search engines and users in the direction of the correct homepage.
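
To plan those redirects, it helps to list every homepage variant and map each one to the version you want to keep. The sketch below does that for a list of URLs; the `HOMEPAGE_PATHS` set, the function names, and the example.com addresses are assumptions for illustration. The 301 redirects themselves still need to be configured on your server or CMS.

```python
from urllib.parse import urlparse

# Paths that commonly serve the homepage (an assumption; adjust for your site).
HOMEPAGE_PATHS = {"", "/", "/index.html", "/index.php"}


def bare_host(url):
    """Return the lowercase host without a leading 'www.'."""
    host = urlparse(url).netloc.lower()
    return host[4:] if host.startswith("www.") else host


def redirects_needed(urls, canonical):
    """Map each duplicate homepage URL to the canonical homepage."""
    plan = {}
    for url in urls:
        same_site = bare_host(url) == bare_host(canonical)
        is_home = urlparse(url).path in HOMEPAGE_PATHS
        if same_site and is_home and url != canonical:
            plan[url] = canonical
    return plan
```

Each key in the returned dictionary is a URL that should 301 to the value next to it.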

High Priority

Mistake #34: Rel=canonical Issues

You can use rel=canonical to help consolidate duplicate content, telling the search engines which page should be indexed.

However, if used in the wrong place, it could cause some confusion and lead search engines to not rank your page at all.
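
A quick way to catch the most common slip-ups is to read the canonical tag out of each page and compare it with the URL you expect to be indexed. This is a minimal sketch using Python's standard HTML parser; the class name, function name, and problem labels are assumptions for the example.

```python
from html.parser import HTMLParser


class CanonicalFinder(HTMLParser):
    """Collects the href of every <link rel="canonical"> tag."""

    def __init__(self):
        super().__init__()
        self.canonicals = []

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        rel = (attributes.get("rel") or "").lower().split()
        if tag == "link" and "canonical" in rel:
            self.canonicals.append(attributes.get("href"))


def canonical_problems(html, expected_url):
    """Return a list of issues found with the page's canonical tag."""
    finder = CanonicalFinder()
    finder.feed(html)
    if not finder.canonicals:
        return ["no canonical tag"]
    problems = []
    if len(finder.canonicals) > 1:
        problems.append("multiple canonical tags")
    if any(href != expected_url for href in finder.canonicals):
        problems.append("canonical does not match expected URL")
    return problems
```

Here `expected_url` is the page you want indexed, so for a duplicate page you would pass the URL of the original.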

Medium Priority

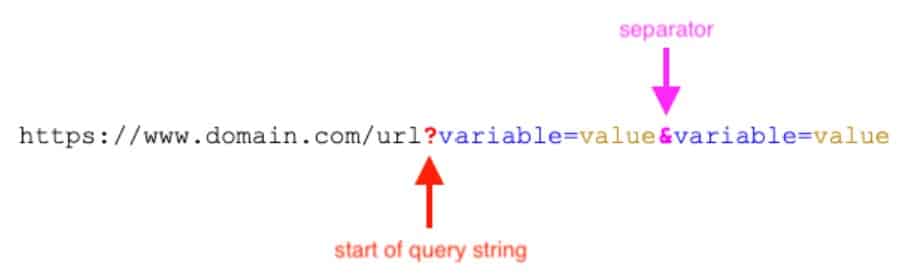

Mistake #35: There are Query Parameters at the End of URLs

Familiar with the overly-long URL?

This often happens when certain filters are added to URLs such as color, size, etc. Most commonly it affects ecommerce sites.

Clean up URLs by minimizing query parameters to avoid duplicate content and wasted crawl budget. Excessive query parameters can create thin or duplicate content, which can hurt your SEO.
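
As a rough illustration, here is how you might reduce a filtered URL to its clean form. The sketch drops every query parameter except the ones you choose to keep. The `KEEP_PARAMS` set and the function name are hypothetical, and which parameters actually matter depends on your site.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical: parameters that change page content and should be kept.
KEEP_PARAMS = {"page"}


def clean_url(url, keep=KEEP_PARAMS):
    """Drop query parameters that are not in the keep set."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key in keep
    ]
    # The fragment is dropped as well, since crawlers ignore it.
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(kept), ""))


print(clean_url("https://www.example.com/shoes?color=red&size=9&page=2"))
```

The cleaned URL is a natural candidate for the canonical tag on every filtered variation of that page.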

Low Priority

Mistake #36: Improper Use of a Trailing Slash

A trailing slash is a forward slash (“/”) placed at the end of a URL, traditionally used to mark a directory on your website.

Previously, folders would have trailing slashes and files would not. But as we know, the world of SEO is always changing.

If your content can be seen on versions with and without trailing slashes, then the pages can be treated like separate URLs.

What does this mean for your SEO?

Duplicate content. In most cases, a canonical tag will specify the preferred version, so you won’t have to worry.

However, if different content is showing on trailing slash and non-trailing slash URLs, you’ll want to pick one version to index and redirect the other version to it.
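
Whichever version you pick, apply it consistently. The sketch below rewrites a URL to follow a single trailing-slash policy, which you could use to build a redirect list or to check internal links. The function name and the `trailing_slash` flag are assumptions for this example; file-like paths such as PDFs are left alone.

```python
from urllib.parse import urlsplit, urlunsplit


def apply_slash_policy(url, trailing_slash=False):
    """Rewrite a URL so its path follows one trailing-slash policy."""
    parts = urlsplit(url)
    path = parts.path or "/"
    last_segment = path.rsplit("/", 1)[-1]
    if path != "/":
        if trailing_slash and "." not in last_segment and not path.endswith("/"):
            # Add the slash to folder-style paths only, not to files.
            path += "/"
        elif not trailing_slash:
            path = path.rstrip("/") or "/"
    return urlunsplit((parts.scheme, parts.netloc, path, parts.query, parts.fragment))
```

Any URL that changes when you run it through this function is one that should redirect to the rewritten version.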

Security

Critical Priority

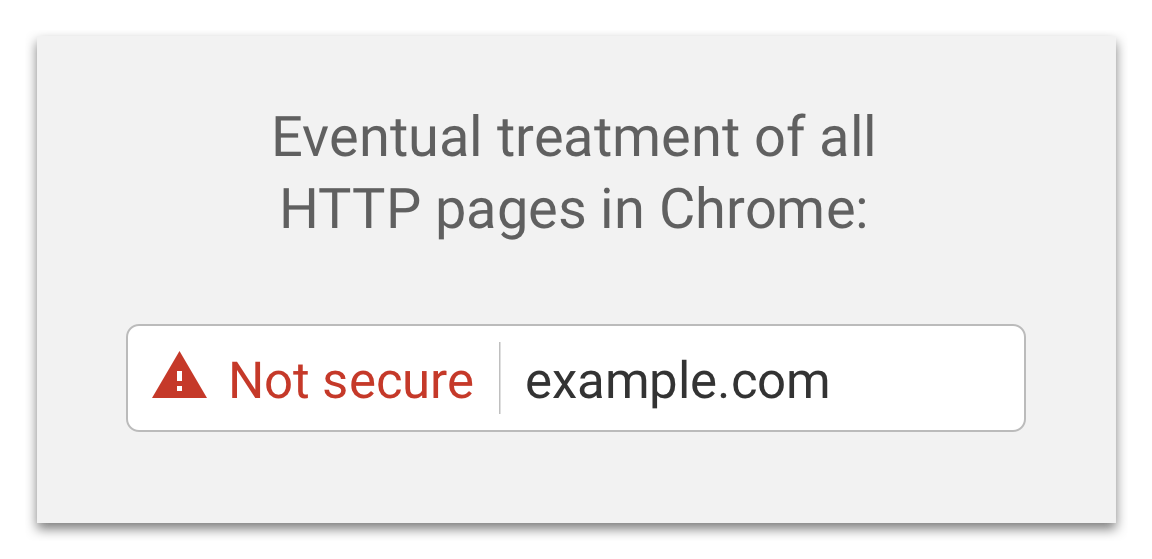

Mistake #37: Still Using HTTP

Since web security is always on everyone’s minds, HTTPS is now the standard for indexed sites.

Websites without HTTPS encryption are flagged as insecure by browsers, and search engines favor secure pages. This can deter users and damage your SEO. Switching to HTTPS protects your website and improves your search ranking potential.

Mistake #38: You’re Not Implementing SSL

Neglecting to implement SSL (Secure Sockets Layer) on your website can lead to dire consequences.

SSL encryption not only safeguards the data transmitted between your website and users but is also a ranking signal for search engines. Websites with SSL certificates are trusted more by visitors and search engines alike.

Without SSL, visitors might be greeted with security warnings, which can erode trust and drive them away. By securing your site with HTTPS, you not only protect sensitive information but also bolster your SEO efforts and instill confidence in your online presence.

Schema Markup

Critical Priority

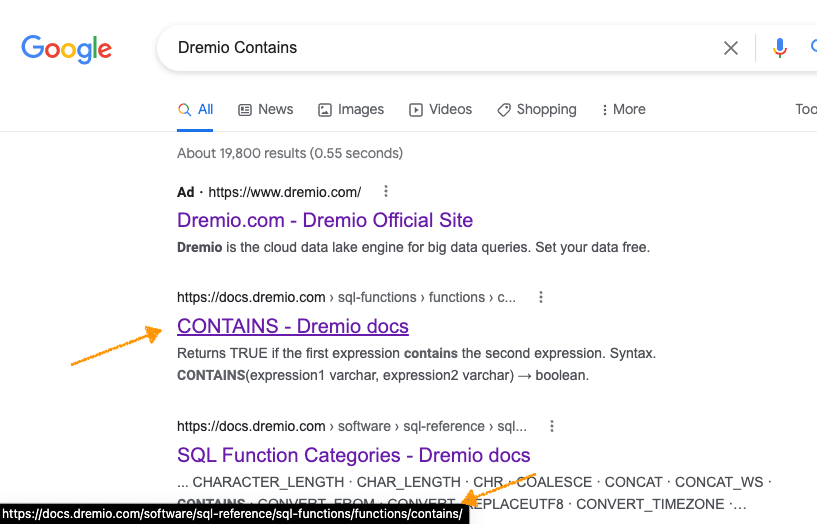

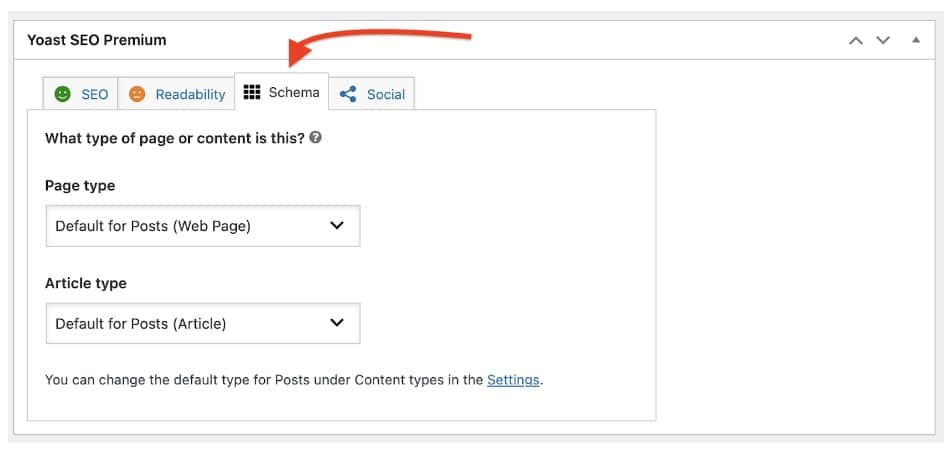

Mistake #39: Ignoring Schema Markup

Schema markup is a way to provide search engines with more information about your content. This can lead to rich snippets in search results, which are more visually appealing and can significantly improve click-through rates.

Ignoring schema markup means missing out on a chance to stand out in the search results, particularly when it comes to product details, reviews, events, and other structured content.

You can also insert structured data through the JSON-LD format. Both Google and Bing support JSON-LD because it’s seen as an easier way to organize and collect data using schema markup. It improves user experience, while also helping to protect sites from AI-driven search results.

To see if your schema or JSON-LD is working correctly, use the Google Rich Results Test. This will show you whether your structured data is doing what it’s supposed to or if there are issues for you to troubleshoot.
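
To make this concrete, here is a rough sketch of how product markup could be generated as JSON-LD. The `Product`, `Offer`, and `AggregateRating` types come from schema.org, while the function names and the sample product details are invented for illustration. Run whatever you generate through the Rich Results Test before relying on it.

```python
import json


def product_jsonld(name, description, price, currency, rating, review_count):
    """Build a schema.org Product object as a Python dictionary."""
    return {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "description": description,
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",
            "priceCurrency": currency,
        },
        "aggregateRating": {
            "@type": "AggregateRating",
            "ratingValue": rating,
            "reviewCount": review_count,
        },
    }


def as_script_tag(data):
    """Wrap the structured data in the script tag that goes in the page HTML."""
    return '<script type="application/ld+json">' + json.dumps(data, indent=2) + "</script>"


markup = product_jsonld("Trail Runner", "Lightweight running shoe", 89.5, "USD", 4.6, 132)
print(as_script_tag(markup))
```

The printed script tag is what you would place in the page’s HTML, usually in the head.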

High Priority

Mistake #40: You’re Not Using Local Search and Structured Data Markup

Local searches drive a lot of search engine queries, and Google certainly recognizes that.

This is why a presence on search data providers like Yelp, Facebook, etc. is essential. Make sure your contact information is consistent on all pages.
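
Checking that consistency by hand gets tedious once you have more than a few listings. The sketch below compares the name, address, and phone number across sources after stripping out formatting differences. The `listings` structure and the sample business are made up, and the phone comparison assumes 10-digit numbers, so adjust it for other regions.

```python
import re


def normalize_listing(listing):
    """Reduce a listing to a comparable form, ignoring formatting."""
    return {
        "name": " ".join(listing["name"].lower().split()),
        "address": " ".join(re.sub(r"[.,]", "", listing["address"].lower()).split()),
        # Keep the last 10 digits (assumes 10-digit phone numbers).
        "phone": re.sub(r"\D", "", listing["phone"])[-10:],
    }


def inconsistent_fields(listings):
    """Return the fields whose values differ between sources."""
    normalized = [normalize_listing(listing) for listing in listings.values()]
    fields = ["name", "address", "phone"]
    return [field for field in fields if len({item[field] for item in normalized}) > 1]


listings = {
    "website": {"name": "Acme Plumbing", "address": "12 Main St., Springfield", "phone": "(555) 010-2000"},
    "yelp": {"name": "Acme Plumbing", "address": "12 Main St, Springfield", "phone": "+1 555-010-2000"},
    "facebook": {"name": "ACME Plumbing", "address": "12 Main St Springfield", "phone": "555.010.2999"},
}

print(inconsistent_fields(listings))
```

In this sample, only the phone number on the Facebook listing is out of step with the others.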

Monitoring and Maintaining Technical SEO

Now that you know all that technical SEO entails, what can you do to make sure your technical SEO elements stay as up to date as possible?

- Monitor the technical aspects of your website. With regular audits and performance tracking, you can catch and correct small issues before they snowball into major problems.

- Analyze the data. These numbers will give you unmatched insight into what you can do to improve your site, both for the algorithms and your users.

- Stay up to date on algorithm changes. Whenever something changes in the algorithm, adjust your content accordingly. (Hint: bookmark this page to stay up to date on all of Google’s Core Updates!)

- Conduct technical SEO site audits consistently. Perform an audit either every quarter or whenever you make changes to your website that could affect technical performance.

- Use reliable tools for auditing and reporting. Implement solutions like Google Search Console for site monitoring, crawl reports with actionable insights, and PageSpeed Insights to measure page loading speed and Core Web Vitals.

- Track the right KPIs over time. Based on your unique SEO goals, measure and monitor for crawl errors, coverage, Core Web Vitals, mobile usability, and indexation stats that could indicate performance.

- Document fixes and track impact. With the help of a centralized spreadsheet or another document, you can track all fixes and determine their impact with regular monitoring, which will help with ongoing maintenance.

Frequently Asked Questions About Technical SEO

1. What is a technical SEO audit?

A technical SEO site audit assesses how a website performs on the technical elements that affect SEO, such as page loading speed, mobile-responsiveness, and how easily search engines can crawl and index it.

2. How often should you do a technical SEO audit?

Generally, it’s best to conduct technical SEO site audits quarterly or after making any significant technical change to your website. Checking for an impact on rankings and indexability can help proactively identify and address any issues with core technical SEO factors.

3. What tools should I use for a technical SEO audit?

There are numerous tools you can use for technical SEO auditing on a website, including Google Search Console for consistent monitoring for technical issues, PageSpeed Insights to detect slow page loading speeds, and crawl reports detailing problems and specific fixes.

4. What are the most common technical SEO issues?

Some of the most common technical SEO issues to watch for include:

- Poor crawlability and indexability due to broken links, robots.txt issues, and server errors

- Slow page loading speed and other poor Core Web Vitals metrics

- Duplicate content and canonicalization issues

- Improper internal linking and site structure

- No mobile optimization

- Missing or incorrect structured data

5. How do technical SEO issues affect rankings?

Various issues detected during a technical website audit can negatively impact your rankings in different types of search results. For instance, slow page loading speeds could lead to higher bounce rates from visitors, signaling to Google that your pages are of poor quality. Missing sitemaps and other technical issues can also hurt crawlability and indexing.

Ignite Visibility’s Ready to Conduct a Technical SEO Audit!

Struggling with website ranking or traffic? Ignite Visibility can help!

We offer a technical SEO audit and an ongoing technical SEO service to ensure your site is optimized for search engines. Click here to see how our experts helped one client properly index all of their pages, leading to a 130% increase in pages indexed in just 4 months!

After all, a healthy website needs regular checkups. To ensure you get the organic traffic you deserve, our technical SEO experts will identify and fix issues that could be holding you back.

Ready to see what your website can achieve? Get a free technical SEO audit today!