Rendering the site does take a few minutes, but as Google promised, it makes diagnosing errors on the site much simpler. It also gives webmasters a very clear idea of the kind of crawling to expect as Google updates its robots to see what can and, more importantly, cannot be seen from a user's perspective. Google recommends “making sure Googlebot can access any embedded resource that meaningfully contributes to your site’s visible content, or to its layout.”
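One rough way to act on that recommendation is to check your robots.txt rules against the CSS, JavaScript and image URLs your pages load. The snippet below is only a minimal sketch using Python's standard `urllib.robotparser`; the helper name `blocked_resources`, the sample rules and the example.com URLs are all made up for illustration. Keep in mind that Python's parser does not interpret rules exactly the way Googlebot does (for instance, it has no wildcard support), so treat the result as a first pass rather than a substitute for the rendering tool.

```python
from urllib.robotparser import RobotFileParser


def blocked_resources(robots_txt, resource_urls, user_agent="Googlebot"):
    """Return the URLs that the given robots.txt text disallows for user_agent.

    Hypothetical helper for illustration; not part of any Google tool.
    """
    parser = RobotFileParser()
    # Parse the rules from a string so the check works without a network call.
    parser.parse(robots_txt.splitlines())
    return [url for url in resource_urls if not parser.can_fetch(user_agent, url)]


# Made-up robots.txt that hides a script directory from every crawler.
SAMPLE_ROBOTS = """\
User-agent: *
Disallow: /assets/js/
Disallow: /admin/
"""

# Made-up embedded resources that a page might load.
SAMPLE_RESOURCES = [
    "https://example.com/assets/css/site.css",
    "https://example.com/assets/js/app.js",
    "https://example.com/images/logo.png",
]

if __name__ == "__main__":
    for url in blocked_resources(SAMPLE_ROBOTS, SAMPLE_RESOURCES):
        print("Blocked for Googlebot:", url)
```

Anything this flags is worth a second look in the rendering tool itself, since a blocked script or stylesheet is exactly the sort of thing that changes what Googlebot sees.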

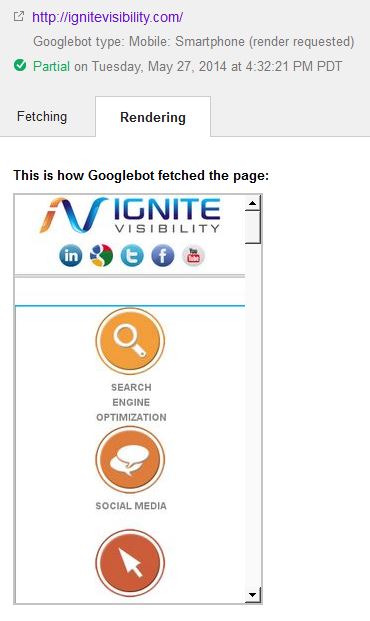

To the right, you can see what a mobile capture looks like. It will be interesting to see at what point down the line (if at all) sites that perform poorly in these areas get a new and different kind of penalty.