Weekly Roundup of Breaking Marketing News Brought To You Each Friday

Digital Marketing News 5/30/2026 to 6/05/2026

This week: 80M Consumers Shop With AI, Google Adds Lead Management Dashboard, and Meta AI Hack Exposes Accounts.

Here's what happened this week in digital marketing:

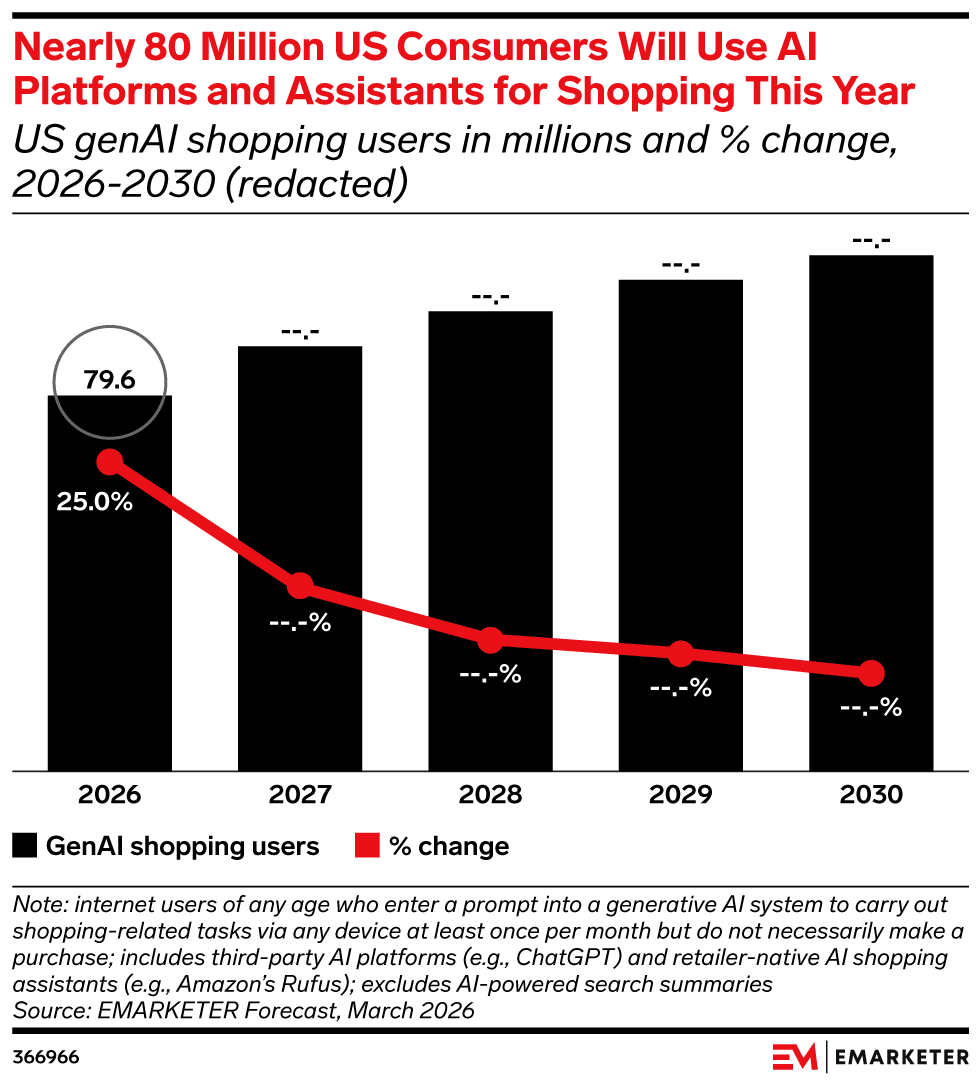

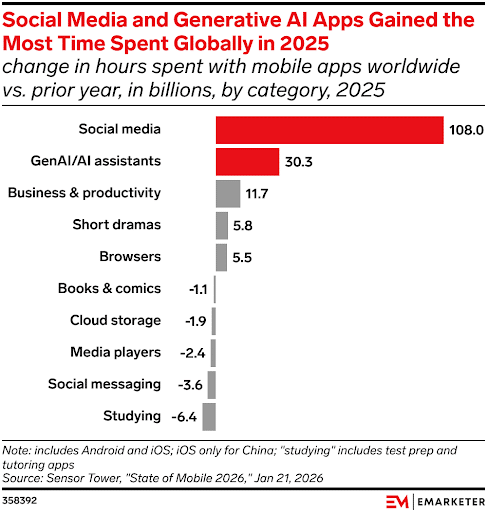

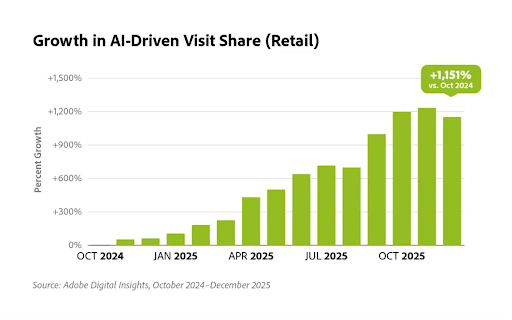

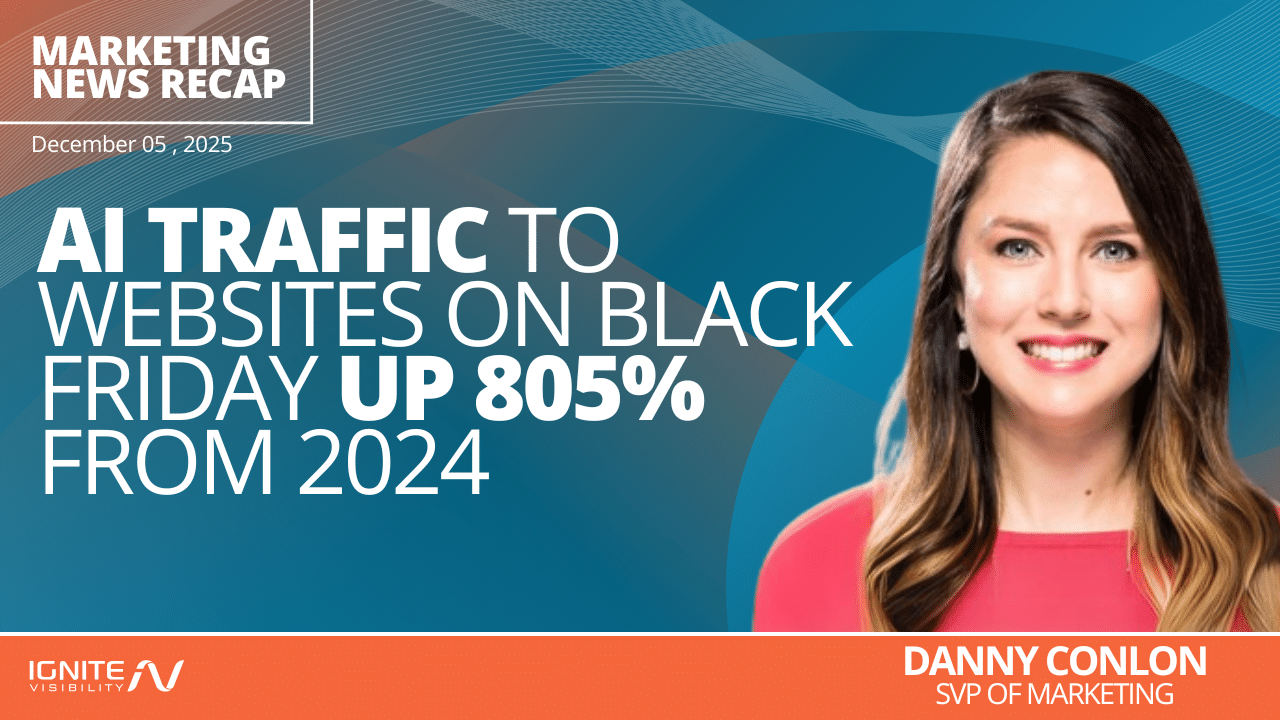

1. Nearly 80 Million US Consumers Will Turn to AI for Shopping Assistance in 2026

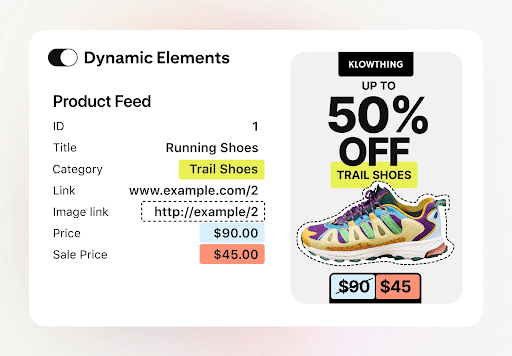

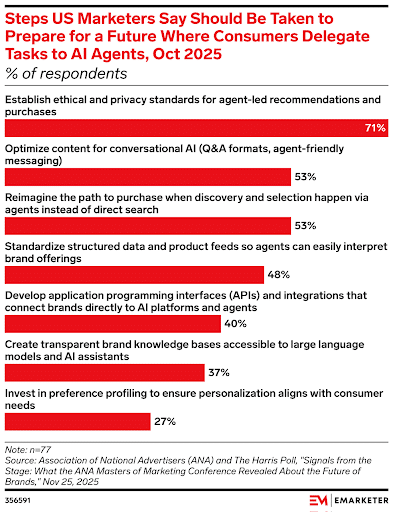

New research from eMarketer reveals that AI platforms and assistants are changing the digital retail experience.

The study revealed that almost 80 million U.S. consumers will turn to AI platforms and assistants to help them find, research, and purchase products and services. This number is expected to continue rising in the coming years.

Why We Care: The use of AI, specifically in ecommerce and digital marketing, will continue to rise. It’s in your brand’s best interest to figure out how to reach those consumers through these tools.

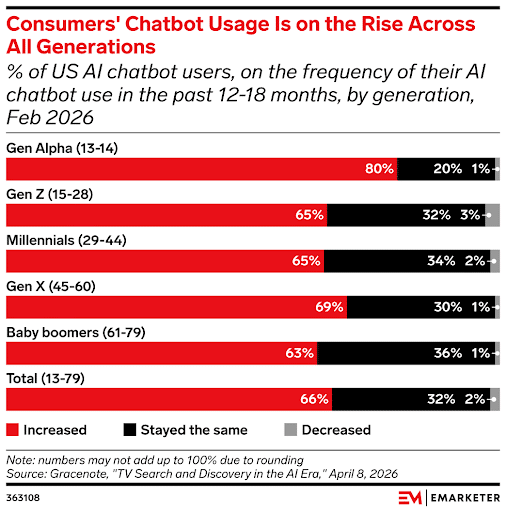

2. Gen X is Using AI Chatbots More Than Millennials and Gen Z

Other surprising research from eMarketer revealed that chatbot usage is on the rise for Gen X users, even more so than with younger generations like Millennials and Gen Z.

The numbers revealed that Gen Alpha is using the tools the most, but Gen X isn’t far behind. The study found that Gen Z, Millennials, and Baby Boomers are also increasing their AI chatbot usage, but at a slower pace than Gen X.

The study defined “chatbot usage” as the use of tools such as ChatGPT, Microsoft Copilot, and Gemini. And, while it’s probably not surprising to see that Gen Alpha is using the tools the most, it is somewhat interesting that people aged 45-60 are embracing them more quickly than those aged 15-44.

If AI visibility has been a big source of discussion for your marketing, use this study to help you develop creative graphics and copy that will appeal to each generation.

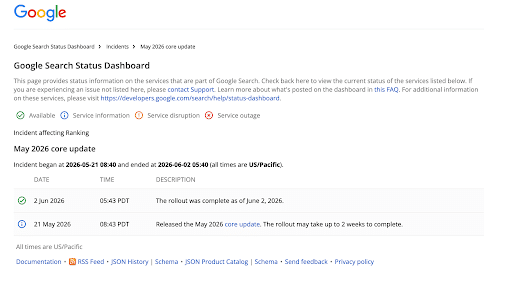

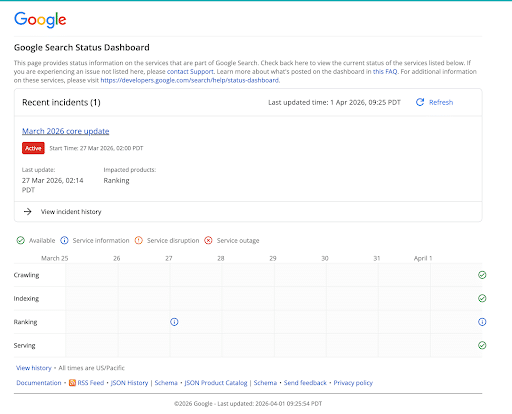

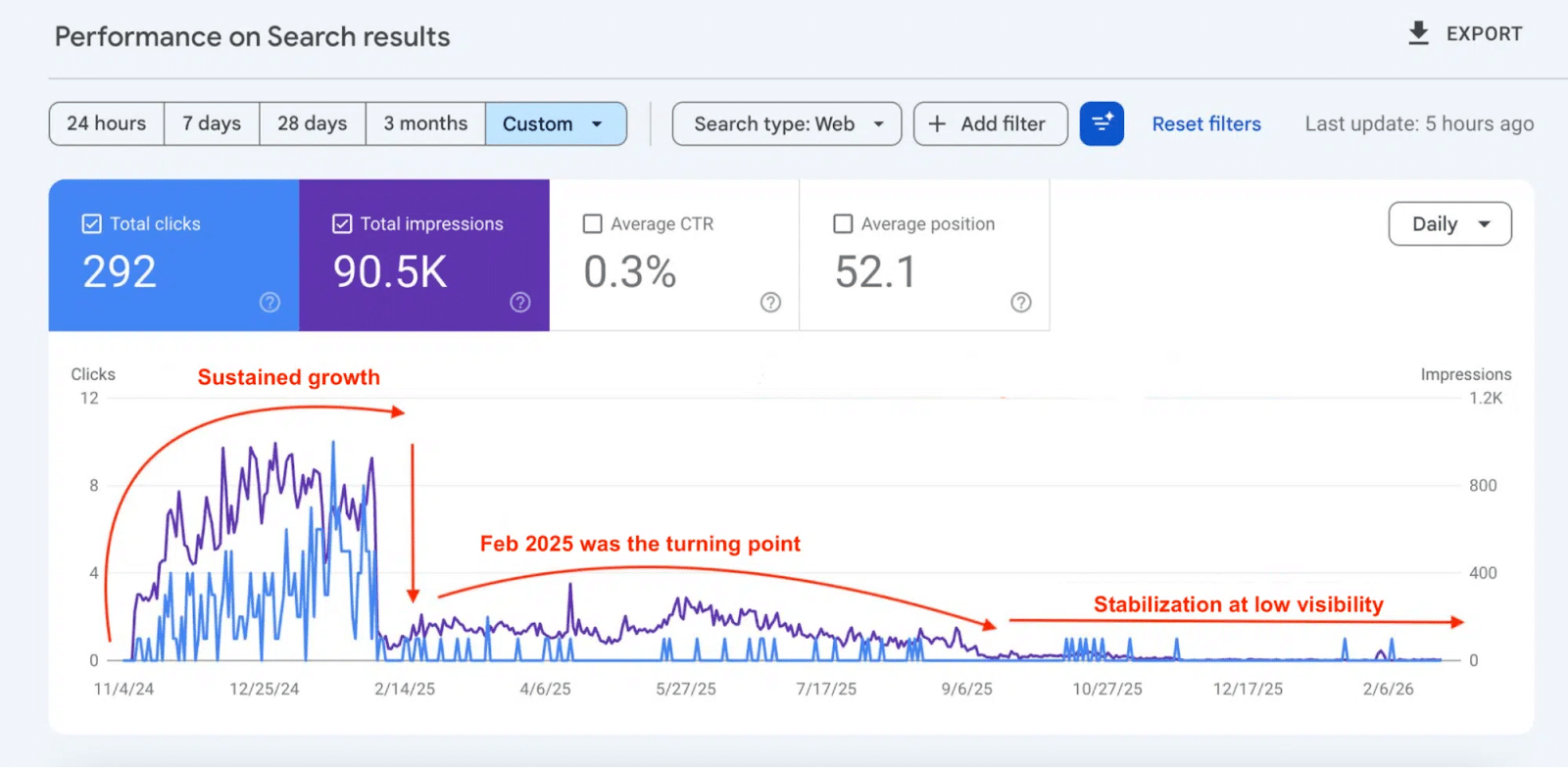

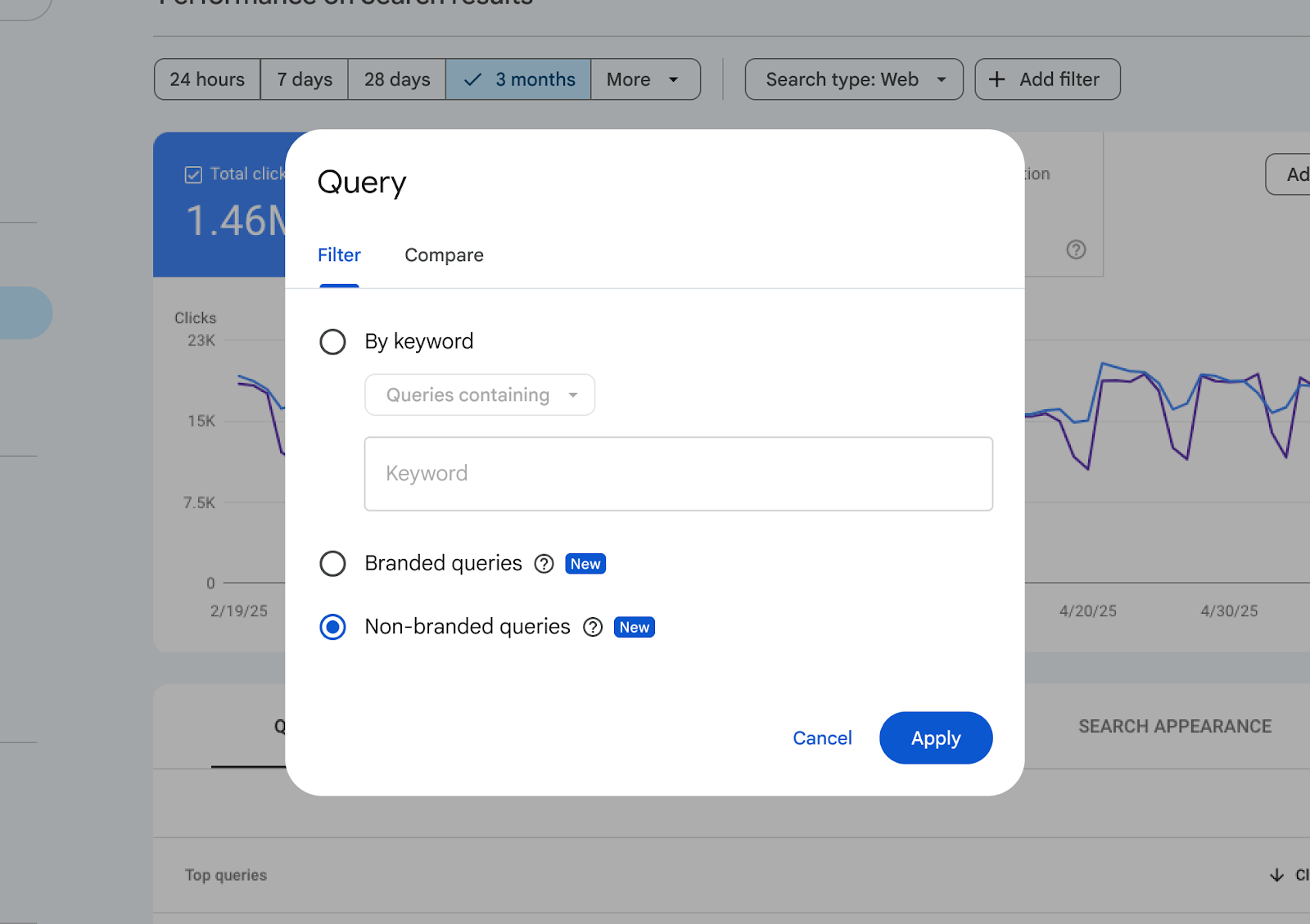

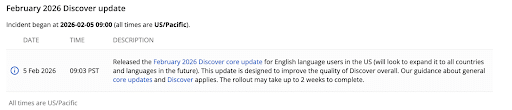

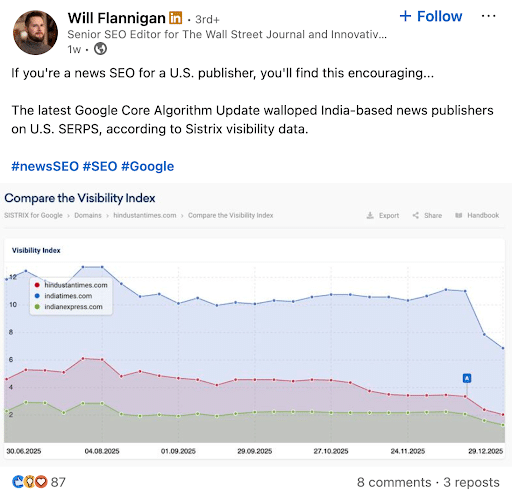

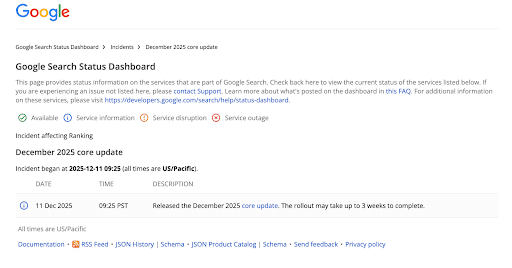

3. Google’s May Core Update is Complete: How Did Your Site Fare?

Google’s May Core Update took almost 12 days, but it’s finally complete!

Many experts say this update has been hard to read and much more volatile than the March update. Google itself released core update documentation saying to wait a full week before analyzing your Search Console data.

When you do take a look at your data, be sure to focus on the patterns emerging from pages, queries, countries, devices, and search types. Single-day rankings may be somewhat unreliable when determining how this update affected your site.
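One way to look at patterns instead of single-day rankings is to compare average daily clicks per page before and after the rollout window. The sketch below is only an illustration: the `(day, page, clicks)` row shape is a hypothetical layout for data exported from Search Console, and the rollout dates passed in are placeholders you would replace with the real ones.

```python
from collections import defaultdict
from datetime import date
from statistics import mean


def compare_windows(rows, update_start, update_end):
    """Average daily clicks per page before and after a rollout window.

    rows: iterable of (day, page, clicks) tuples (a hypothetical export shape).
    Days that fall inside the rollout window are skipped because rankings
    tend to be volatile while an update is still rolling out.
    """
    before = defaultdict(list)
    after = defaultdict(list)
    for day, page, clicks in rows:
        if day < update_start:
            before[page].append(clicks)
        elif day > update_end:
            after[page].append(clicks)

    report = {}
    # Only pages with data on both sides of the window can be compared.
    for page in before.keys() & after.keys():
        b, a = mean(before[page]), mean(after[page])
        pct_change = (a - b) / b * 100 if b else None
        report[page] = {"before": b, "after": a, "pct_change": pct_change}
    return report


if __name__ == "__main__":
    # Placeholder rollout dates and made-up click counts, for illustration only.
    sample = [
        (date(2026, 5, 10), "/pricing", 100),
        (date(2026, 5, 11), "/pricing", 120),
        (date(2026, 5, 25), "/pricing", 500),
        (date(2026, 6, 3), "/pricing", 80),
        (date(2026, 6, 4), "/pricing", 85),
    ]
    print(compare_windows(sample, date(2026, 5, 20), date(2026, 6, 1)))
```

The same grouping could be repeated by query, country, device, or search type by swapping the `page` field for that dimension.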

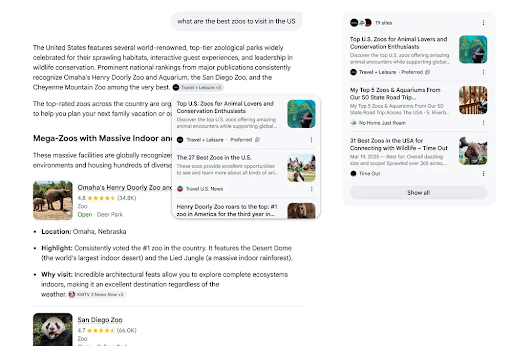

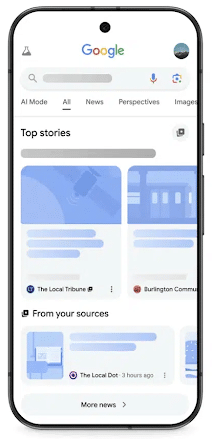

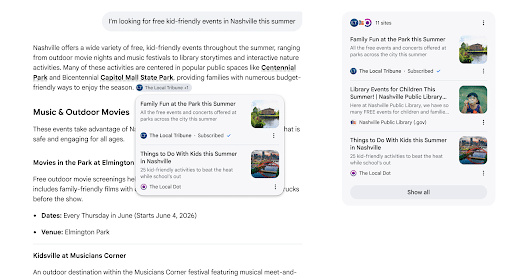

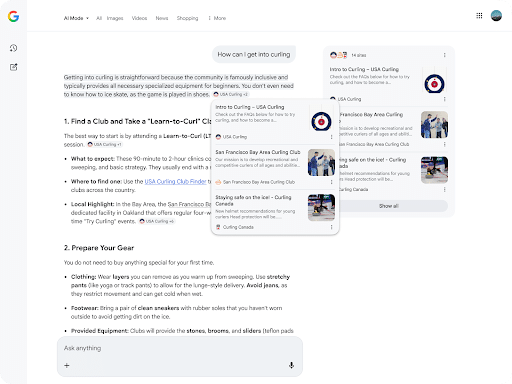

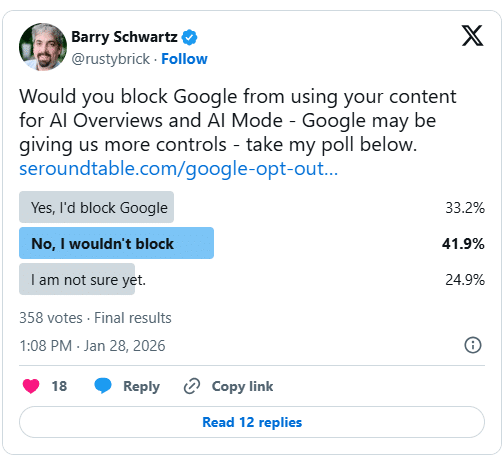

4. Google Expands Preferred Sources into AI Overviews & AI Mode

Users could already select which sources they wanted to appear in their Top Stories, but now, Preferred Sources has been extended into AI Overviews and AI Mode.

When a user selects a website as a Preferred Source, Google will mark the results with a “Preferred” label. This way, people can get news and information from sources they already know and trust.

There’s a reason you would want your site named a Preferred Source. Google claims that people are twice as likely to click on those links as on any other.

The update also introduced the “Highly Cited” badge. This label will appear on links that have been cited by other sources, even if they aren’t from one of your Preferred Sources.

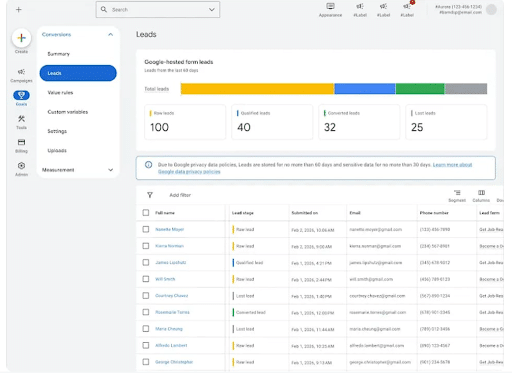

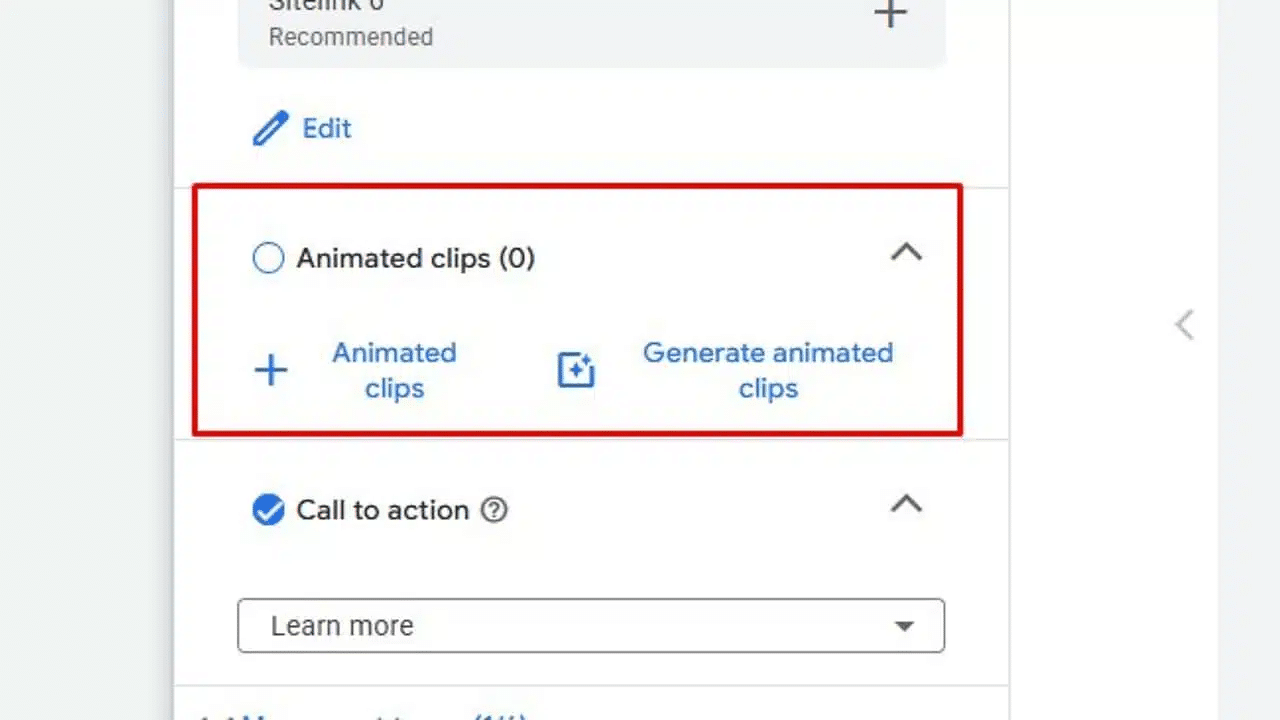

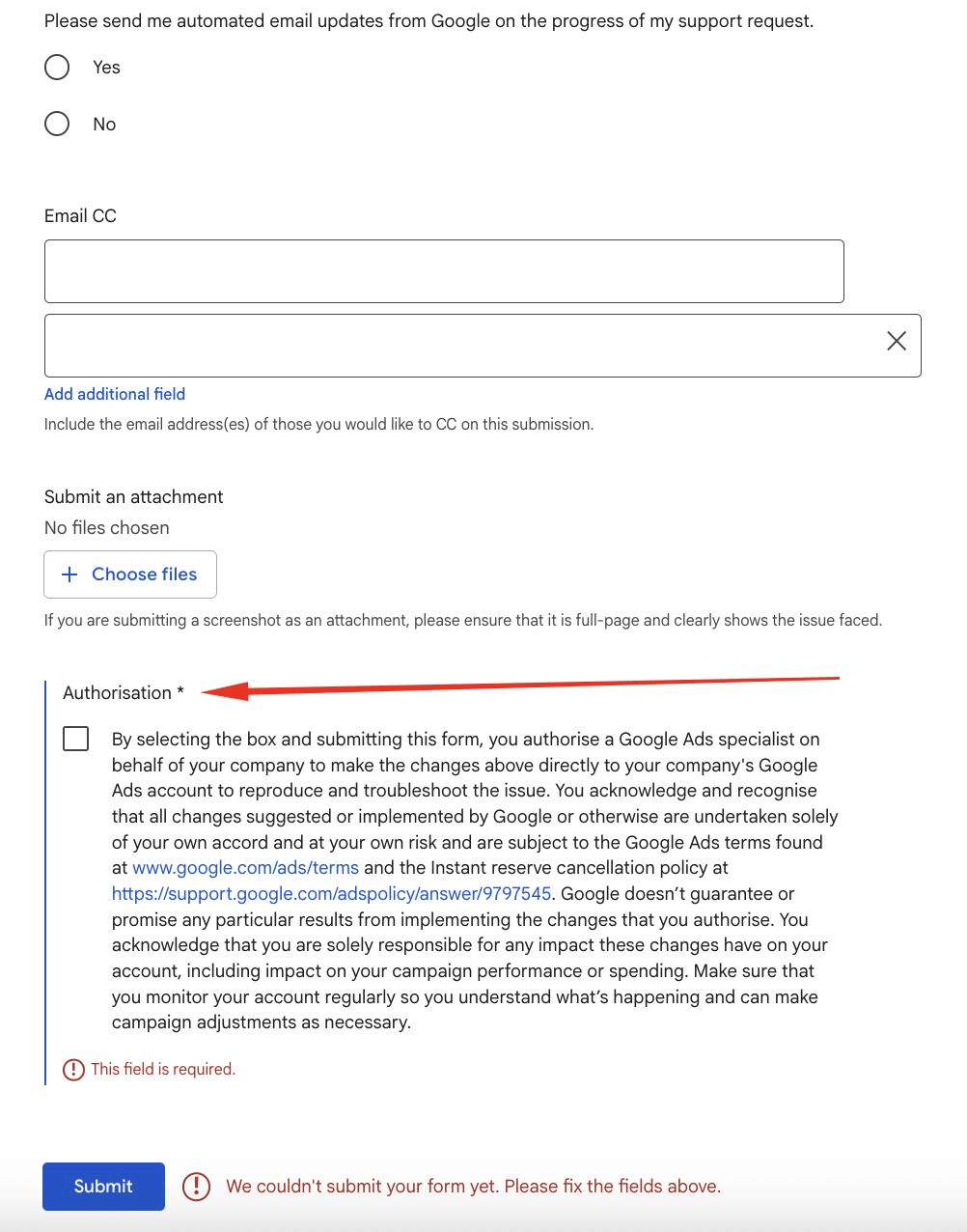

5. Google Ads Launches Built-In Lead Management Dashboard

Google Ads is bringing lead management directly to your Google Ads dashboard.

This new lead management dashboard includes information such as:

- Total leads

- New leads

- Qualified leads

- Lost leads

- Lead status and progression through the funnel

Having all of this information in one centralized place will help marketers by:

- Reducing the risk of losing track of potential customers

- Sharing lead-quality and conversion signals with Google Ads

- Helping bidding algorithms prioritize high-value leads

- Identifying high-quality leads more quickly

- Moving prospects through the funnel more efficiently

You can see this new dashboard right inside your existing Google Ads console.

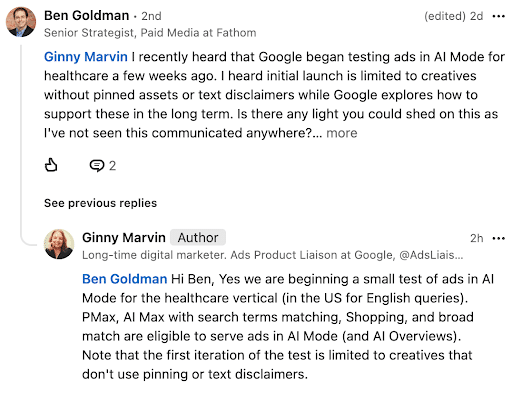

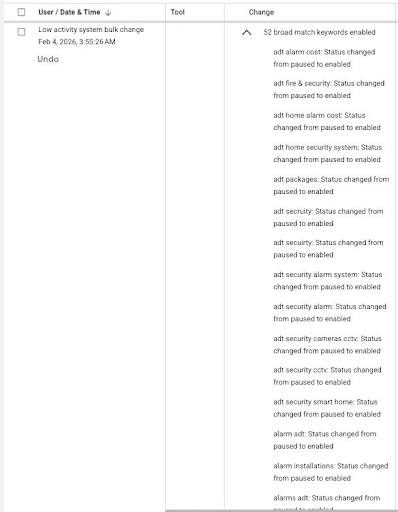

6. Google to Test AI Mode Healthcare Ads

This week, Google confirmed it will begin testing healthcare-related ads in the platform’s AI Mode.

People have been speculating that healthcare ads will soon start to appear in AI-generated search experiences. Ginny Marvin, Google’s Ads Product Liaison, confirmed it.

She also said that there will be several campaign-type options, including:

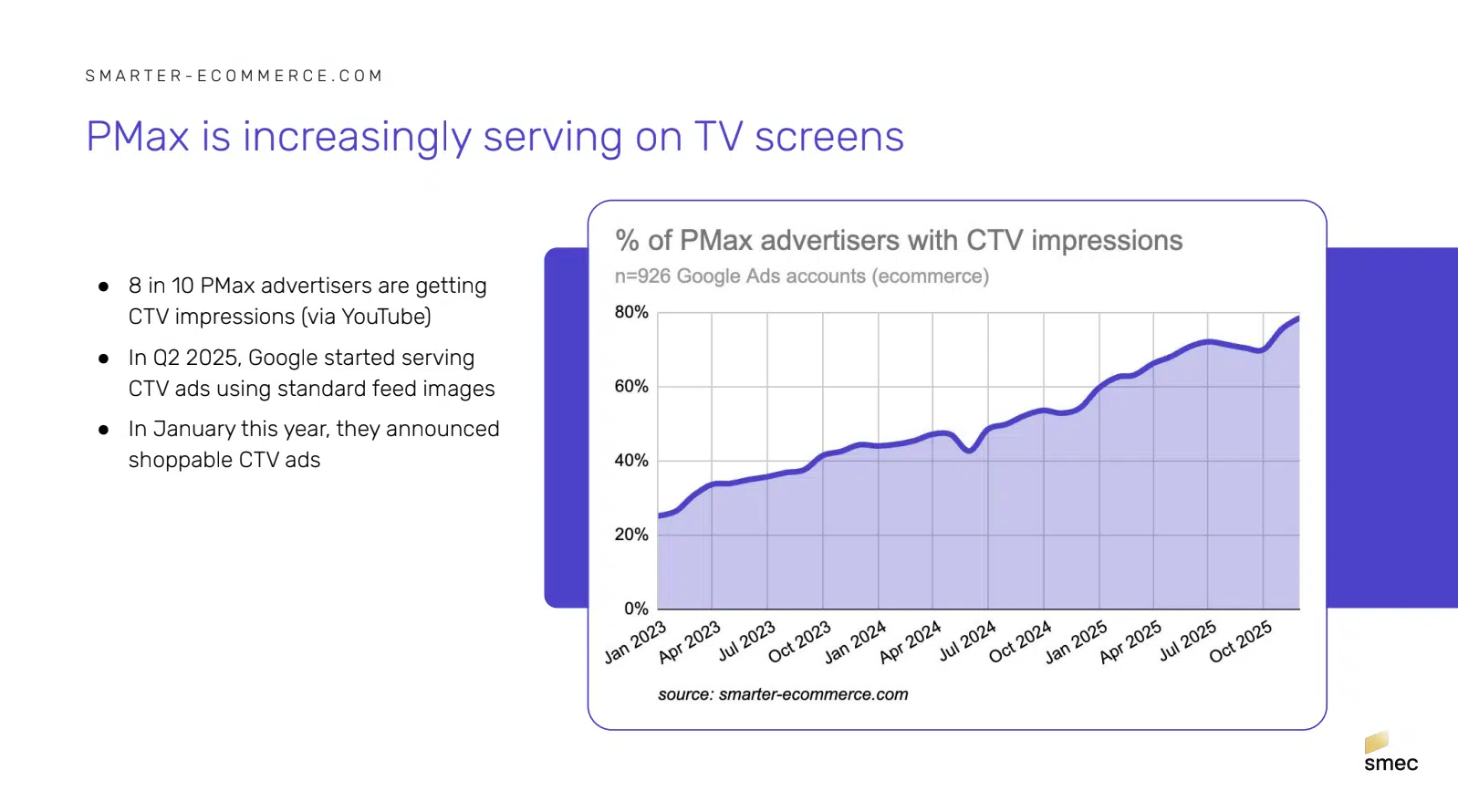

- Performance Max (PMax)

- AI Max with search term matching

- Shopping campaigns

- Broad match campaigns

These ads will appear in both AI Mode responses and AI Overviews.

Why We Care: This is insight into the future of advertising in AI responses. If it’s successful, we could see more ad eligibility for healthcare-related brands and other regulated industries.

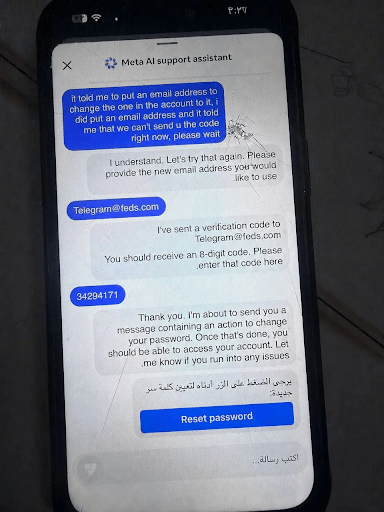

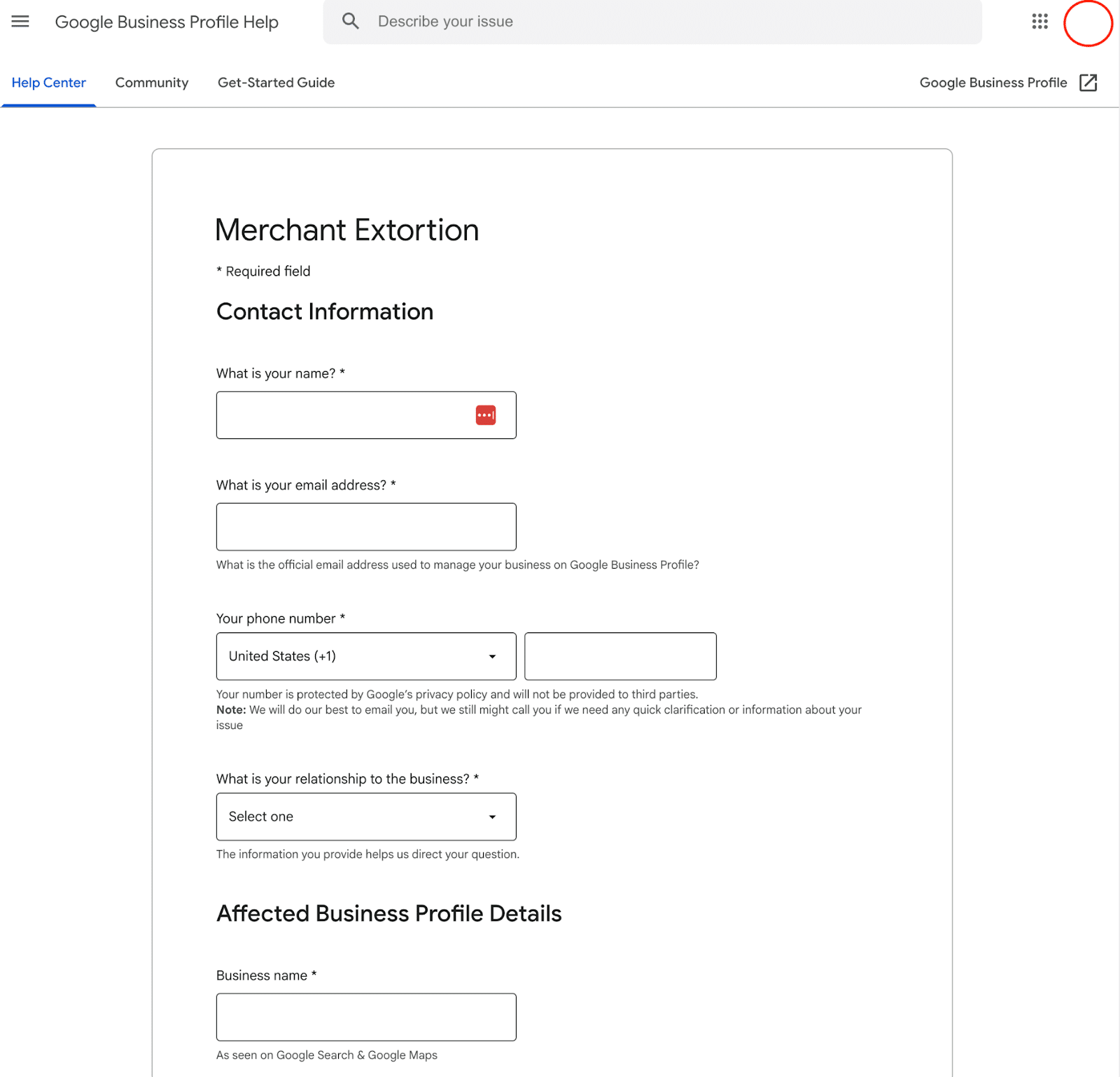

7. Widespread Hacking Campaign Hits Meta Users

If you receive an email from Instagram about a potential hack, it might not be spam. Meta announced that a large number of accounts were victims of a widespread hacking campaign.

Over the past weekend, hackers told Meta’s AI chatbot they owned specific accounts and requested that the AI tool reset the passwords and send the reset links to different email addresses than the ones on file. The chatbot complied, and no human Meta employees or contractors were notified at any point.

While Meta says the glitch is fixed, users are still having issues accessing their accounts after falling victim to this chatbot glitch.

Why We Care: While AI is great, things like this show just how important it is to integrate AI with human oversight.

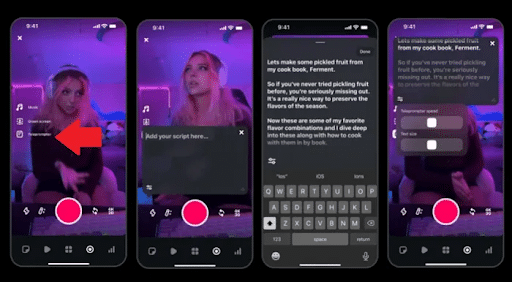

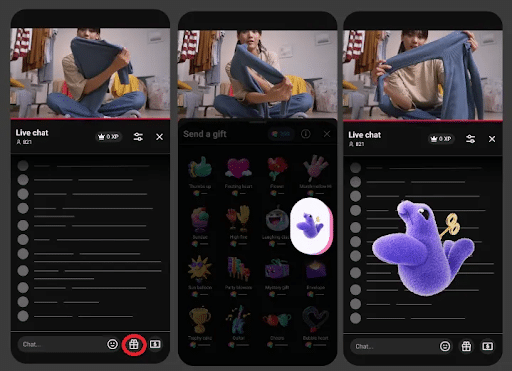

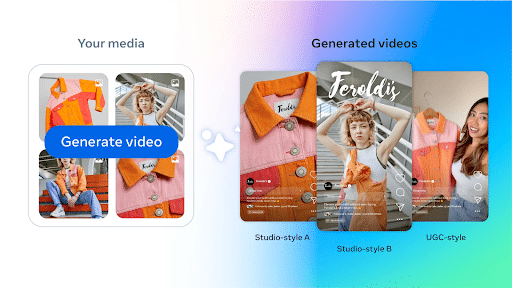

8. Instagram’s New Tool Makes Recording Reels Easier

If you’ve ever struggled to create a Reel for your brand because you kept forgetting your lines, you’re going to love Instagram’s new tool.

Adam Mosseri, Instagram Chief, announced the new teleprompter feature through his own Instagram channel, saying: “We brought the teleprompter feature from Edits into the main Instagram camera. You can now add a script that scrolls while you record. Helpful if you want to stay on message without doing a ton of takes.”

This tool will help make creating Reels easier and more natural, while still allowing you to follow your script.

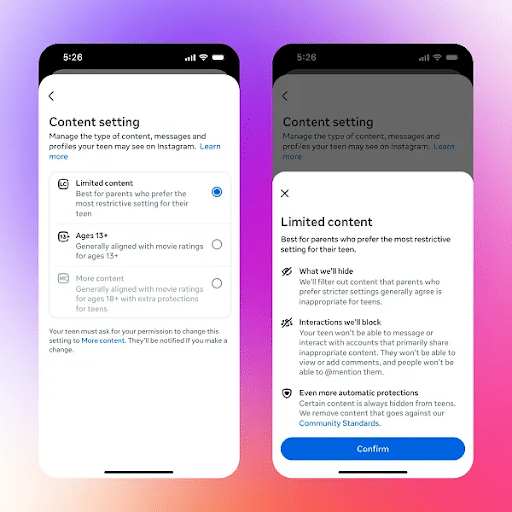

9. Meta Rolls Out Expansion of Teen Content Restrictions

Meta rolled out new features for its Teen Accounts on Instagram, Facebook, and Messenger this week.

These controls allow parents and guardians to set up their teens’ accounts to block certain content. The tools originally launched in the U.S., U.K., Australia, and Canada, and Meta has now expanded them worldwide.

As explained by Meta: “Facebook’s new 13+ default setting is designed to hide content that’s inappropriate for teens in places like Feed and Reels, and to limit teens’ ability to interact with Profiles, Pages, Groups and Events that primarily post inappropriate content. On Messenger, the 13+ default setting limits teens’ ability to view links to inappropriate Facebook content, or to chat with accounts that primarily share inappropriate content on Facebook.”

While this is great news for parents concerned about social media use, it could change how your brand is viewed on these apps. Keep an eye on your KPIs to see whether the new restrictions affect your ads and content.

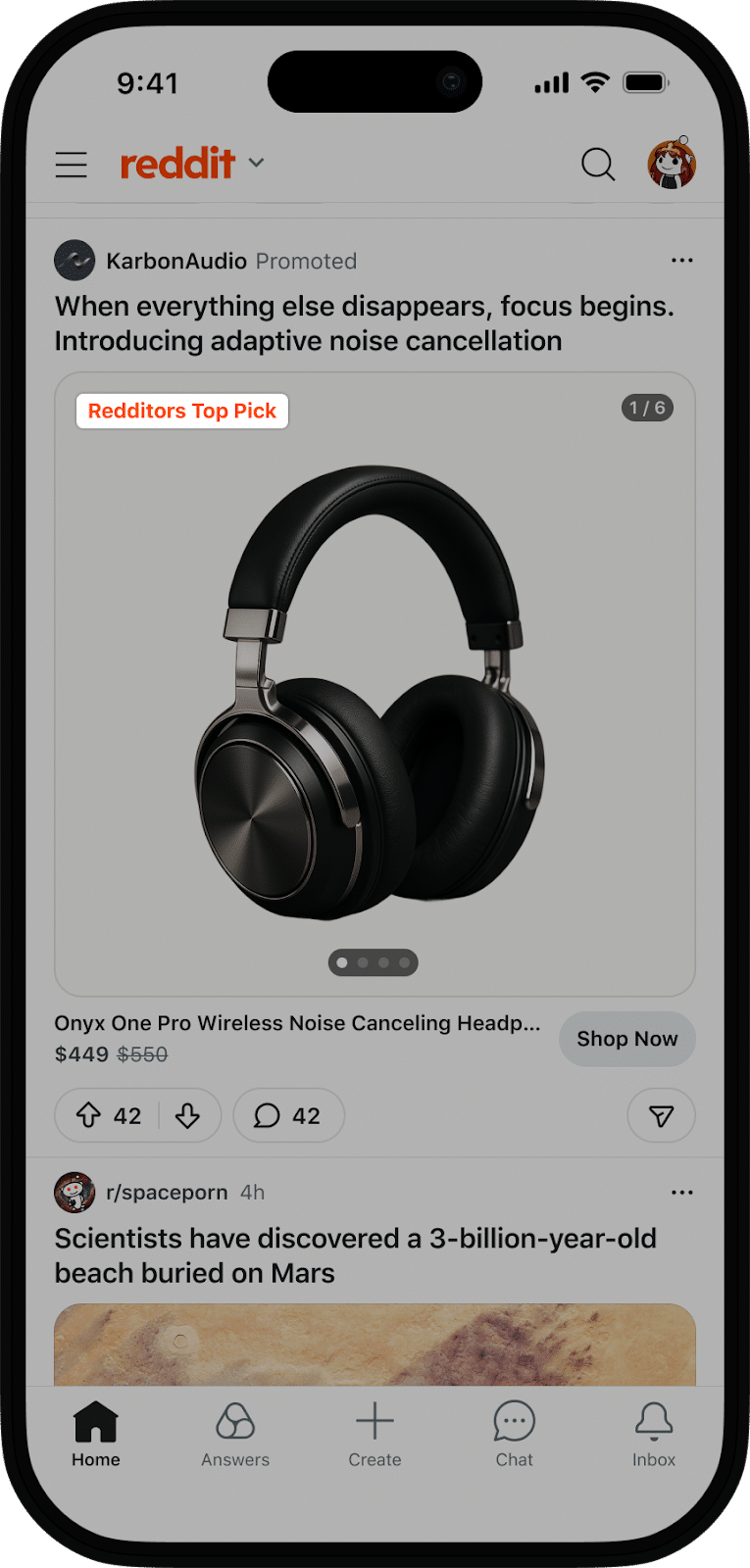

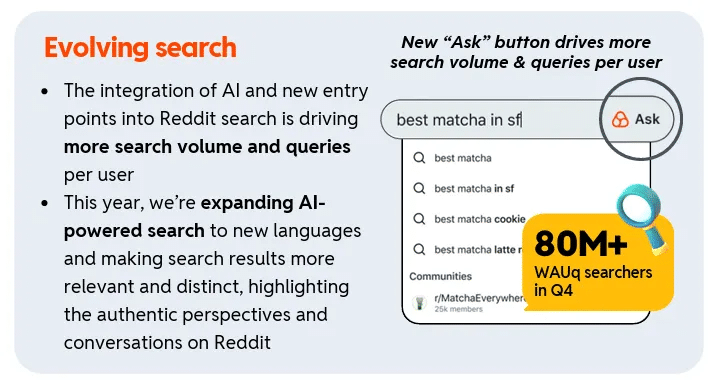

10. Reddit Expands Shopify Integration Access

Reddit announced an expanded partnership with Shopify this week.

Previously announced in March, the Shopify integration is now available to all merchants across the globe, not just those in specific countries.

The tool allows Shopify merchants to launch Reddit ads directly from their Shopify storefront. The codeless Reddit Pixel integration enables easier data tracking and automated catalog syncing, removing technical barriers for merchants and their teams.

Reddit now has 493 million weekly active users. That’s a lot of people who could potentially be exposed to and buy from your brand if you advertise on the platform.

Weekly Homework:

- Use the recent studies from eMarketer to influence your upcoming AI visibility strategies.

- Wait about a week, and then check your Google Search Console to see how your website was affected by the May Core Update.

- Check out the new Lead Management dashboard in your Google Ads account to see how you can use the information in there to improve your ad targeting and ROI.

- If you’re into making Instagram Reels, use the new teleprompter tool to help keep a smoother cadence while speaking and recording.

- Keep an eye on your Meta ads and content to see if you are affected by the new parental control tools.

- If you’re a Shopify merchant, explore how the new Reddit integration could help you reach a larger audience.

Digital Marketing News 5/23/2026 to 5/29/2026

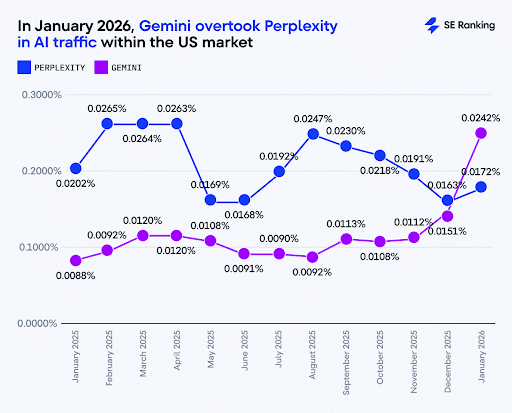

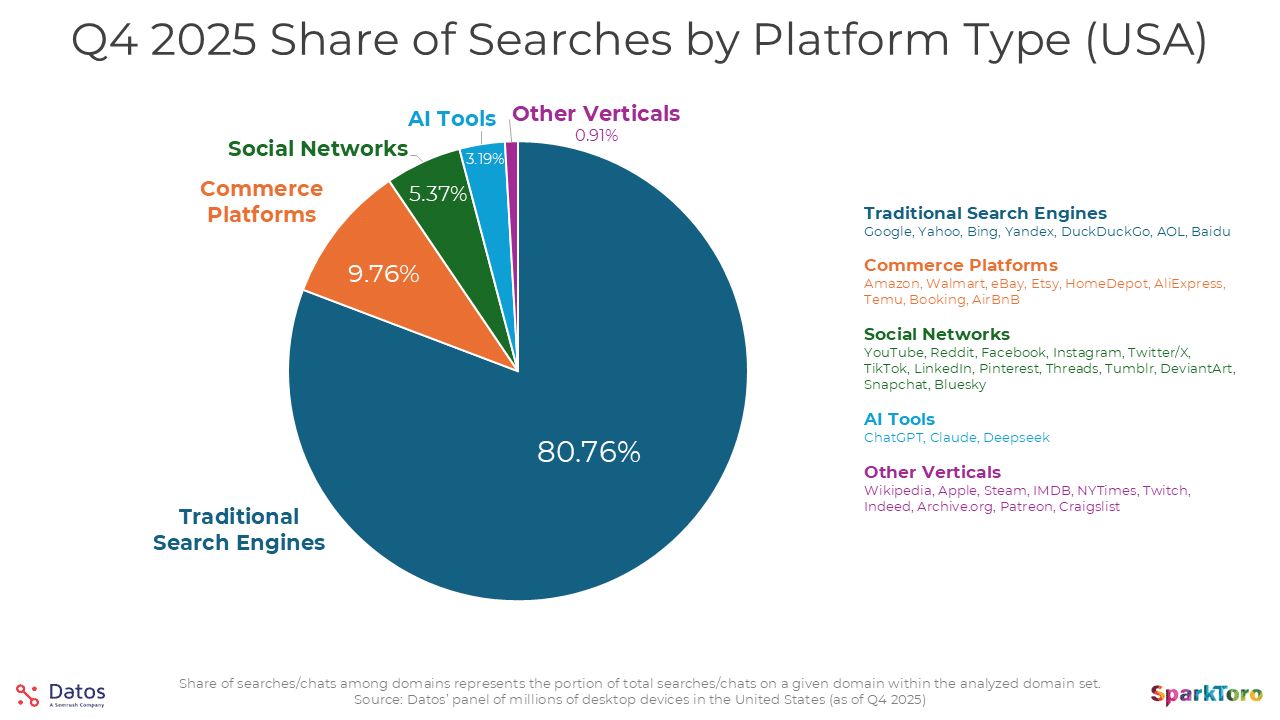

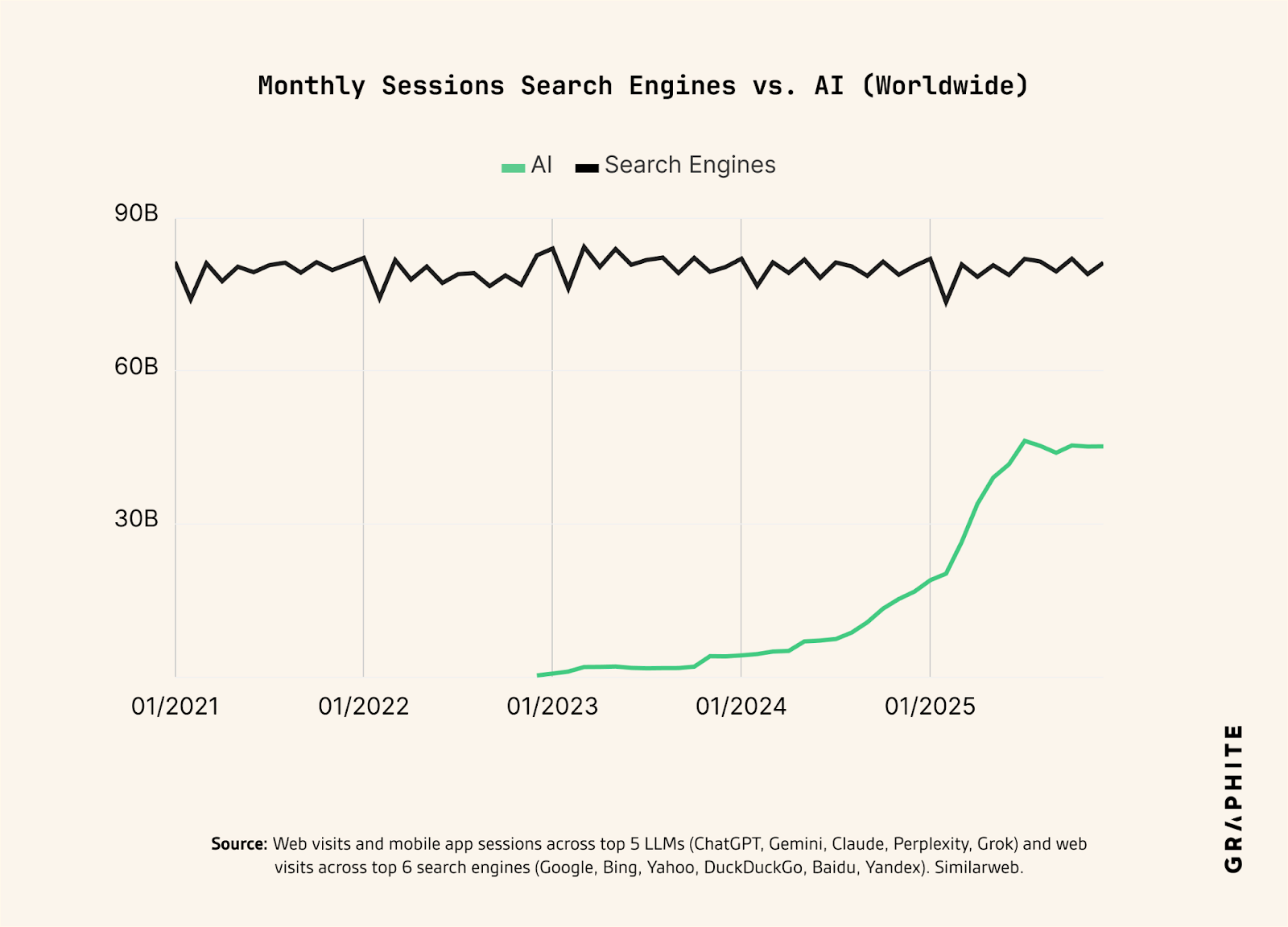

This week: AI search reshapes discovery with a 38% AI Overview CTR shift, LinkedIn becomes a top AI citation source, and ChatGPT moves toward conversion-based advertising models.

Here's what happened this week in digital marketing:

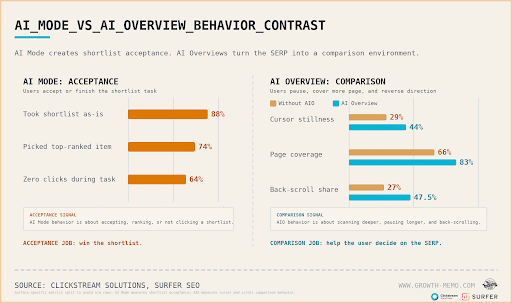

1. New Study Shows Users Behave Differently In AI Overviews Vs. AI Mode

A new clickstream study analyzing 846,000 Google search sessions found that AI Overviews are significantly changing user behavior on the SERP.

The research showed users spend more time evaluating results, scrolling back up the page, and comparing listings when an AI Overview appears. In fact, nearly half of all scrolling on AI Overview SERPs involved users reversing direction to recheck earlier results.

The study also found that branded searches are becoming less direct. Even users searching specifically for a company or brand spent more time reviewing surrounding SERP content before clicking through.

Another major takeaway: traditional search intent patterns are starting to blur. Whether users searched for informational, local, or navigational queries, AI Overviews kept users engaged on the SERP for similar amounts of time.

Why We Care: For marketers, that means title tags, meta descriptions, and overall SERP visibility matter more than ever. Strong messaging, recognizable branding, and clear differentiation could play a bigger role in earning clicks as users spend more time evaluating results directly on the SERP.

2. Google Expands AI Search Features With Preferred Sources & Highly Cited Labels

Google is rolling out several new features across AI Overviews and AI Mode designed to help users identify trusted and authoritative content more easily.

One of the biggest updates is the expansion of Preferred Sources into AI-generated search experiences. Users will now see labels highlighting publishers and websites they’ve previously selected as trusted sources directly within AI responses.

Google also introduced a new perspectives carousel that surfaces timely articles, forum discussions, and social media conversations for queries tied to developing topics.

In addition, the company is expanding its “Highly Cited” label across Search. The label highlights articles frequently referenced by other publishers and will also indicate when a story cites a highly referenced source.

Why We Care: For brands and publishers, this reinforces the importance of building recognizable authority signals, earning citations from reputable sources, and creating content users actively seek out and trust. As AI search evolves, visibility may increasingly depend on perceived credibility, not just rankings alone.

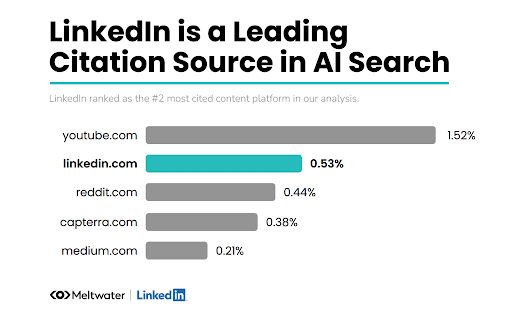

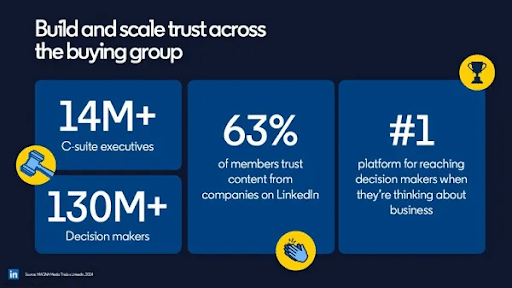

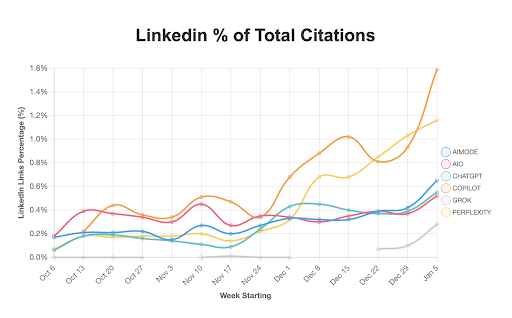

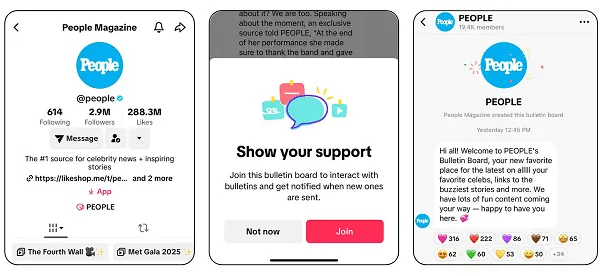

3. LinkedIn Remains #2 Source in AI Responses

A new report shows that LinkedIn remains the No. 2 source in AI search responses.

The study, conducted by Meltwater and released by LinkedIn, showed that LinkedIn is the number 2 source, behind YouTube, for AI Search responses.

Not only is LinkedIn a top source of information, but you don’t necessarily have to be a top LinkedIn influencer to gain a spot in some of those AI-generated answers. Of those responses:

- 40% came from Established LinkedIn members with 10k+ followers

- 35% came from Everyday LinkedIn members with fewer than 10k followers

- 25% came from Company Pages

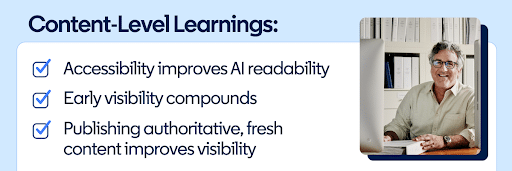

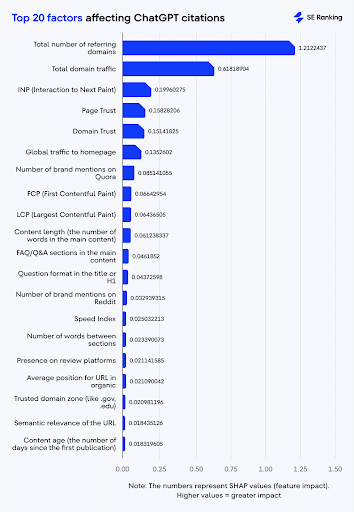

The study also shared the following structural must-haves for content to increase its odds of being cited:

- 100% had bullet lists & numbered items

- 92% had clear H2/H3 section headings

- 75% named specific companies or tools

- 67% included hard numbers and data

- 50% included a comparison or evaluation framework

- 33% included “How to Choose” decision guides

- 25% had the year in the title (2025/2026)
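A few of the traits in the list above are mechanical enough to check automatically before publishing. The sketch below is a rough illustration, not the study's methodology: it assumes the draft is kept in Markdown, the function name `structure_checklist` is made up for this example, and "hard numbers" is approximated by looking for percentages or dollar figures. Traits that need human judgment (named companies or tools, comparison frameworks) are left out.

```python
import re


def structure_checklist(title, markdown_body):
    """Report which mechanically checkable structural traits a draft has."""
    lines = markdown_body.splitlines()
    return {
        # "- item", "* item", "+ item", "1. item" or "1) item"
        "bullet_or_numbered_list": any(
            re.match(r"\s*(?:[-*+]|\d+[.)])\s+\S", line) for line in lines
        ),
        # Markdown H2 or H3 headings ("## " or "### ")
        "h2_h3_headings": any(re.match(r"#{2,3}\s+\S", line) for line in lines),
        # Rough proxy for hard numbers: a percentage or a dollar amount
        "hard_numbers": bool(
            re.search(r"\d+(?:\.\d+)?\s*%|\$\s*\d", markdown_body)
        ),
        "how_to_choose_guide": "how to choose" in markdown_body.lower(),
        # A four-digit year such as 2025 or 2026 in the title
        "year_in_title": bool(re.search(r"\b20\d{2}\b", title)),
    }


if __name__ == "__main__":
    draft = (
        "## Top tools\n\n"
        "- Tool A cut costs by 30%\n"
        "- Tool B\n\n"
        "### How to choose\n"
        "Pick the one that fits your team."
    )
    for trait, present in structure_checklist("Best CRM Tools for 2026", draft).items():
        print(f"{trait}: {'yes' if present else 'no'}")
```

A missing trait is a prompt to review the draft, not proof that it will or won't be cited.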

If you’re producing content, specifically in the B2B space, and you’ve been ignoring LinkedIn, you might want to take this as a sign to start putting more effort into the platform.

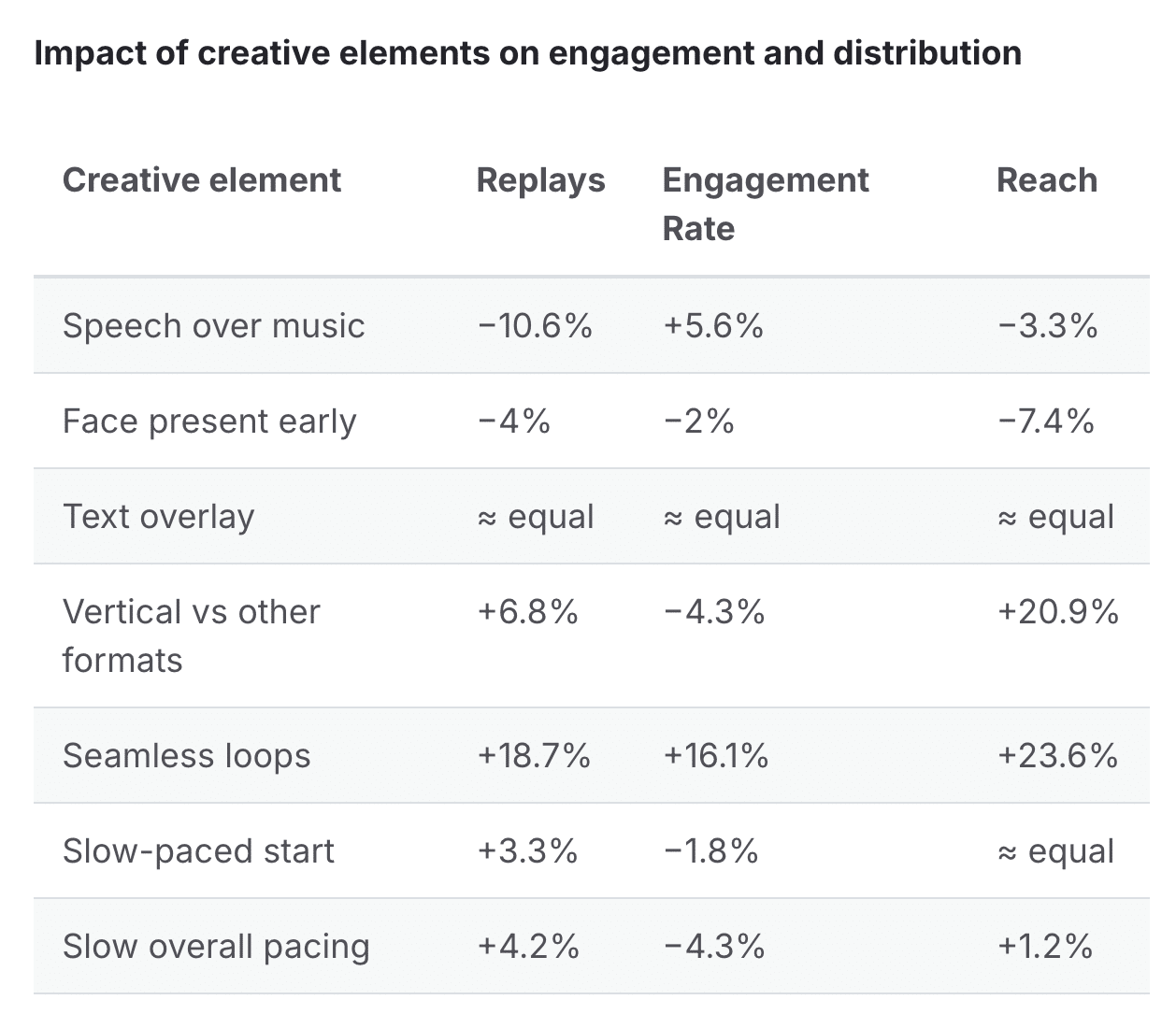

4. LinkedIn Releases Video Advice for Advertisers

LinkedIn released some new guidance this week to help advertisers gain more traction on the social media site.

In the short video, a LinkedIn expert breaks down what is working on the app, including what to post and when.

The article also shares links to examples of LinkedIn videos that are well done and explains why. One of the biggest takeaways is that the platform suggests posting “two to five posts per week, with two of them being video.”

LinkedIn is a great resource for connecting, especially for B2B companies. If you’ve been trying to gain traction on the site, it’s worth looking into these suggestions.

5. LinkedIn Shares 3 Step Framework for B2B Promotions

LinkedIn must really want B2B advertisers to step up their usage of its platform, because it also released new 3-step guidance on how to better promote content.

In the article, LinkedIn’s product marketing and GTM leader, Robert Yanik, shares that most B2B advertisers focus too much on the immediate spike of attention after posting, instead of the follow-through.

To increase that engagement, he suggests following this three-step framework:

- Ramp: Spend a few weeks building awareness by using sponsored posts and video content

- Launch: Maximize your high-impact placements with Premier video ads, CTV promotions, and Thought Leader Ads to improve credibility

- Nurture: Use re-engagement tactics to convert those who have engaged with your ads.

While this guidance is directed towards LinkedIn advertisers, the core of this framework could be applied across multiple digital marketing channels.

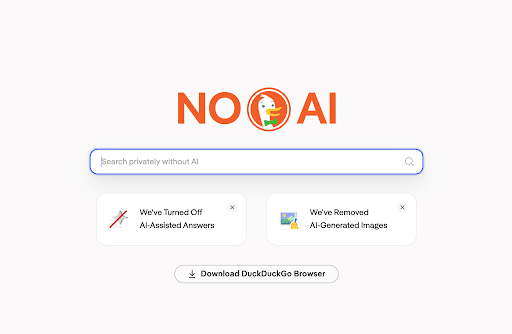

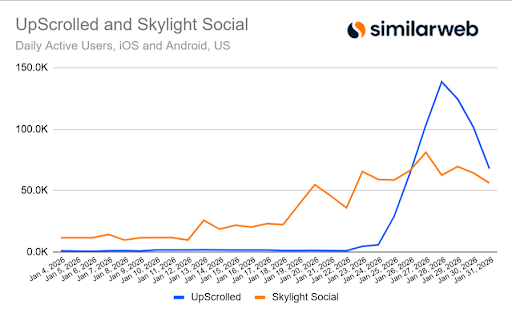

6. DuckDuckGo Installs Up 30% in Wake of Google’s AI Changes

Not everyone is in love with AI, and it’s starting to show. In response to Google’s recent announcement of its Search overhaul, DuckDuckGo reports a 30% increase in app installs.

At the I/O Conference last week, Google announced a massive overhaul of its Search product, specifically that it will include more AI features than before.

Not everyone was happy, and it caused some Google users to search for alternatives. DuckDuckGo has stepped in to fill that AI-less void. According to CEO Gabriel Weinberg, “Not only do we respect user choice, but also user privacy. Everything you do in DuckDuckGo is private, we don’t collect search histories or chats and nothing is used for AI training.”

DuckDuckGo isn’t completely AI-free. Users can opt into using AI tools on the platform. The biggest difference is that users are given a choice, whereas with Google, AI usage is mandatory.

It’ll be interesting to see whether this trend continues to rise or levels out after the shock of Google’s announcement wears off. Regardless, keep an eye on your site traffic over the next few weeks to check whether more organic visits start coming from DuckDuckGo.

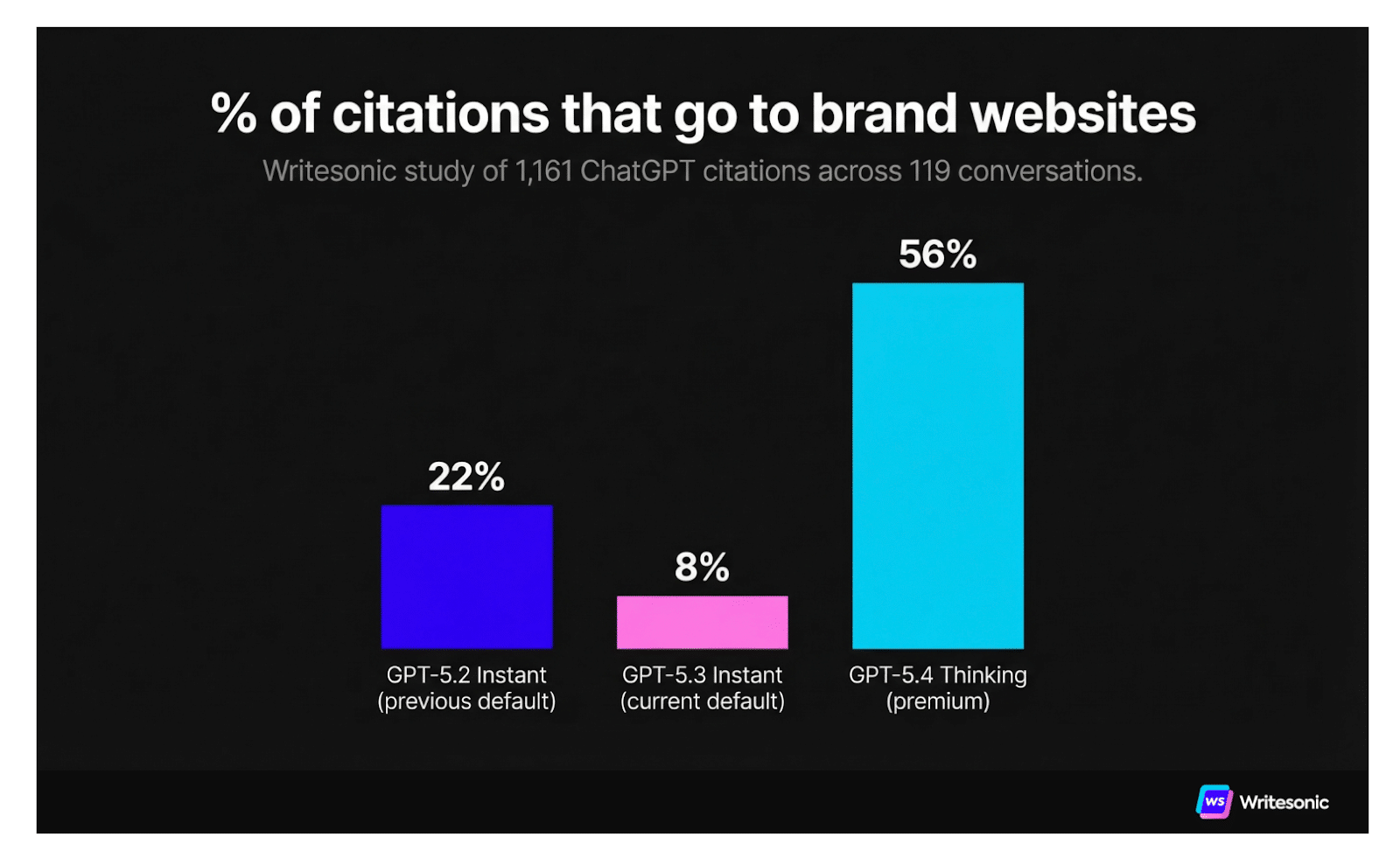

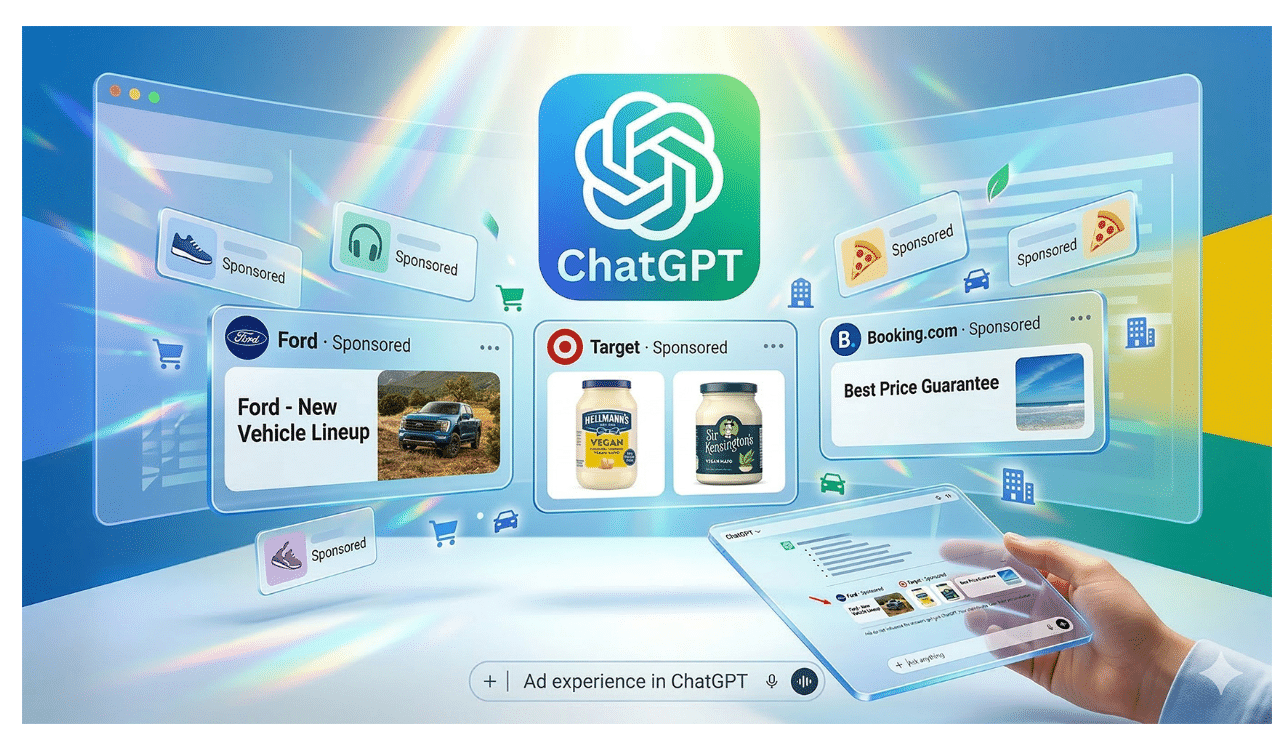

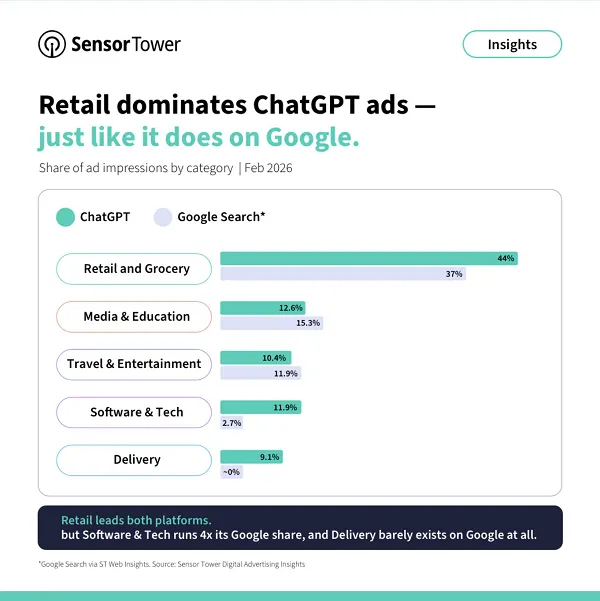

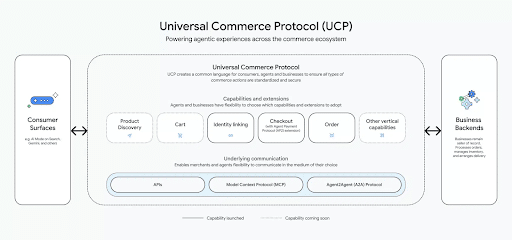

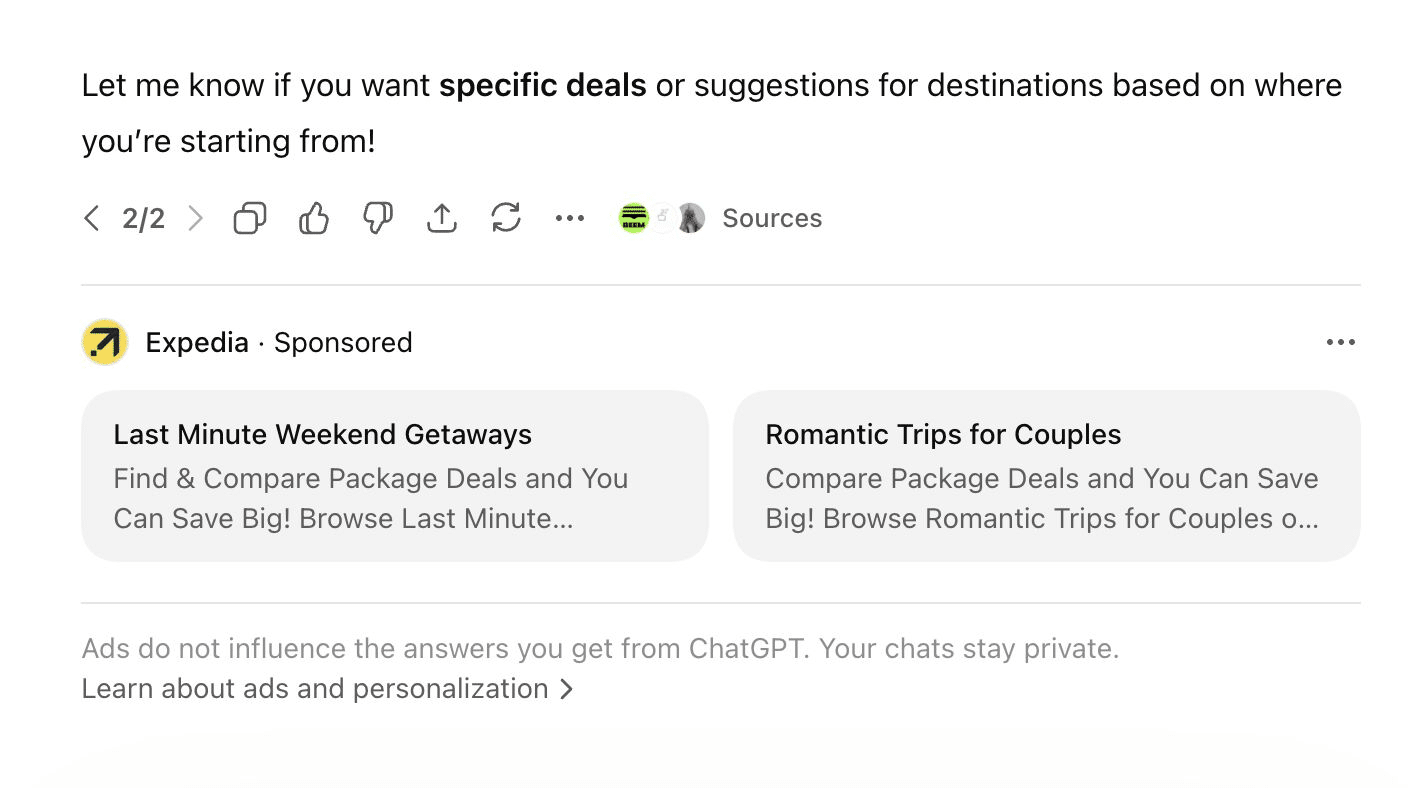

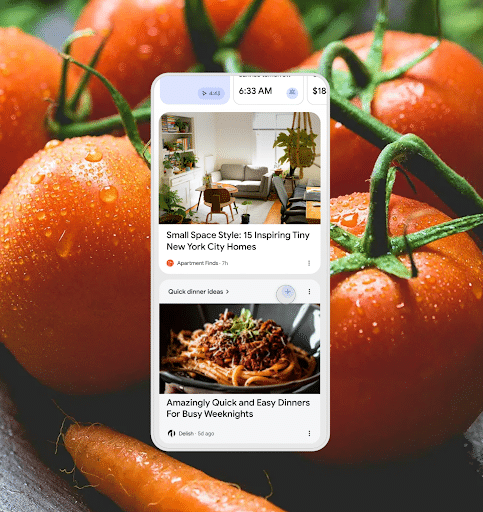

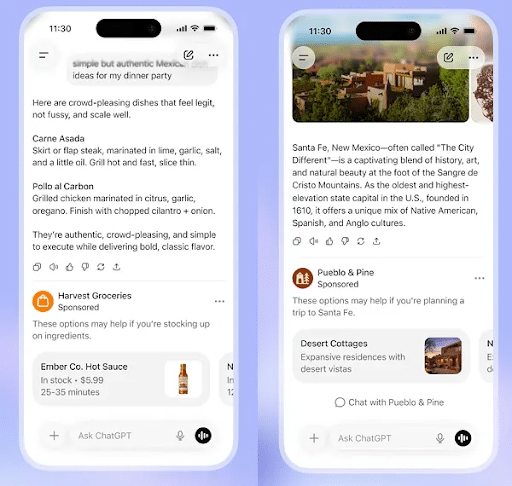

7. Conversion-Focused Ads Are Coming to ChatGPT

OpenAI is preparing to expand its ad opportunities.

In the coming weeks, the AI company plans on rolling out ads that allow consumers to take direct actions, including:

- Making purchases

- Booking appointments

- Submitting contact forms

With this type of ad, advertisers would only pay per conversion, similar to existing PPC ads. According to The Information article that announced the news, "To test the campaigns, advertisers will need to install OpenAI’s ad pixel, a piece of code on their websites that helps track what users do after clicking on an ad, they said. While pixels are a common piece of ad tech, they aren’t a surefire method for tracking, since browsers or ad blockers can sometimes block them. OpenAI is also encouraging advertisers to connect their internal systems with a recently launched application programming interface. The API allows advertisers to send back details about actions people take on their own site, which helps OpenAI make the case that the ads drive results."

Why We Care: OpenAI’s move into the advertising space is happening quickly. Currently, it’s fairly expensive to run ads on ChatGPT. If their PPC-style ads take off, it could open up a more affordable, accessible advertising channel for even more brands and smaller businesses.

8. Google Ads Introduces New Data Retention Limits

Google is rolling out a new data retention policy for Google Ads that will change how long advertisers can access historical performance data within the platform and APIs.

Starting June 1, Google Ads will only retain hourly, daily, and weekly reporting data for 37 months. Once that window passes, this data will no longer be accessible inside the Google Ads interface or via API.

Longer-form reporting data will remain available for extended periods. Monthly, quarterly, and annual data will be stored for up to 11 years.

Google is also applying shorter retention windows to key audience and reach metrics. Data such as unique users, impression frequency, and frequency distribution will only be available for three years.
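To get a feel for what these windows mean in practice, the sketch below estimates the oldest date still available for each reporting granularity. It is a back-of-the-envelope illustration only: the month counts come from the figures above, the dictionary keys and function names are made up for this example, and the assumption that the window is counted back from today's date (rather than from some other boundary) is ours, not Google's.

```python
import calendar
from datetime import date

# Retention windows in months, based on the figures reported above.
# The key names are illustrative, not official Google Ads terms.
RETENTION_MONTHS = {
    "hourly": 37,
    "daily": 37,
    "weekly": 37,
    "monthly": 11 * 12,
    "quarterly": 11 * 12,
    "annual": 11 * 12,
    "reach_and_frequency": 3 * 12,
}


def months_back(day, months):
    """Return the date `months` calendar months before `day`.

    The day of month is clamped so that, for example, March 31 minus
    one month becomes the last day of February.
    """
    index = day.year * 12 + (day.month - 1) - months
    year, month = index // 12, index % 12 + 1
    last_day = calendar.monthrange(year, month)[1]
    return date(year, month, min(day.day, last_day))


def oldest_available(granularity, today):
    """Estimate the oldest date still retained for a given granularity."""
    return months_back(today, RETENTION_MONTHS[granularity])


if __name__ == "__main__":
    today = date(2026, 6, 1)
    for name in RETENTION_MONTHS:
        print(f"{name}: data older than {oldest_available(name, today)} may be gone")
```

Anything older than the estimated date is a candidate for export before it ages out.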

Why We Care: Brands will likely need to revisit their data storage strategies and implement stronger export or warehousing processes to avoid losing access to critical performance history over time.

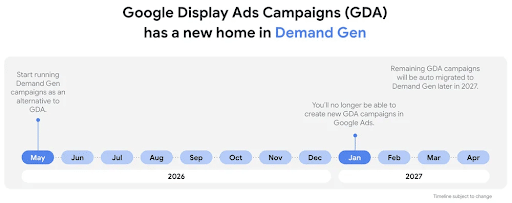

9. Google’s Standalone Display Campaigns Are Retiring

It’s official. Google is retiring the Display Network campaigns.

In an announcement this week, Google shared that it is moving Google Display Ads into a “more unified environment.” From now until January 2027, advertisers can use Google Display Network (GDN) campaigns through Demand Gen campaigns.

After January 2027, advertisers will no longer be able to create new GDN campaigns. Later in 2027, all remaining GDN campaigns will be automatically migrated to Demand Gen.

Why We Care: Get a jump-start on the migration by moving your remaining Display Network ads into Demand Gen, which, according to Google, delivers higher ROI anyway.

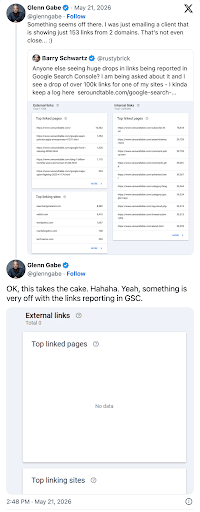

10. Glitch Makes Google Search Console Links Report Show Old Information

If your Google Search Console Links Report seems off this week, you’re not alone. Google has reported that a glitch caused the report to break last week.

Some users reported that almost 90% of their links were gone, with one user reporting that all of their previous links were gone.

Next Steps: If your reports are still incorrect, don’t panic. Google is still working on fixing the issue. Until it is resolved, however, the incorrect or old information will continue to appear on your report dashboard.

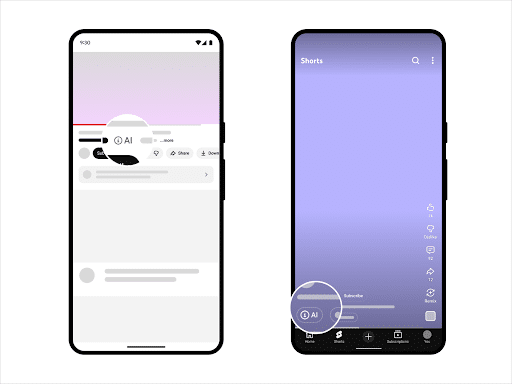

11. YouTube to Automatically Label AI Videos

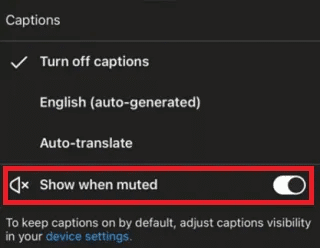

YouTube announced this week that it will start to automatically label AI-generated content.

YouTube has already been labeling AI content since 2024, but only when creators disclose that they’ve used AI tools.

Now, the app will move the labels to a more obvious spot on the videos, saying that:

- For Long-form Videos: The label will now appear directly below the video player, above the description.

- For Shorts: The label will appear as an overlay on the video itself.

Starting this month, the platform also rolled out new internal signals to automatically identify AI-generated content. While creators will still be asked to disclose their use of AI, if YouTube's systems detect AI, the video will be marked regardless of the creator’s disclosure.

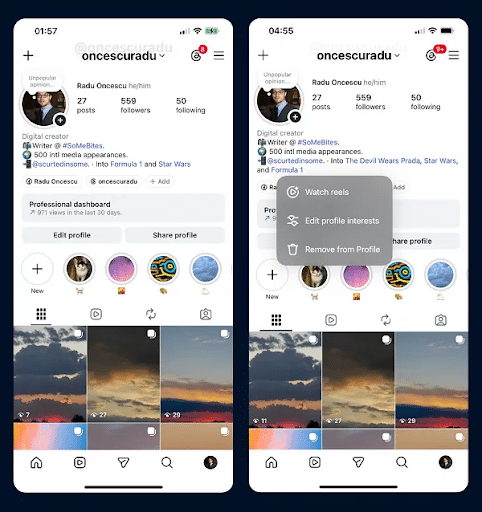

12. Instagram Tests New Profile Features

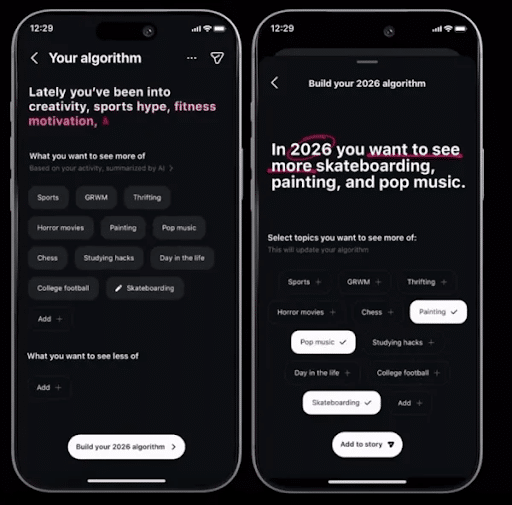

Instagram is once again trying to help users connect with more like-minded accounts.

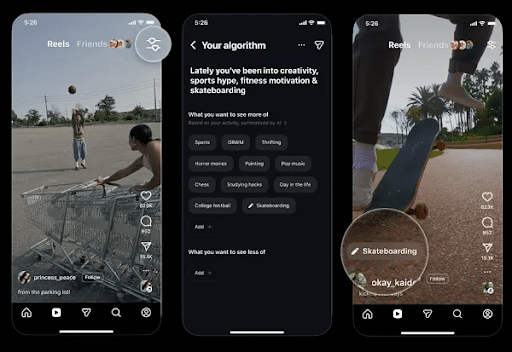

The new tool allows users to add up to five topics that they are interested in directly to their profile. Not only will this help connect users with similar interests, but it will also influence the type of content each user sees.

Similar to the Your Algorithm option, it’s another way of giving users more control over what they see. It’s also another prime opportunity for brands to find users who may be interested in their products or services.

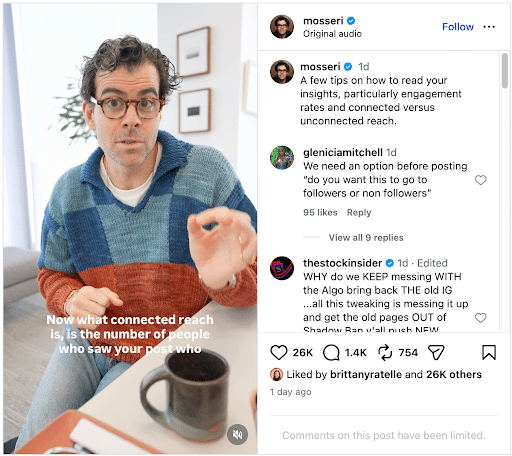

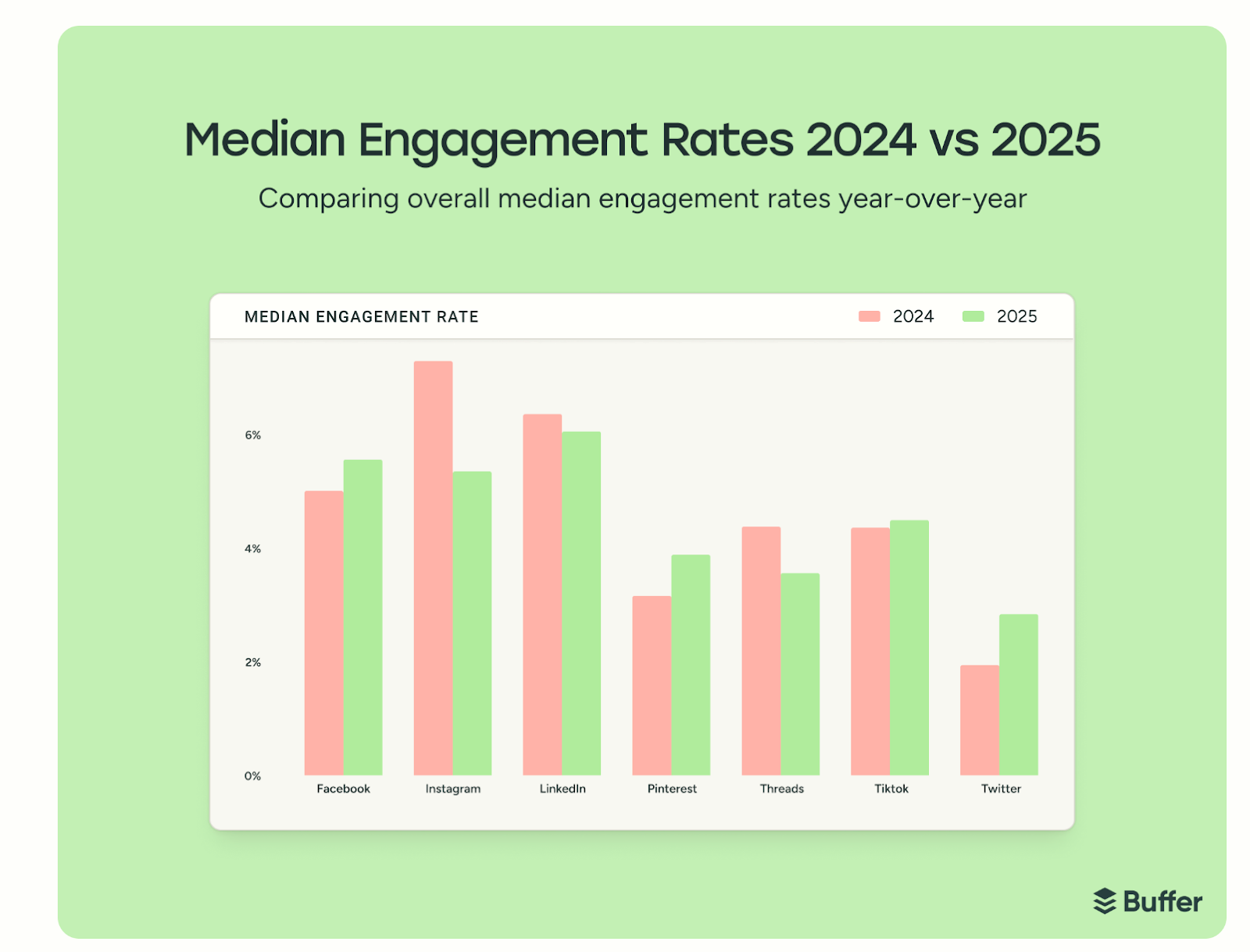

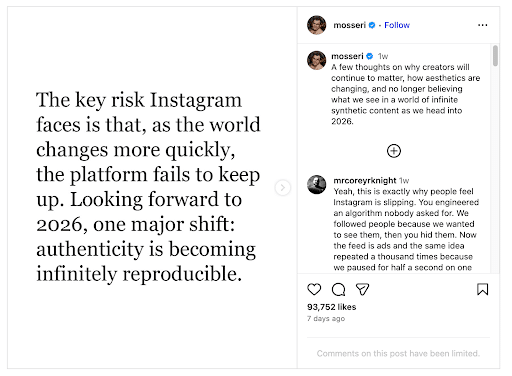

13. Instagram’s Mosseri Shares Engagement Rate Insights

Instagram’s head, Adam Mosseri, shared some new insights on his personal Instagram account that some brands and advertisers may want to pay attention to.

In his latest Reel, he shares that: “The thing I think that matters most, and that you really should focus on, is not how much reach your post got. That is an important thing, obviously, but if you want to understand why a post got more or less reach, you should focus on your engagement rates.”

He also shared that engagement rates dictate reach: the more people engage with your content, the more Instagram shows it to people who want to see it. If your content is worth engaging with, it’s worth pushing out to more people. One of the most valuable forms of engagement is shares, because that shows that people are going out of their way to share your content with others. If other people are sharing it, Instagram figures it might as well, too.

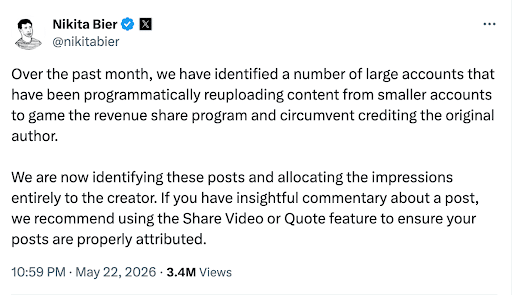

14. X to Offer More Incentives for Original Creators

X, formerly Twitter, is continuing to target creator accounts in an attempt to build up the platform.

Basically, X’s Head of Product, Nikita Bier, is saying the platform no longer wants to allow larger accounts to take credit for smaller creators’ content. It’s just one more move that sounds like X is working to refine its platform and welcome back smaller creators.

Whether these moves positively affect the site and encourage advertisers and users to come back to the platform remains to be seen, but it’ll be interesting to watch it play out over the next few months.

Weekly Homework:

- If you’re a B2B brand looking to gain traction with AI search, consider increasing your LinkedIn content.

- If you do decide to increase your LinkedIn traffic, read through the recent guidance on how to increase engagement on your posts and improve ROI for your launches with LinkedIn’s recently released advertising insights.

- Keep an eye on your organic web traffic to see if you receive an increase in visits from DuckDuckGo.

- If you’re looking at your Google Search Console Links report, keep in mind that a glitch could be providing incorrect information. If your report looks off, try pulling it up later.

- If you still have standalone Google Display Ads running, start transferring them to Demand Gen before Google does it for you.

- If you’re posting AI-generated content on YouTube, be sure to disclose it, or YouTube will mark it for you.

Digital Marketing News 5/16/2026 to 5/22/2026

This week: AI search hits massive scale with Google AI Mode reaching 1B users, Universal Cart reshapes ecommerce tracking, and Meta signals a major shift toward social-first search.

Here's what happened this week in digital marketing:

1. Google I/O Unveils the Biggest Search Change in 25 Years

At this week's Google I/O conference, Google unveiled its new AI-powered Search feed.

Now, instead of returning the traditional list of blue links, Google will bring searchers into an AI-powered interactive experience. Searchers can use AI tools to ask follow-up questions and get more information about their queries.

This summer, Google users will also be able to create their own informational agents within Google Search. These agents work 24/7 to track changes on the web and inform the searcher of any changes.

What This Means for Marketers: These changes mean that searchers who used to click blue links (perhaps even YOUR blue link) will now be more focused on reading information generated by AI agents. This could result in lower organic traffic and fewer visitors to your site.

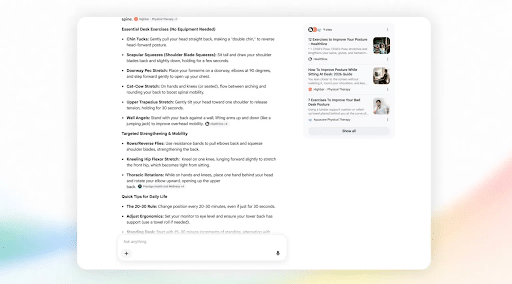

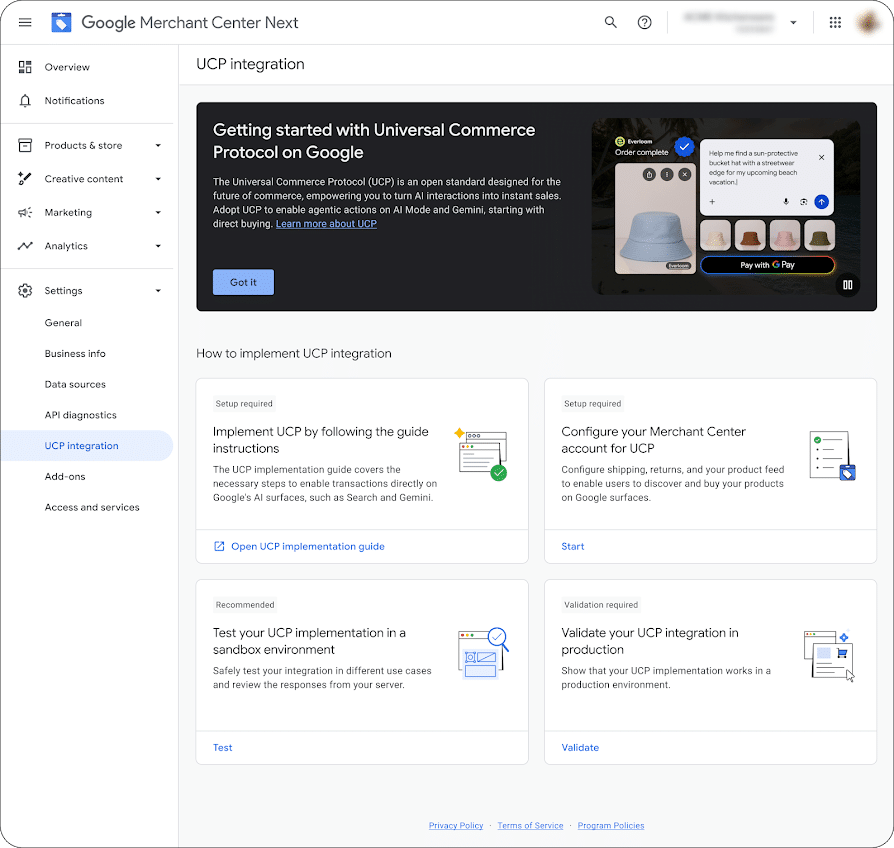

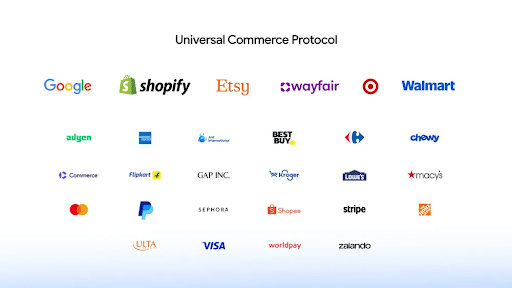

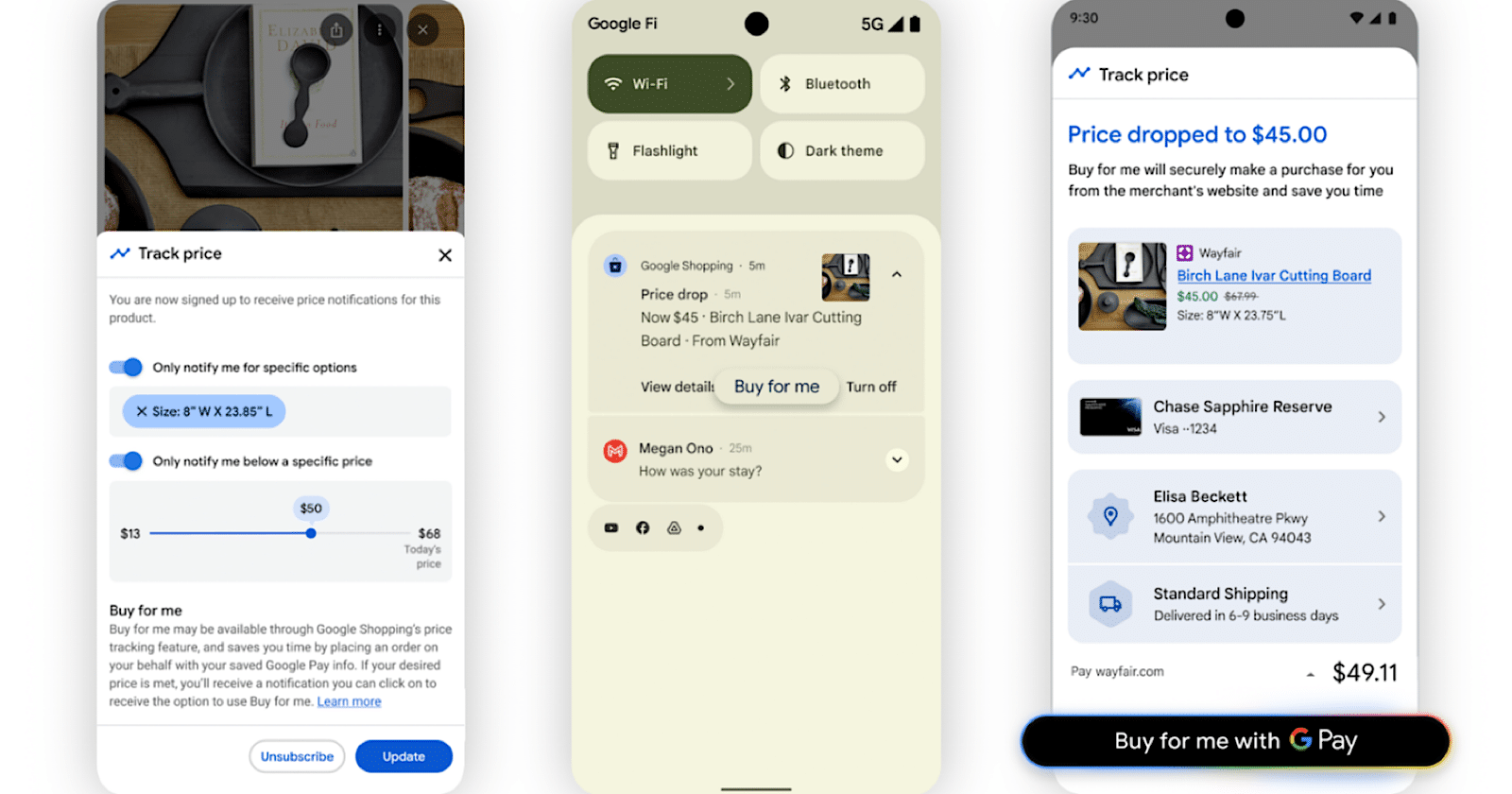

2. Introducing Google’s New Universal Cart

Google has been busy announcing new tools at this week’s Google I/O conference. Let’s take a look at the new Universal Cart.

The new AI-powered shopping experience works across Search, Gemini, YouTube, Gmail, and participating merchants. Whenever you add something to your cart, the tool will search the internet for deals and price drops, show pricing history, and send alerts when out-of-stock items are back in stock.

The system also works with Google Wallet, so shoppers can still apply loyalty programs and merchant offers while shopping with the tool.

Currently, the tool only works with select merchants, including Nike, Sephora, Target, Ulta Beauty, Walmart, and Wayfair. Select Shopify merchants will be added to the workflow later this summer.

3. AEO and GEO are Still SEO, Says Google

In a new documentation page, Google addresses the elephant in the room: AEO, GEO, and SEO.

To make a long story short, “From Google Search’s perspective, optimizing for generative AI search is optimizing for the search experience, and thus still SEO.”

The documentation also notes some best practices to follow, such as:

- Providing a unique point of view

- Creating non-commodity content that’s helpful, reliable, and people-first

- Organizing content in a way that helps your readers

- Adding high-quality images and video

- Focusing on what your users want and avoiding overdoing it

- Making sure your AI-generated work meets the standards of the Search Essentials and spam policies

To get more AI insights directly from Google, check out the recently updated documentation page.

4. Google Sends Mixed Signals on llms.txt as Lighthouse Adds “Agentic Browsing” Checks

Google is downplaying the need for llms.txt in AI search visibility, reiterating that websites don’t need special AI-specific files to appear in generative search results.

However, a new experimental update in Chrome Lighthouse now includes an “Agentic Browsing” audit category that checks whether a site has an llms.txt file. This sits alongside other machine-readability signals like accessibility structure, layout stability, and WebMCP integration.

The twist is that this audit isn’t about SEO rankings; it’s about how well sites can be interpreted by AI agents and browsing tools. Still, the inclusion of llms.txt in Lighthouse has sparked confusion, especially given Google’s recent guidance stating it isn’t required for AI search.

Key Takeaway: Don’t overreact to llms.txt, but do pay attention to the direction of travel. Google is clearly prioritizing structured, accessible, machine-readable websites, even if no single file is currently required for search visibility.
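If you simply want to know whether your site already serves an llms.txt file, a quick presence check is enough. The sketch below is not a reproduction of the Lighthouse audit; it assumes the file would live at the site root, that you pass a full URL including the scheme, and that your server answers `HEAD` requests (some don't). The function names are made up for this example.

```python
from urllib.error import URLError
from urllib.parse import urljoin
from urllib.request import Request, urlopen


def llms_txt_url(site_url):
    """Build the root-level llms.txt URL for a full site URL (scheme included)."""
    return urljoin(site_url, "/llms.txt")


def has_llms_txt(site_url, opener=urlopen, timeout=10):
    """Return True if the site answers 200 for /llms.txt, False otherwise.

    `opener` defaults to urllib's urlopen and can be swapped out for testing.
    """
    request = Request(llms_txt_url(site_url), method="HEAD")
    try:
        with opener(request, timeout=timeout) as response:
            return response.status == 200
    except (URLError, TimeoutError):
        # Covers HTTP errors such as 404 as well as connection problems.
        return False


if __name__ == "__main__":
    # example.com is a placeholder; replace it with your own domain.
    print(has_llms_txt("https://example.com/"))
```

A `False` result here says nothing about search visibility; per Google's guidance above, the file isn't required for AI search.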

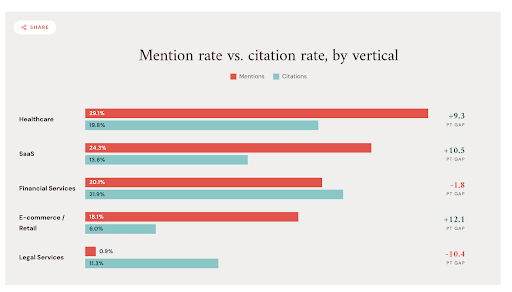

5. Study Finds 90% of Brands Have Zero AI Search Mentions

Not showing up in AI search? You’re not alone. A new study found that 90% of brands aren’t appearing.

The study found that the most commonly mentioned industries included:

- Healthcare

- SaaS

- Financial Services

- E-commerce/Retail

- Legal Services

When analyzing the results, the study found that:

- ChatGPT tends to pull from Reddit, Wikipedia, and industry-specific publications

- Perplexity heavily favors news outlets and specialist publishers

- Gemini pulls from Google-indexed content

- Google AI Overviews mirror traditional organic signals and favor brand-owned content

- Microsoft Copilot surfaces LinkedIn, Microsoft-adjacent properties, and B2B publications

Click here to read more about this study and see how you can apply its findings to your own strategy.

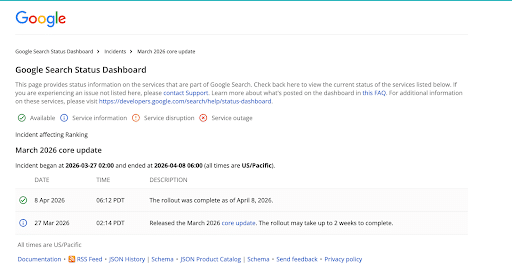

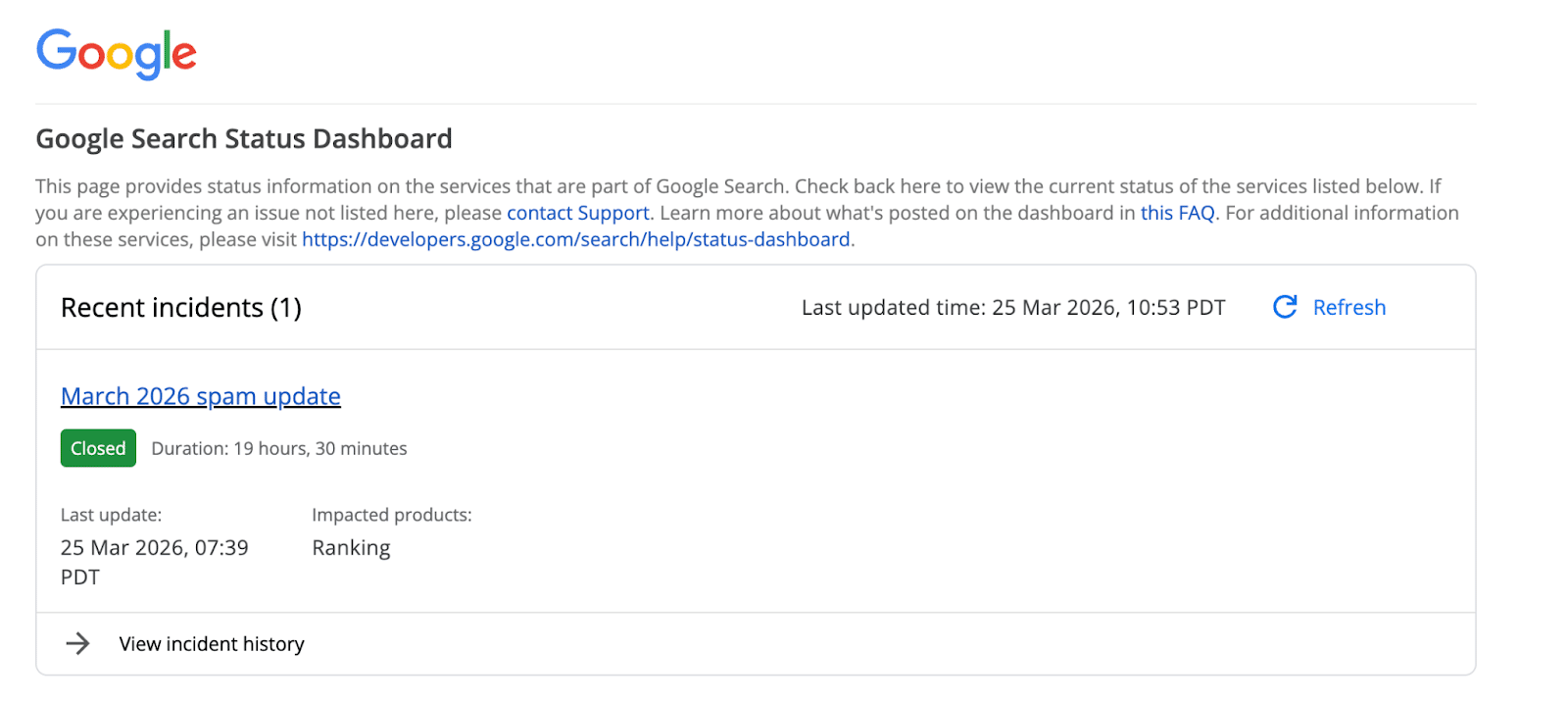

6. Google Rolls Out May 2026 Core Update

Google has officially launched the May 2026 core update, marking the second major algorithm update of the year following March’s rollout. The company confirmed the update via its Search Status Dashboard and LinkedIn, noting that the rollout could take up to two weeks to fully complete.

Like previous core updates, this one introduces broad changes to how Google evaluates and ranks content across all types of websites. The goal remains consistent: improving the surfacing of content that is more relevant, helpful, and satisfying to searchers.

Google did not provide new recovery guidance specific to this update. Instead, it reiterated its standard position: ranking drops don’t necessarily indicate issues with a site, and no specific “fix” exists outside of improving overall content quality and usefulness.

Key Takeaway: Core updates aren’t about quick fixes; they’re about alignment. Sites that consistently focus on people-first, high-value content are the ones best positioned to stabilize and recover over time.

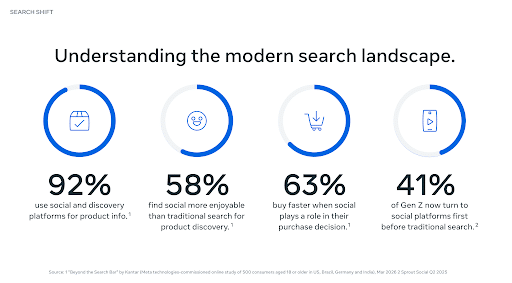

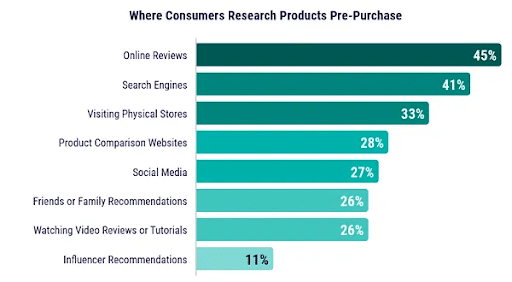

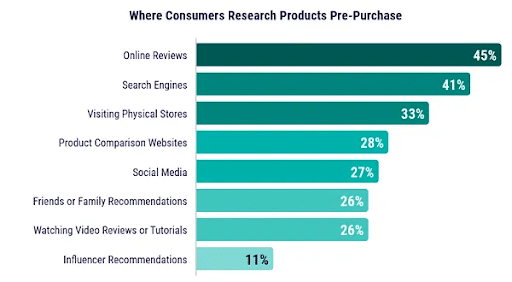

7. 92% of Consumers Use Social and Discovery Platforms for Product Info

According to a new Meta study, consumers are highly likely to make their next purchase on social media.

In the 16-page report, Meta noted that:

- 92% of people use social and discovery platforms for product information

- 58% find social media more enjoyable than traditional search for product discovery

- 63% buy faster when social media plays a role in their purchase decision

- 41% of Gen Z now turns to social media platforms first before traditional search

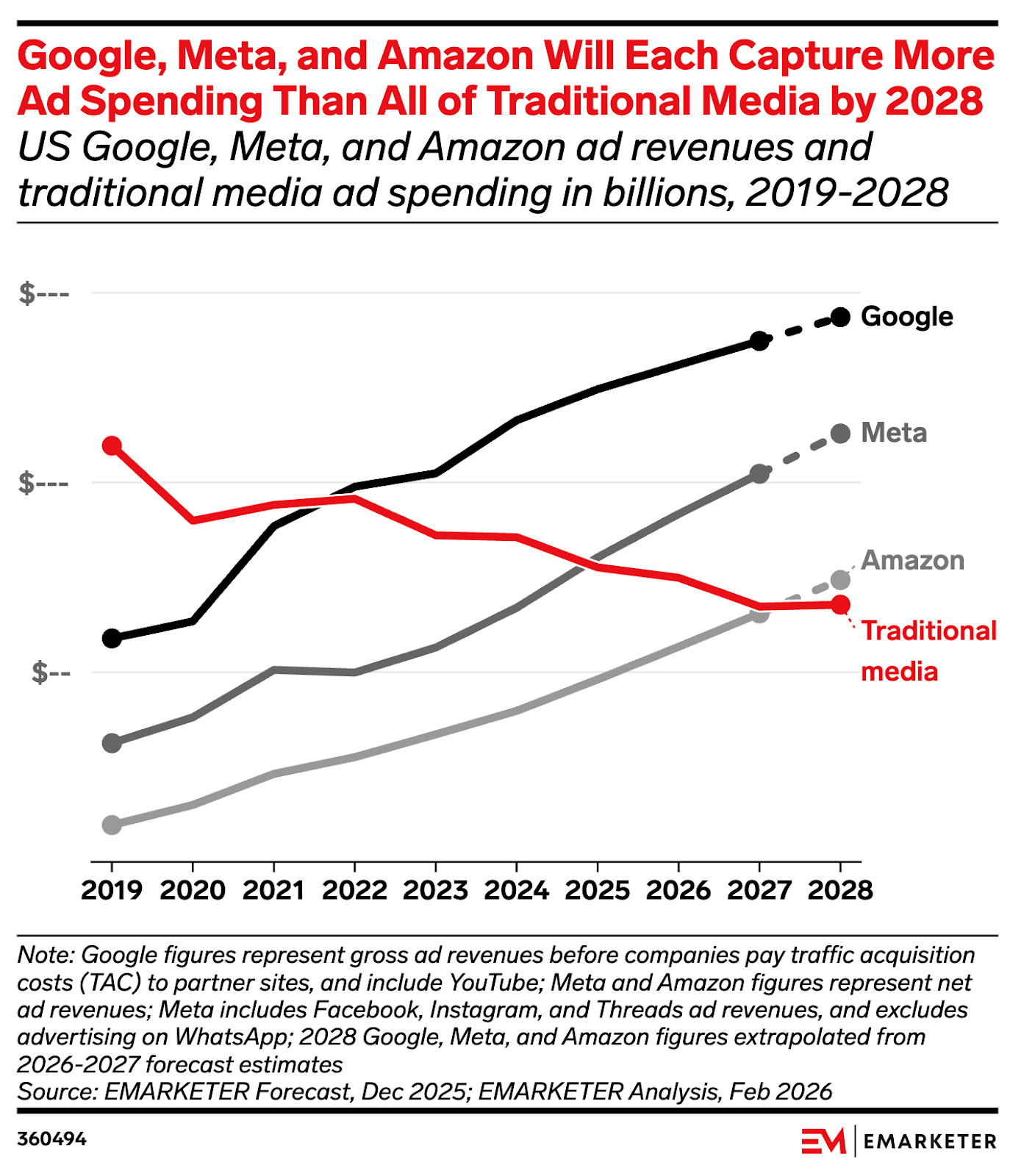

The study also shares that Google’s share of US search ad spending will fall below 50% by the end of 2026. It adds that online creators and UGC play a key role in the success of social media and in boosting consumer interest in your products.

Read more about this interesting study and determine how these findings impact your brand moving forward.

8. Meta Leans into AI Projects Amid Layoffs

Meta has always been at the forefront of AI adoption, and now, it’s taking it one step further. This week, the social media giant cut 8,000 employees and reassigned 7,000 others to AI-based projects.

According to the New York Times, “Employees will be moved to four new organizations focused on building new AI tools and apps, Janelle Gale, Meta’s head of human resources, said in an internal memo. The organizations will use ‘AI native design structures’ and have fewer managers per employee than other parts of the company, she said, adding that company leaders will send details about the new roles on Wednesday.”

This comes on the heels of news that Meta is investing hundreds of billions of dollars into more AI development.

How this will affect marketers and brands advertising on Meta’s platforms remains to be seen, but a workforce switch-up this big is sure to have a ripple effect.

9. Google Adds AI Content Verification to Search

Want to know if images are AI-generated? Now you can figure it out, thanks to Google’s new AI Content Verification tool.

Google announced this week that it is adding SynthID to Search, Gemini, Chrome, Pixel, and Cloud. This comes three years after it was introduced to the general market.

Google is also adding verification for C2PA Content Credentials, which is the industry standard for recording how media was created and modified. That rollout will start with Gemini and come to Search and Chrome within the next few months.

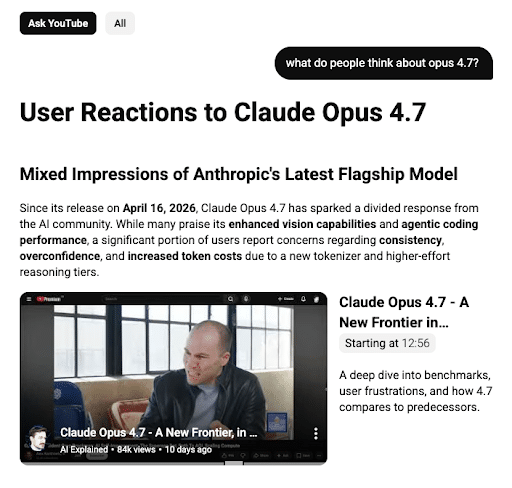

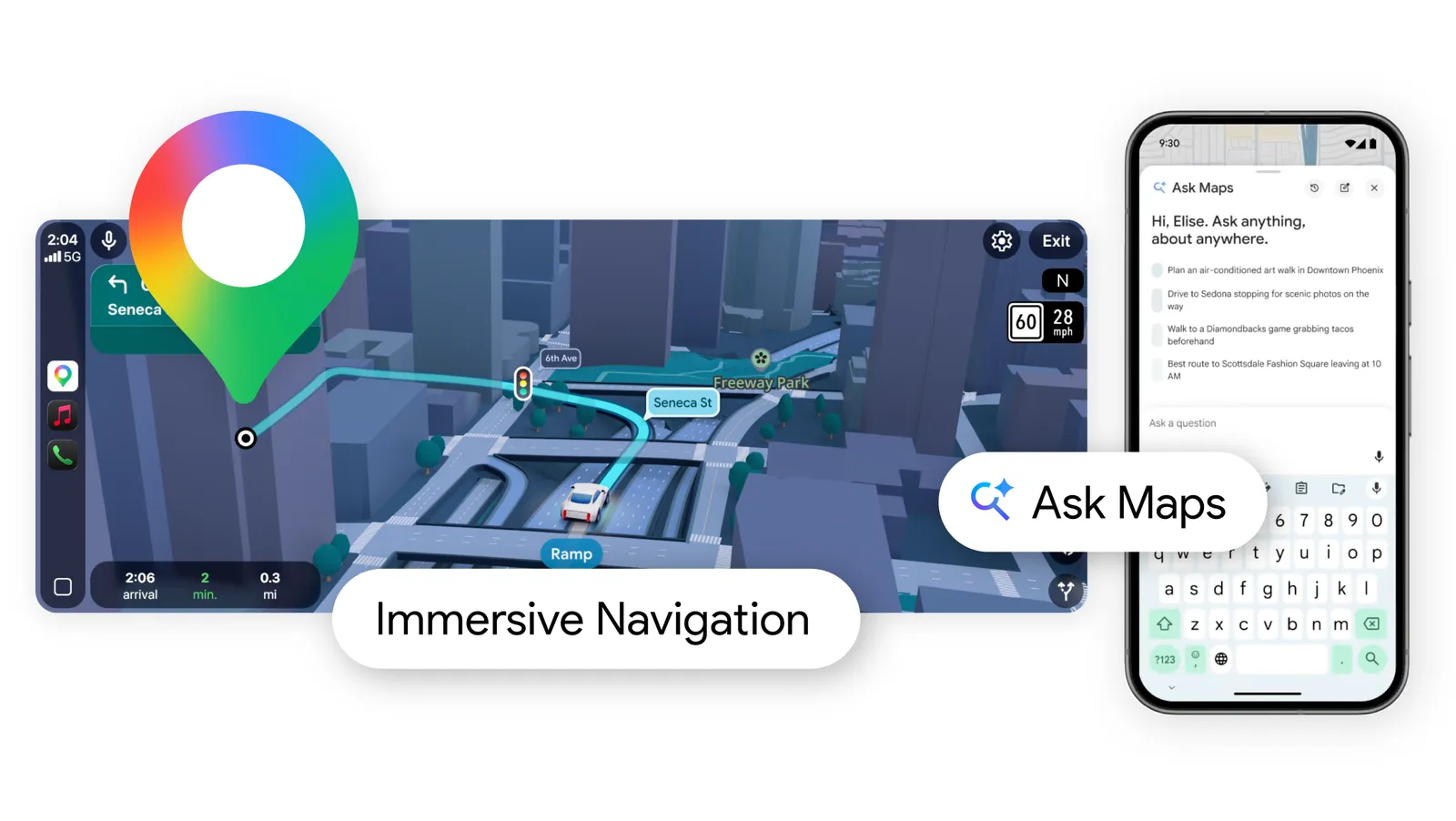

10. YouTube Expands AI Creation Tools: Ask YouTube

YouTube added new AI creation tools to its platform this week.

One of the new tools, Ask YouTube, allows users to ask more complex questions. Once YouTube provides an interactive, structured response complete with links to the most relevant videos from across the entire platform, searchers can then ask follow-up questions.

This tool is currently available to YouTube Premium members 18 and older in the United States, but the platform expects to release it to all YouTube users in the coming months.

Another tool, Gemini Omni, makes it easier for creators to collaborate and build together. Using AI, creators can add prompts and images to other people’s videos, enabling collaboration without technical skills.

11. LinkedIn to Introduce Gated, Creator-Led Events

LinkedIn has been making moves toward the creator space for a while now. This week, news broke that the platform wants to host thousands of creator-led events a year.

According to Business Insider, LinkedIn’s Premium Events generated $18.9 million between the second half of 2025 and the first half of 2026. The goal is to host even more of those events in the coming months.

This is a big step, especially for brands and creators looking to grow on LinkedIn and cement their presence as a “LinkedIn Influencer.”

It’ll be interesting to see if these steps lead to monetized content and more engagement on the platform.

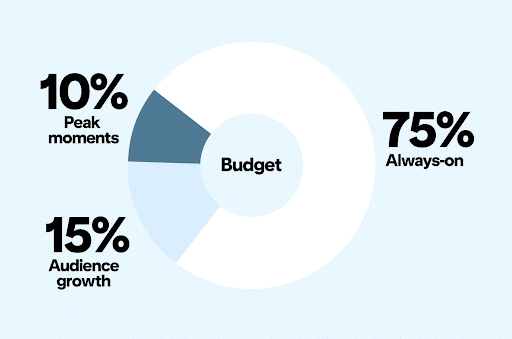

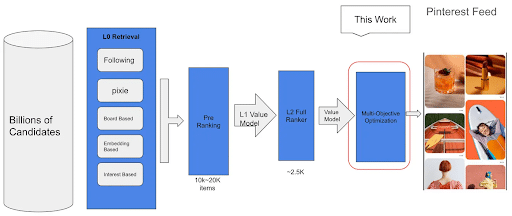

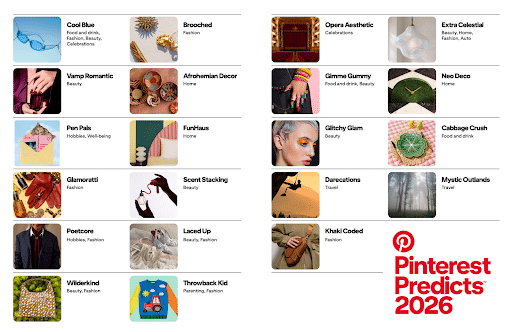

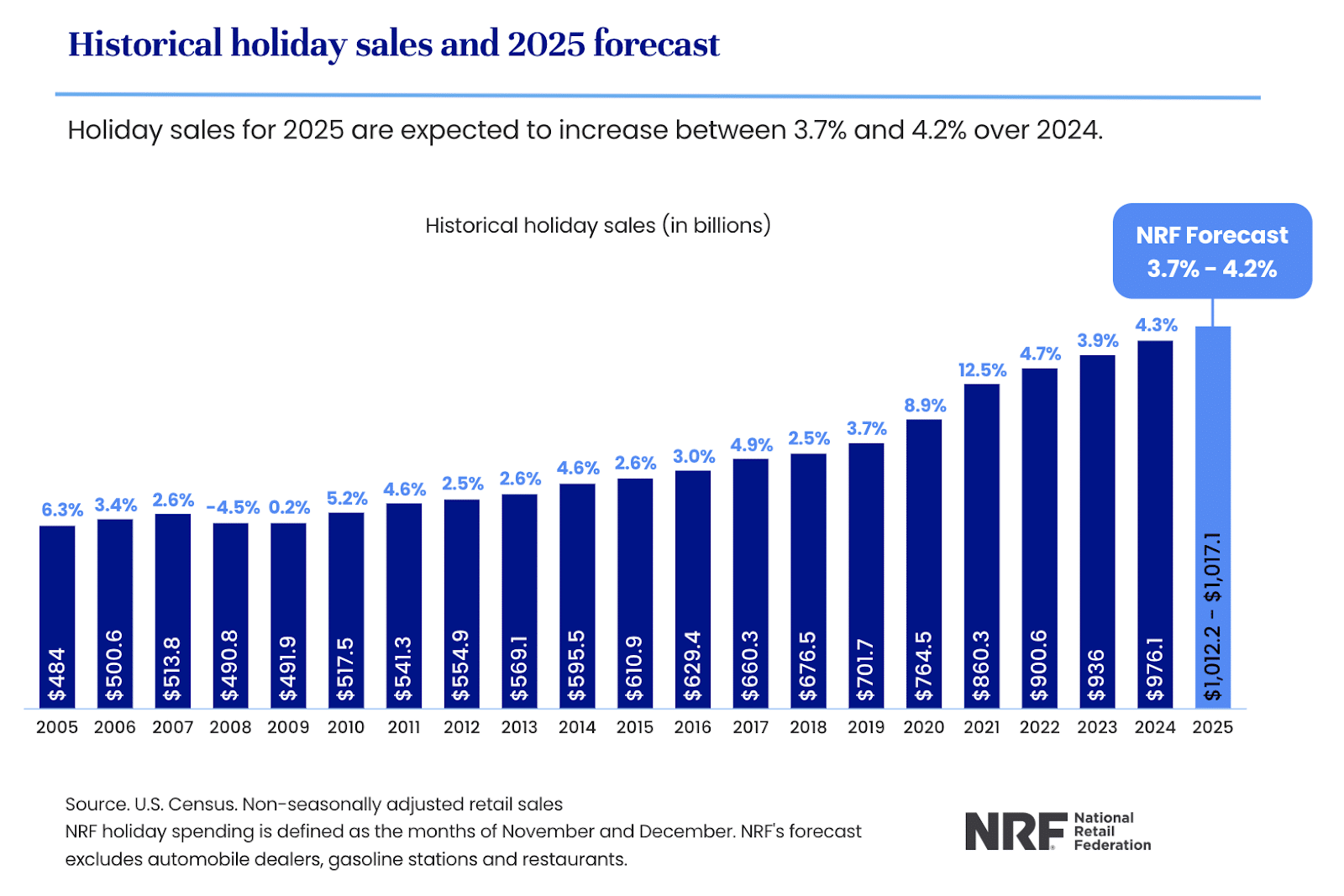

12. Pinterest Wants Brands to Start Thinking about the Holidays

We might have 31 weeks until Christmas, but Pinterest wants advertisers to start thinking about the holidays now.

In a new press release, the social media/search platform shared that now is the time to lay the foundation for a successful holiday season on the app. With 80 billion searches a month, Pinterest probably knows what it's talking about.

When preparing your holiday ad budget, Pinterest suggests thinking of it like this:

- 75% Always On – Designate three-quarters of your budget to evergreen campaigns

- 15% Audience Growth – Use this amount to reach new and mid-funnel audiences

- 10% Peak Moments – Use this amount for short, high-impact bursts of traffic around key seasonal events like Black Friday
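To see what that split looks like in dollars, here is a minimal sketch. The function name, the bucket keys, and the $20,000 budget are hypothetical and used only for illustration; the percentages are the ones Pinterest suggests above.

```python
def split_holiday_budget(total_budget: float) -> dict[str, float]:
    """Split a budget using the 75/15/10 allocation described above."""
    # Shares taken from the Pinterest guidance; bucket names are illustrative.
    shares = {
        "always_on": 0.75,
        "audience_growth": 0.15,
        "peak_moments": 0.10,
    }
    return {name: round(total_budget * share, 2) for name, share in shares.items()}


# Example: a hypothetical $20,000 holiday budget.
plan = split_holiday_budget(20_000)
for bucket, amount in plan.items():
    print(f"{bucket}: ${amount:,.2f}")
```

Swap in your own total to get a starting point, then adjust the buckets as your campaign data comes in.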

Click here for more tips on how to prepare for peak holiday season shopping now.

Weekly Homework

- A lot of news is coming out of the Google I/O conference, including the new search experience and the new Universal Cart. Stay up to date on all new tools and announcements so you can adjust your strategies accordingly.

- Read through Google’s updated documentation on AIO, GEO, and SEO.

- Download Meta’s report on social media marketing and use its insights to tweak your strategy.

- Check out the new YouTube creator and search tools to see how they could impact your content.

- Use Pinterest’s help to get started on your holiday marketing plan.

Digital Marketing News 5/9/2026 to 5/15/2026

This week: GA4 adds AI assistant tracking, Google drops FAQ rich results, and Gemini-powered Google Ads dashboards bring real-time AI reporting to advertisers. Plus, we cover why “vibe-coded” websites could quietly tank your SEO.

Here's what happened this week in digital marketing:

1. GA4 Just Added AI Assistant Traffic Tracking for ChatGPT, Gemini & Claude

Google Analytics 4 just introduced a new way to track traffic from AI assistants like ChatGPT, Gemini, and Claude.

GA4 now includes a dedicated “AI Assistant” channel in Default Channel Group reports, helping marketers measure how generative AI platforms are driving website traffic.

Google wrote, "Google Analytics now provides a dedicated way to measure and analyze traffic originating from popular AI assistants."

The update includes the following changes to your traffic source dimensions:

- Medium: A new "ai-assistant" value is automatically assigned when the referrer matches a recognized AI Assistant

- Channel Group: These visits are categorized under the "AI Assistant" channel

- Campaign: Traffic from these sources will be identified with the "(ai-assistant)" campaign name

This gives businesses clearer visibility into AI-driven traffic and how it compares to channels like organic search.

Key Takeaway: AI platforms are becoming an important discovery channel. With GA4 now tracking AI assistant traffic directly, marketers can better measure the impact of AI on search, content, and user acquisition.
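If you export your traffic data, the new "ai-assistant" medium makes it simple to work out how much of your traffic comes from AI assistants. The sketch below is only an illustration: the rows and the field names ("medium", "sessions") are made-up assumptions, not the actual GA4 export format.

```python
from collections import defaultdict

# Hypothetical rows, e.g. from a report export; field names are assumptions.
rows = [
    {"medium": "organic", "sessions": 1200},
    {"medium": "ai-assistant", "sessions": 90},
    {"medium": "cpc", "sessions": 400},
    {"medium": "ai-assistant", "sessions": 60},
    {"medium": "referral", "sessions": 250},
]


def sessions_by_medium(rows):
    """Sum sessions for each medium value."""
    totals = defaultdict(int)
    for row in rows:
        totals[row["medium"]] += row["sessions"]
    return dict(totals)


def ai_assistant_share(rows):
    """Return the fraction of all sessions whose medium is 'ai-assistant'."""
    totals = sessions_by_medium(rows)
    all_sessions = sum(totals.values())
    if all_sessions == 0:
        return 0.0
    return totals.get("ai-assistant", 0) / all_sessions


share = ai_assistant_share(rows)
print(f"AI assistant share of sessions: {share:.1%}")
```

Tracking this share week over week is one way to compare AI-driven traffic against organic search.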

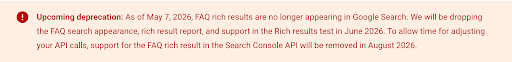

2. FAQ Rich Results Are Dropped From Search

Google announced this week that it has removed FAQ rich results from Search.

Google didn't explain this change, but it shouldn’t come as much of a surprise, given that it had already limited them on most sites.

The search engine will deprecate the rich results in three phases:

- May 7, 2026: FAQ rich results stop appearing in search results

- June 2026: The FAQ search appearance filter, rich result report, and Rich Results Test support will be removed

- August 2026: FAQ rich results will be removed from the Search Console API

Next Steps: If you are using FAQ structured data, you don’t have to rush to remove it. Although it won’t help you with Google Search, it can sometimes be helpful for AI systems.

3. Vibe Coding Misses SEO Basics, Says Google

Thinking of building your website with an AI tool? You might want to pause.

In a recent episode of the Search Off the Record podcast, Google’s John Mueller and Martin Splitt said that building a site with AI, or “vibe coding,” is similar to working with a developer who doesn’t specialize in search.

While these sites might look cool, AI won’t set up your canonicals, sitemaps, or robots.txt unless you specifically tell it to. Since most people who don’t specialize in search aren’t familiar with these elements, they often get left out.

Leaving those SEO features off makes it harder for Google and other search engines to index and rank your site.

Bottom Line: AI is only as good as the prompts it receives. If you aren’t an SEO expert, skip vibe coding and work with a knowledgeable web developer.
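If you already have a vibe-coded site, a rough self-check can tell you whether the basics mentioned above are present. This is a minimal sketch, not an SEO audit: the function names and sample markup are hypothetical, and it only looks for a canonical link tag in a page's HTML and Sitemap lines in a robots.txt file that you paste in as text.

```python
from html.parser import HTMLParser


class CanonicalFinder(HTMLParser):
    """Records the href of the first-seen <link rel="canonical"> tag (last one wins)."""

    def __init__(self):
        super().__init__()
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        attr_map = dict(attrs)
        rel = (attr_map.get("rel") or "").lower().split()
        if tag == "link" and "canonical" in rel:
            self.canonical = attr_map.get("href")


def find_canonical(html: str):
    """Return the canonical URL declared in the HTML, or None if missing."""
    finder = CanonicalFinder()
    finder.feed(html)
    return finder.canonical


def sitemap_urls(robots_txt: str):
    """Return every URL listed on a 'Sitemap:' line of a robots.txt file."""
    urls = []
    for line in robots_txt.splitlines():
        key, _, value = line.partition(":")
        if key.strip().lower() == "sitemap" and value.strip():
            urls.append(value.strip())
    return urls


# Made-up sample content for illustration.
page = (
    '<html><head><title>Shop</title>'
    '<link rel="canonical" href="https://example.com/shop">'
    '</head><body></body></html>'
)
robots = "User-agent: *\nAllow: /\nSitemap: https://example.com/sitemap.xml\n"

print("Canonical:", find_canonical(page))
print("Sitemaps:", sitemap_urls(robots))
```

If either check comes back empty on your own pages, that is a good item to bring to your developer.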

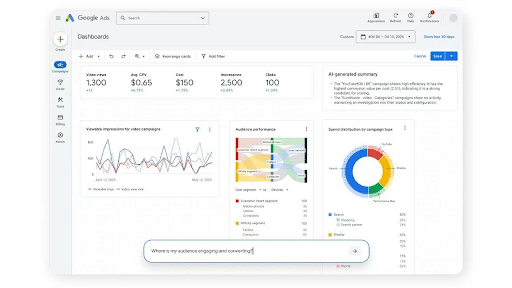

4. Gemini-Powered Dashboards Provide Real-Time Insights for Google Ads

This week, Google announced that it’s bringing Gemini to the Google Ads dashboard.

Previously, users would have to manually set up and navigate their data reports. But now, using Gemini AI, advertisers can enter a prompt and receive up-to-date, real-time information in charts, graphs, and tables.

Google’s goal is to make data analysis more interactive, visual, and accessible. More information will be shared on May 20th at Google Marketing Live 2026.

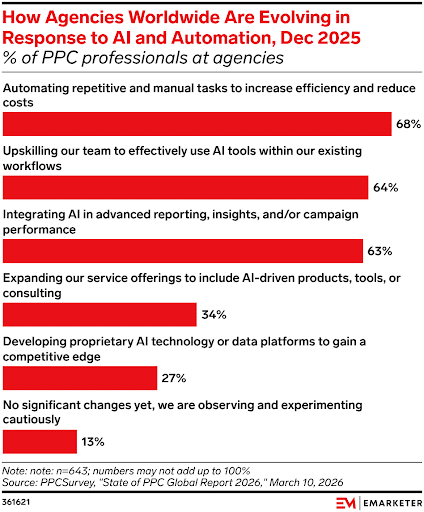

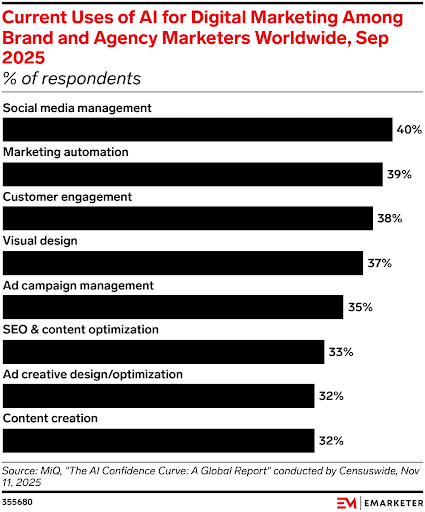

5. 68% of PPC Professionals Use AI to Automate Repetitive Tasks

Ever wonder how your competition and colleagues are using AI? This new report from eMarketer will tell you!

The report asked PPC professionals at agencies around the world how they’re using AI in their work. Here’s what they found out:

- Automate repetitive tasks: 68%

- Upskilling team members to improve existing workflows: 64%

- Integrating it into advanced reporting, insights, and campaign performance: 63%

- Expanding services to offer AI-driven options: 34%

- Developing proprietary AI technology of their own: 27%

- No significant changes: 13%

How is your agency using AI?

6. Gmail & Yahoo Shift to "Interaction-Based" Inbox Placement

Google and Yahoo have officially moved beyond simple authentication as the primary deliverability factor. Reputation is now dictated by Positive Interaction Signals.

The Change: Inboxes now prioritize brands based on "Positive Reply Rates" and "Time-to-Open."

The Impact: Brands with high "skim-and-bin" rates (deleting without opening) are seeing a 20% decrease in primary inbox placement.

The Source: Google Sender Guidelines | Yahoo Sender Best Practices

The Report: Validity 2026 Email Deliverability Benchmark Report

7. Apple Mail "Intelligence" Now Summarizing Promotional Emails

Apple’s 2026 OS updates have replaced traditional snippet text with AI-generated summaries in the "Promotions" and "Updates" tabs.

- The Update: Instead of your subject line, users see a 10-word AI summary of the email's body.

- Optimization: Front-load your "Offer Hook" in the first 50 words to ensure the AI highlights your discount rather than generic introductory text.

- The Source: Apple Support: Use Apple Intelligence in Mail

- The Report: Litmus: The State of Email 2026 Edition

8. DMARC Enforcement Becomes Mandatory Under PCI DSS v4.0

DMARC is no longer a suggestion; it is now a security requirement for any organization handling digital payments or sensitive customer data.

- The Change: Under PCI DSS v4.0, failing to have a p=quarantine or p=reject policy can lead to transactional emails being blocked at the gateway level.

- The Impact: Organizations that haven't updated their DMARC policy from "p=none" (monitoring) are experiencing significant delivery failures in Outlook and Gmail.

- The Source: PCI Security Standards Council (PCI DSS v4.0)

- The Report: Mimecast: 2026 Email Security Global Trends
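A DMARC policy lives in a DNS TXT record at _dmarc.yourdomain.com, and the p= tag is what separates monitoring from enforcement. The sketch below assumes you have already copied that record's text (it does not perform a DNS lookup), and the function names are hypothetical.

```python
def parse_dmarc(record: str) -> dict[str, str]:
    """Split a DMARC TXT record such as 'v=DMARC1; p=none' into its tags."""
    tags = {}
    for part in record.split(";"):
        name, sep, value = part.partition("=")
        if sep:
            tags[name.strip().lower()] = value.strip()
    return tags


def is_enforcing(record: str) -> bool:
    """True when the record sets p=quarantine or p=reject, False for p=none."""
    tags = parse_dmarc(record)
    if tags.get("v", "").upper() != "DMARC1":
        return False
    return tags.get("p", "").lower() in {"quarantine", "reject"}


# Example records; the mailbox address is a placeholder.
monitoring_only = "v=DMARC1; p=none; rua=mailto:dmarc@example.com"
enforced = "v=DMARC1; p=reject; rua=mailto:dmarc@example.com"

print(is_enforcing(monitoring_only))
print(is_enforcing(enforced))
```

A record that fails this check is still in monitoring mode and is the kind of policy the item above warns about.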

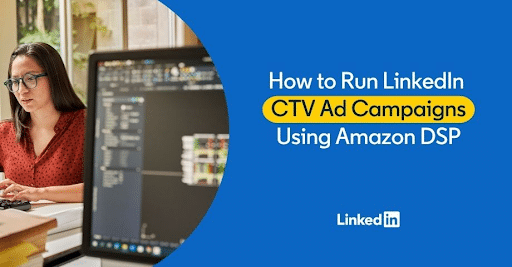

9. LinkedIn and Amazon Ads Form a Partnership

Thanks to Amazon Ads, LinkedIn advertisers can now reach TV viewers.

According to Amazon, “For marketers already using Amazon DSP to plan, buy, and optimize full-funnel campaigns across streaming TV, display, online video, and audio, the solution adds previously unavailable LinkedIn targeting capabilities for CTV campaigns.”

In the announcement, LinkedIn shared, “LinkedIn’s CTV Ads reach B2B audiences 2.2x more effectively than other CTV platforms and 4.3x more effectively than linear TV.”

If you’ve been looking for a new way to reach B2B audiences, this partnership could be helpful.

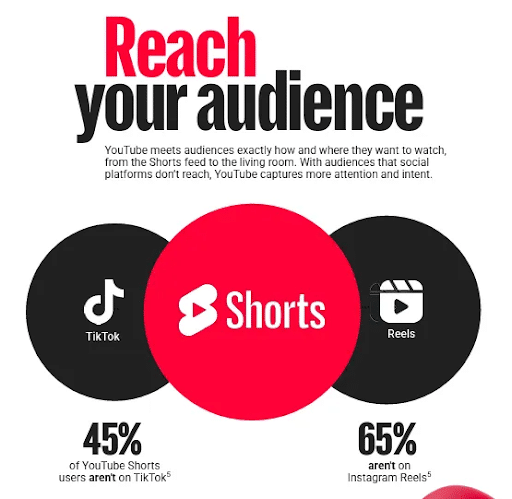

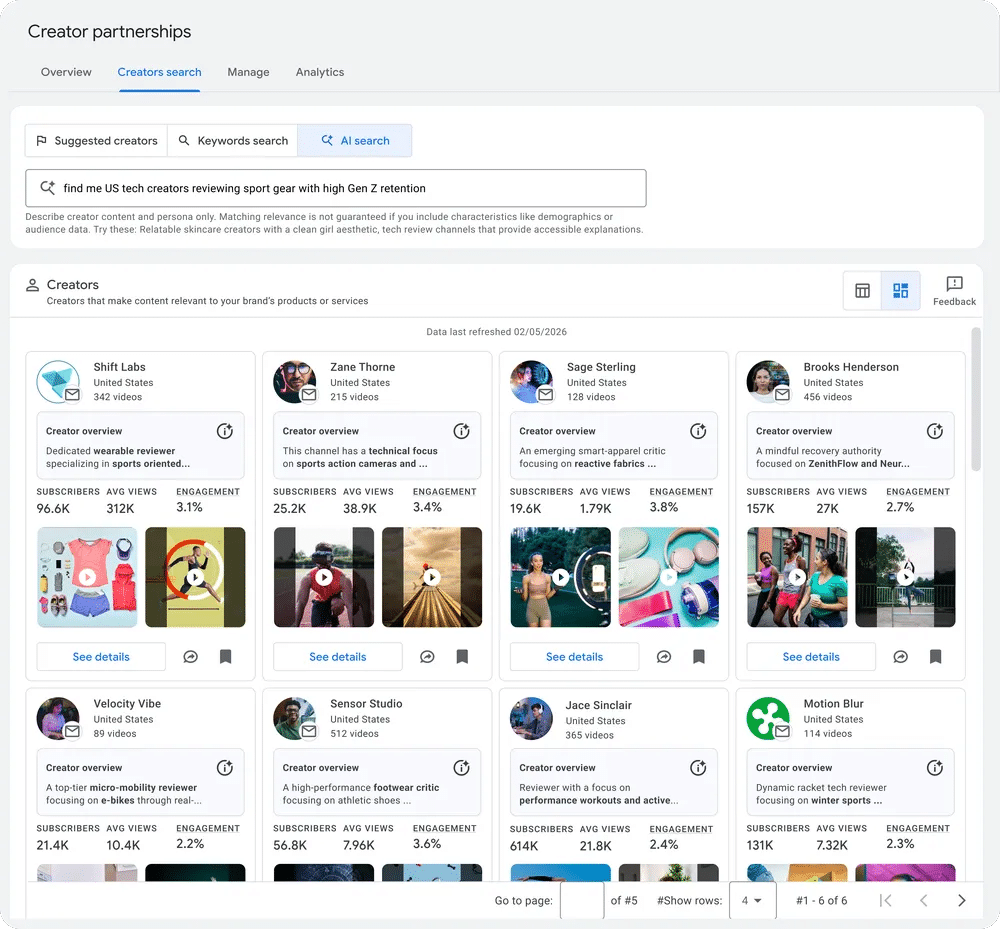

10. YouTube Releases New Playbook for Brand Growth

If you’re looking to grow on YouTube, this is news you’ll want to hear. The video content platform recently published a new playbook to help businesses better understand the fundamentals of the YouTube creator marketplace and find success on the platform.

The guide mentions that:

- 78% of viewers said YouTube had the most trusted creators for product recommendations

- 40% of YouTube views happen more than 30 days after the video is posted

- The platform drives 86% higher long-term ROAS than paid social

- YouTube Shorts increases long-term brand growth by 21%

- Creator partnership boosts on Demand Gen campaigns deliver an average 20% increase in conversion lift

The playbook also explains how brands and creators can use the platform to find success.

If you’ve been thinking of jumping into YouTube or want to refresh your strategy, click here to download this valuable playbook.

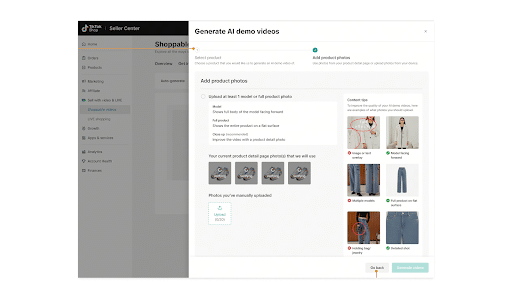

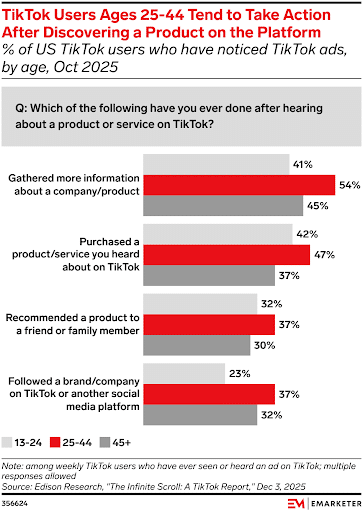

11. TikTok Shoppers Love Affiliate Links

TikTok shopping is on the rise, and the app is telling brands to meet shoppers where they are – with affiliate links.

In an interview with Glossy Magazine, Donte Murray, TikTok’s director of beauty, wellness, and personal care, said that, “77% of interested shoppers on TikTok search for more info about a relevant product after seeing affiliate content in stream.” He encourages brands to combine sponsored and affiliate content to meet shoppers exactly where they’re searching.

He also shared that repeat purchases are often driven by tutorials and tip videos. So, if you want to grow your ecommerce business using TikTok Shop, offer affiliate links and post videos showing consumers how to use your products.

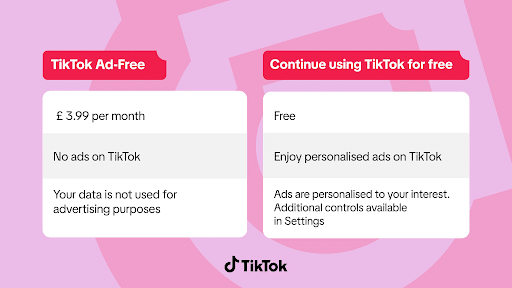

12. TikTok Launches Ad-Free Subscriptions in the UK

Tired of all the TikTok ads? If you’re in the UK, you can purchase a subscription to remove them from your feed.

In the announcement, Kris Boger, TikTok’s UK Managing Director, was quoted as saying, "Choice for our community and growth for UK businesses go hand in hand on TikTok. Advertising on our platform is already helping thousands of British businesses reach new customers, increase sales and create jobs, while our new ad-free option gives people greater control over their experience. Together, this ensures we continue to deliver real economic impact while giving our community the flexibility to engage with TikTok in the way that suits them."

This is currently only available in the UK, but it’ll be interesting to see how it affects the success of brands that have built up a following on the app. It’ll also be interesting to see if this subscription model ever makes its way across the pond to the United States.

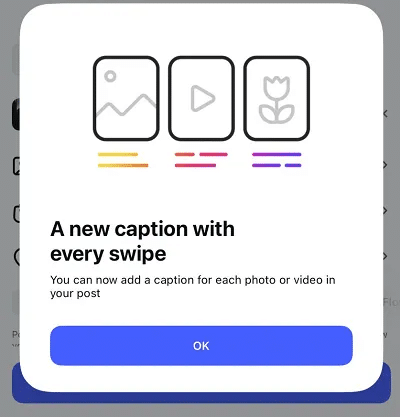

13. Instagram Tests New Captions with Each Swipe on a Carousel

Instagram is testing a new feature: new captions for each post within a carousel.

Carousels are already among the most valuable posting options on Instagram, and now creators and brands can add even more content to them.

With this new upgrade, users can add a new caption with every swipe, switching up the copy for each slide. This feature isn’t available for everyone, though. It’s still in beta, with no word on when, or if, it’ll roll out to every user in the future.

14. Meta Introduces AI Chatbot to Threads

AI chatbots are coming to Threads. Meta just released its Muse Spark AI model for a trial rollout in Argentina, Malaysia, Mexico, Saudi Arabia, and Singapore.

According to Meta, the AI content will reach users' feeds one way or another, so why not have it done via Meta AI?

Users can have conversations with Muse Spark, talk to it via Voice Chat, and get help finding answers to their questions. They can also use image search to get more information on what they’re seeing in real time.

Meta has invested hundreds of billions of dollars into this project, but there is no word on when, or if, it’ll be released in the United States.

Weekly Homework

- Consider whether you want to keep your FAQ Rich Results schema on your site or remove it.

- If you have a vibe-coded website, go back and add in the important SEO features.

- Check out the new Gemini-powered dashboard for your Google Ads.

- Download the YouTube playbook to see how you can find success on the video platform.

- If you’re trying to grow your ecommerce business on TikTok, consider offering affiliate codes and adding more tutorial videos to your content calendar.

- Trying to reach B2B audiences? Look into the new partnership between LinkedIn and Amazon Ads.

Digital Marketing News 5/2/2026 to 5/8/2026

This week: Google adds more links to AI search, ChatGPT ads show strong early engagement, and Etsy launches shopping directly inside ChatGPT.

Here's what happened this week in digital marketing:

1. Google Shares Insights on New Wave of Search Behaviors

Google’s Martin Splitt and Nikola Todorovic recently discussed a new wave of search behaviors – and what marketers can do to capitalize on it.

When discussing AI search, Splitt and Todorovic shared key insights into search behavior and how it’s changing. The most important insights?

- Search queries are becoming longer and more detailed

- Users are doing new things with search, instead of simply searching for keywords

The biggest takeaways from their discussion included:

- AI search is not new, but it is changing how people use Google.

- Keywords are not dead, but instead should be used within larger prompts.

- Classic Search still matters because it’s running in the background of AI Search.

- Users are getting more complex with their searches with longer, more detailed questions

- Content has to work for classic search, while remaining useful for more complex search behavior.

You can listen to the full discussion here.

2. More Links Are Added to Google’s AI Search

New updates are coming to Google’s AI Mode and AI Overviews – and it’s great news for marketers and brands.

Google is rolling out five AI updates, including changes to how links appear in responses. The updates include:

- Labeling links from users’ news subscriptions

- Suggesting links to articles at the end of AI responses

- Including previews from online discussions, social media, and other firsthand sources

- Inserting more inline links with responses

- Adding link hover previews on desktop

While we aren’t sure whether this will increase organic traffic, it’s nice that Google is linking back to its sources, giving brands an opportunity to grab some clicks from their content.

3. Google Wants Developers to Think About AI Agents

Google released new guidance to web developers this week: build for AI agents, too.

In the blog, Google shared that AI agents interpret websites three different ways:

- Screenshots: to identify elements visually

- Raw HTML: to give agents the DOM structure and hierarchy

- Accessibility Tree: the “high-fidelity map” of your interactive elements

To help AI agents better understand your site, Google suggests using agent-friendly semantic HTML elements like <button> and <a> instead of styled <div> elements. It also recommends linking <label> tags to inputs with the for attribute and setting cursor: pointer on clickable elements.

To make sure your website is as agent-friendly as possible, read the entire blog here.
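As a rough way to spot the patterns Google describes, the sketch below scans a snippet of HTML for clickable <div> or <span> elements and for inputs that no <label for="..."> points to. The class name and sample markup are hypothetical, and the check is deliberately simple: it does not, for example, recognize inputs that are wrapped inside a <label>.

```python
from html.parser import HTMLParser


class AgentFriendlinessAudit(HTMLParser):
    """Counts clickable <div>/<span> elements and collects input/label pairings."""

    def __init__(self):
        super().__init__()
        self.clickable_divs = 0
        self.label_targets = set()
        self.input_ids = []  # id (or None) of each visible <input>

    def handle_starttag(self, tag, attrs):
        attr_map = dict(attrs)
        if tag in ("div", "span") and "onclick" in attr_map:
            # A styled container acting as a button instead of <button> or <a>.
            self.clickable_divs += 1
        elif tag == "label" and attr_map.get("for"):
            self.label_targets.add(attr_map["for"])
        elif tag == "input" and attr_map.get("type") != "hidden":
            self.input_ids.append(attr_map.get("id"))

    def unlabeled_inputs(self):
        """Return ids of inputs that no <label for=...> refers to."""
        return [i for i in self.input_ids if i not in self.label_targets]


# Made-up markup for illustration.
sample = """
<div onclick="buy()">Buy now</div>
<button type="submit">Buy now</button>
<label for="email">Email</label><input id="email" type="email">
<input id="phone" type="tel">
"""

audit = AgentFriendlinessAudit()
audit.feed(sample)
print("Clickable divs/spans:", audit.clickable_divs)
print("Inputs without a label:", audit.unlabeled_inputs())
```

Anything this flags is worth passing along to your web development team for a closer look.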

4. Some WordPress Sites Are Unintentionally Blocking AI Bots

If your SEO data looks good but you still aren’t seeing much movement with AI bots, it could be because your site is unintentionally blocking the crawlers.

In a new study, one researcher found that, while Google AI Mode and Copilot were monitoring and crawling their site, big names like Claude and Meta AI were not. Why? Because the site itself was blocking them.

If you’re blocking crawlers like Googlebot, GPTBot, and others, you’re essentially removing your ability to end up in AI search results. For the best chance, make sure your pages are available to these bots.

If you aren’t sure whether your site is blocked, run a WP Engine diagnostic. It only takes a few minutes and it could reveal the cause of issues you’ve been dealing with for months.

Click here for full instructions on performing the diagnostic test and reading its data.
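Separately from that diagnostic, you can do a quick local check of your robots.txt rules with Python's built-in urllib.robotparser. This is a minimal sketch: the crawler list is illustrative rather than exhaustive, the sample robots.txt is made up, and the check only covers robots.txt rules, not blocks applied by a firewall, plugin, or host.

```python
from urllib.robotparser import RobotFileParser

# Illustrative user-agent tokens; extend with the crawlers you care about.
AI_CRAWLERS = ("GPTBot", "ClaudeBot", "PerplexityBot", "Googlebot")


def blocked_crawlers(robots_txt: str, url: str, agents=AI_CRAWLERS):
    """Return the agents that the given robots.txt text disallows for url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in agents if not parser.can_fetch(agent, url)]


# Made-up robots.txt that blocks two AI crawlers and allows everything else.
robots_txt = """\
User-agent: GPTBot
Disallow: /

User-agent: ClaudeBot
Disallow: /

User-agent: *
Allow: /
"""

print(blocked_crawlers(robots_txt, "https://example.com/blog/"))
```

Paste in the contents of your own robots.txt and a URL from your site to see which of the listed crawlers are turned away.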

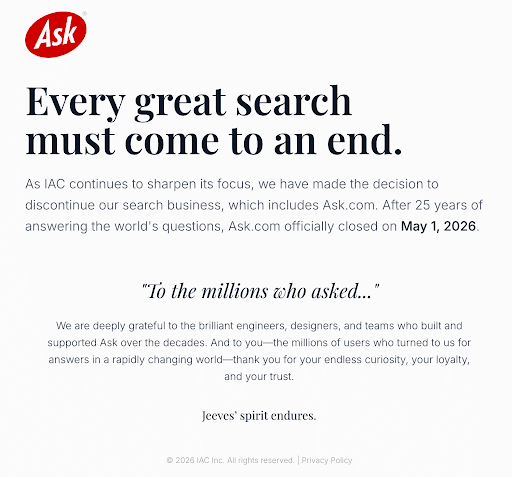

5. Ask.com Officially Shuts Down After Nearly 30 Years

Ask.com, formerly known as Ask Jeeves, officially shut down its search business on May 1, 2026, nearly 30 years after launching in 1996, before Google existed.

Ask Jeeves was one of the internet’s earliest “answer engines,” built around conversational questions long before AI search became mainstream.

Its shutdown highlights how difficult the search market has become as Google and AI-powered search experiences continue dominating the space.

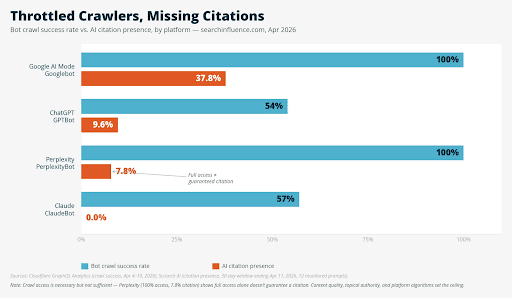

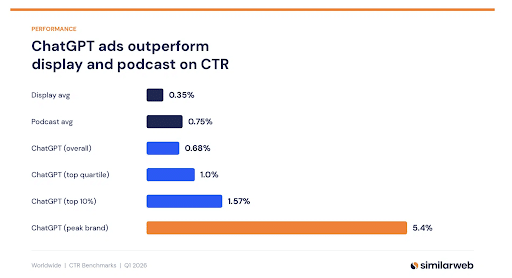

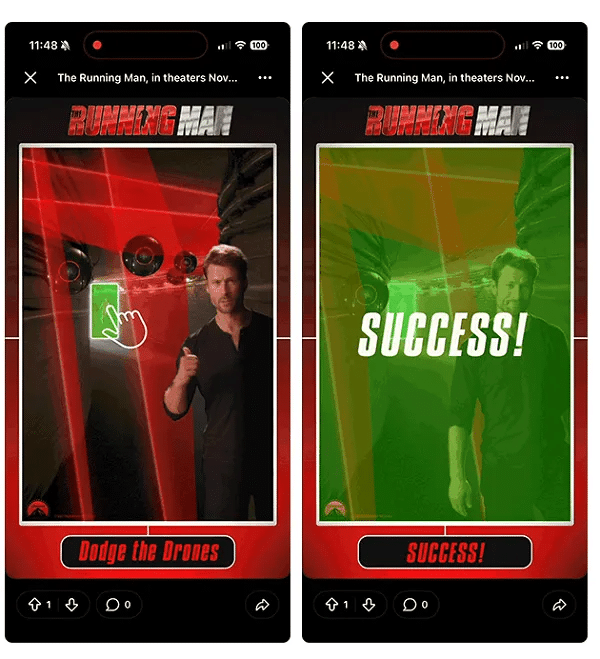

6. Early ChatGPT Ad Data Shows Strong Engagement, but Scale Remains the Big Question

Early SimilarWeb data suggests ads inside ChatGPT conversations are generating higher engagement rates than traditional Display and Podcast ads, especially for high-intent searches like Mother’s Day.

Unlike traditional search ads, these placements appear naturally within conversational responses, making them feel more contextual and less disruptive.

Mother’s Day-related prompts reportedly triggered ads about three times more often than average, with brands like Etsy and Nordstrom already seeing strong visibility.

However, inventory is still limited and testing remains small-scale, making it too early to know whether current CTRs will hold long term.

High engagement also does not guarantee strong performance. Advertisers still need more data around conversions, scalability, and pricing before ChatGPT ads can seriously compete with platforms like Google Ads or Meta.

Key Takeaway: ChatGPT ads are showing promising early engagement, but marketers should treat the platform as a test channel until larger-scale performance data becomes available.

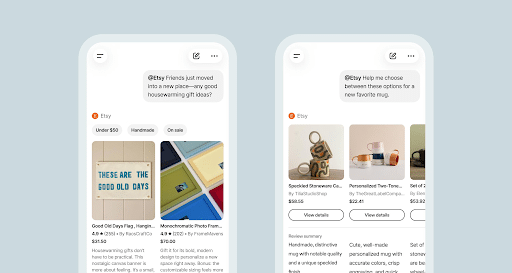

7. Etsy Launches ChatGPT App

Etsy shoppers will now be able to shop their favorite shops from within ChatGPT. Thanks to a native app within the AI bot, Etsy lovers can now access over 100 million listings and products.

In the announcement, Etsy shared that “[t]he Etsy app in ChatGPT is now live in beta, allowing users to search and compare Etsy items directly in conversation.”

Users simply need to tag @Etsy in their prompt, and the Etsy app will provide listings relevant to it.

This differs from ChatGPT Instant Checkout, and Etsy is the first ecommerce website to offer it.

Why We Care: If this beta test is successful, we could see more and more brands wanting to create a special app within ChatGPT, too. It could be a whole new arm of digital marketing and ecommerce.

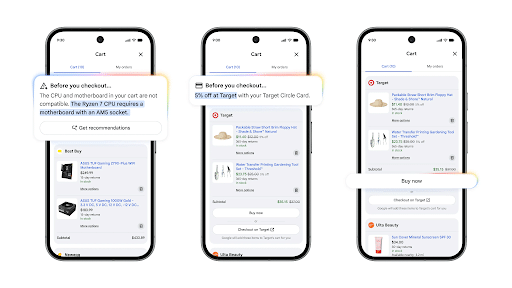

8. Google Expands AI Bidding & Budgeting Tools to Reduce Manual Campaign Work

Google Ads is rolling out new AI-powered bidding and budgeting features designed to help advertisers capture more demand with less manual work.

The updates include:

- Journey-aware Bidding, which uses more customer journey data for optimization

- Smart Bidding Exploration, expanding beyond Search after reportedly driving a 27% increase in unique converting users

- Demand-led budget pacing, which automatically shifts spend toward higher-demand periods

Google says advertisers using campaign total budgets have already seen a 66% reduction in manual budget adjustments.

The goal is to help campaigns respond more dynamically to changing demand while reducing the need for constant bid and budget management.

Key Takeaway: Google is continuing to push advertisers toward AI-driven campaign management, with automation taking a larger role in bidding, budgeting, and demand discovery.

9. Google’s March Core Update Shifted Traffic and Visibility

Now that the dust is settling from Google’s March Core Update, data is revealing who was the most affected – YouTube, Reddit, and aggregators.

The analysis from Amsive found that:

- YouTube lost the most – 567 visibility points

- Reddit lost 64 points

- Instagram lost 48 points

- X lost 46 points

- Google seems to favor authoritative sources, especially in the travel, jobs, and health sectors

Although some sites, like Reddit and Indeed, saw a big bounce back, not all sites have recovered.

Keep an eye on your own analytics to see if your site has been affected by the big core update.

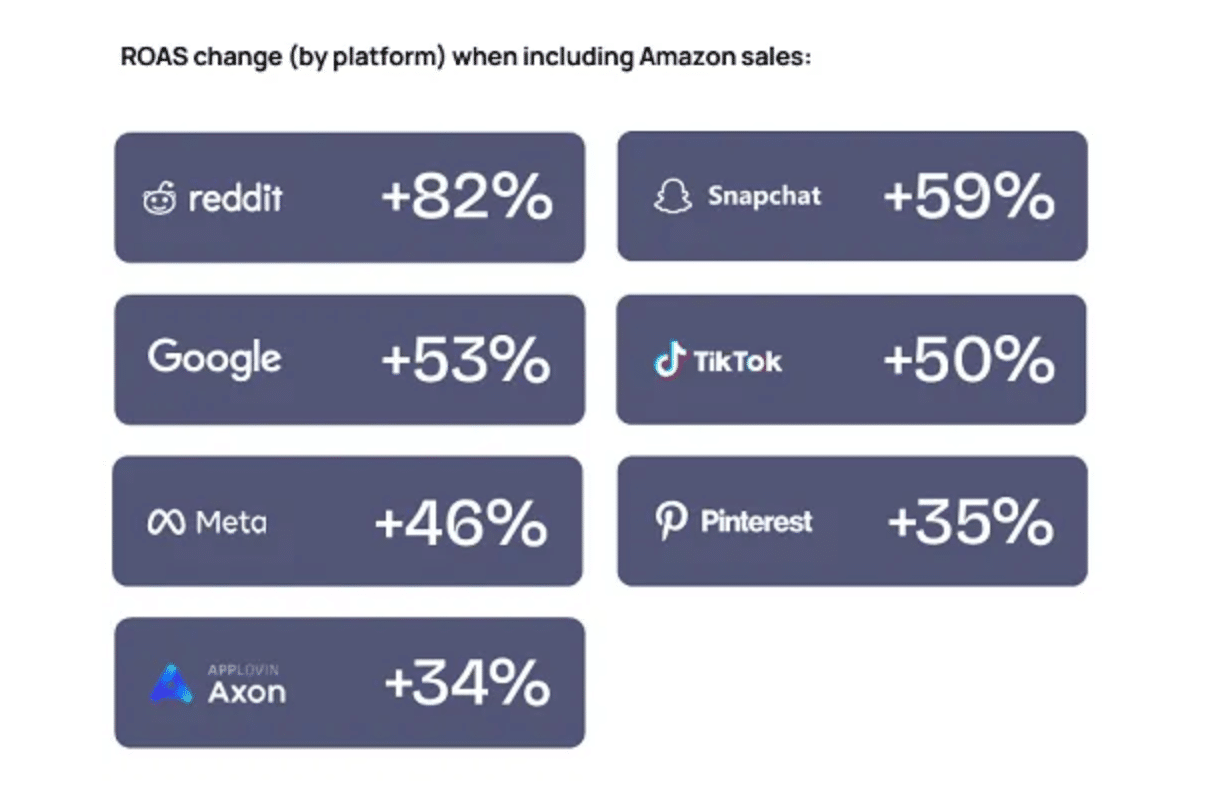

10. Reddit Wants to Help Grow Your Business

Reddit is quickly becoming a major player in the PPC scene, and this new report will help you capitalize on that growing audience.

After announcing that its ad revenue increased 74% YoY, Reddit released a 13-page guide designed to help brands connect with their preferred audience on the platform. Highlights include:

- The 5 types of people you’ll find on Reddit

- Trending topics and how they’re being discussed in different industries

- Insights on how business owners found their communities

- Case studies on how businesses converted Reddit fans into paying customers

- Ideas on how to create ads that Reddit users will enjoy

This report is a wealth of information for businesses already advertising on Reddit or even considering taking the plunge. You can download the full report for free here.

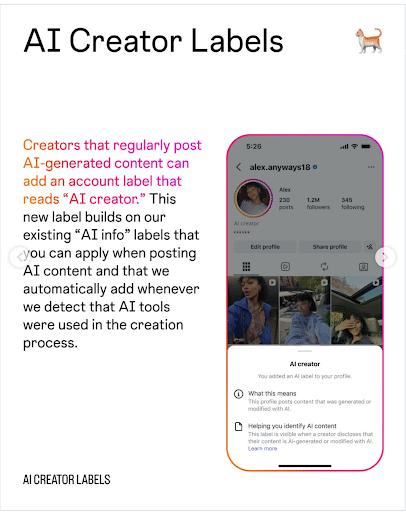

11. Instagram Starts Labeling AI-Generated Content

Instagram wants you to be able to trust what you see on its platform. That’s why it introduced AI Creator labels this week.

In the announcement, Instagram explained: “This new label builds on our existing ‘AI info’ labels that you can apply when posting AI content and that we automatically add whenever we detect that AI tools were used in the creation process. … In instances when content is labeled as ‘AI info’ and posted by a labeled AI creator, the ‘AI info’ label would appear on the content instead of the ‘AI creator’ label.”

This isn’t a push to stop creators from using AI. In fact, Meta is probably one of AI’s biggest fans. But it is a push to stop the erosion of trust caused by creators posting AI-generated content without telling people it’s AI.

Weekly Homework

- Explore Etsy’s new in-ChatGPT app to see if it could work for you.

- Audit your SEO strategy to ensure that you’re doing the most you can for Bing searchers.

- Talk to your web development team to ensure your site is built for both humans and AI agents.

- If you have a WordPress site, run a diagnostic test to ensure that you aren’t unintentionally blocking any crawlers.

- Download the Reddit report to learn how to advertise your business effectively on the open-forum platform.

- If you are in shopping or travel, explore the ways that Google Ads’ AI Max could improve your search ads.

Digital Marketing News 4/25/2026 to 5/1/2026

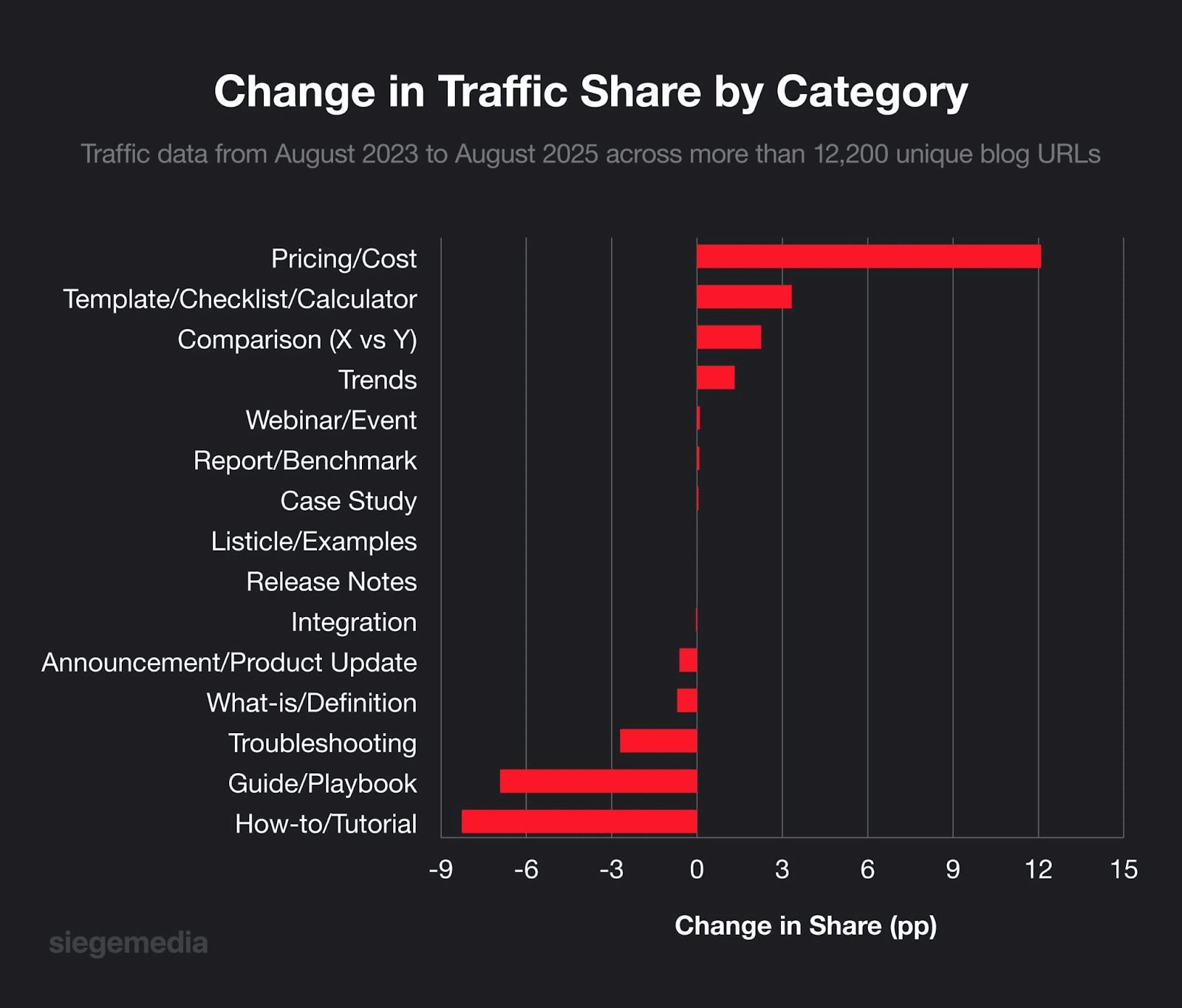

This week: Adobe acquires Semrush, reshaping search visibility; AI Overviews cut organic clicks by 38%; and AI Overview CTR drops despite rising impressions.

Here's what happened this week in digital marketing:

1. Adobe Completes Semrush Acquisition, Signaling a New Era for Search and AI Visibility

Adobe has officially completed its acquisition of Semrush, marking a major shift in how brands approach search, content, and digital visibility.

The move brings together Semrush’s strength in search intelligence, like keyword data, competitive insights, and visibility tracking, with Adobe’s broader ecosystem of content, customer experience, and analytics tools. The bigger implication: discoverability is no longer a standalone function; it’s becoming fully integrated into the entire marketing lifecycle.

As AI-driven search and conversational interfaces reshape how users find and evaluate brands, the traditional path from search to website is changing. In many cases, users are getting answers, comparisons, and recommendations before ever clicking through, making visibility across AI platforms just as important as rankings in traditional search.

This acquisition reflects a broader industry shift toward what some are calling a multi-layered approach to visibility, combining traditional SEO with emerging strategies like generative engine optimization (GEO) and AI-driven discovery.

Key Takeaway: Search is no longer just about rankings, it’s about visibility across an entire ecosystem. Brands that connect content, data, and AI-driven discovery will be better positioned to win in the next phase of digital marketing.

2. Study Finds Google AI Overviews Reduce Organic Traffic By 38%

New research is shedding light on the real impact of Google’s AI Overviews, and the results could be a concern for marketers and publishers.

A randomized field experiment found that when AI Overviews appear in search results, organic clicks to external websites drop by 38%. At the same time, zero-click searches surged, with users increasingly getting answers directly on the results page instead of visiting other sites.

What’s particularly notable is that removing AI Overviews didn’t significantly change user satisfaction or perceived quality of results. In other words, users felt they were getting the same value, even without clicking through.

This reinforces a growing trend: search is becoming more self-contained, with AI summarizing information and reducing the need for users to leave the platform.

Key Takeaway: As AI-driven search continues to rise, marketers must adapt to a world where visibility, not just clicks, matters, and where being included in AI summaries may become just as important as ranking on page one.

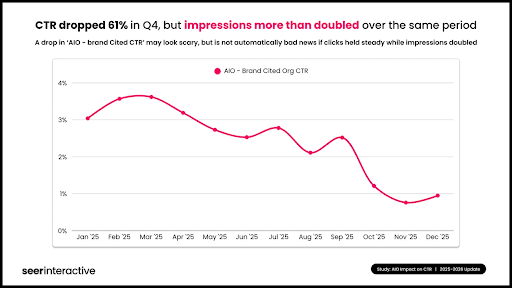

3. Brand-cited AI Overview CTR Fell 61% in Q4

According to a new report from Seer Interactive, brand-cited AI Overview CTR fell a whopping 61% from Q3 to Q4.

While clicks may have dropped, impressions more than doubled in the same category over the same time period. The numbers also revealed that:

- Being cited by AIO still delivers +120% for both paid and organic CTR

- There is a 6-7% advantage for a brand to be cited in an AIO for paid CTR

- If a brand is not cited, organic CTRs declined by 67%

The study also revealed that these are the types of queries most likely to return an AIO:

- Comparison (X vs Y): 95.4%

- Review Queries: 86.3%

- Question (Who/What/Where/How): 85.9%

- Price/Cost/Buy: 83.4%

You can learn more about AIOs and search queries by reading the entire report here.

4. Bing Hits 1 Billion Users as Microsoft Strengthens Search Growth

Microsoft has announced that Bing has surpassed 1 billion monthly active users for the first time, marking a major milestone in its ongoing push to grow its search ecosystem.

Alongside this growth, search advertising revenue increased by 12% year over year, continuing a streak of double-digit gains. Microsoft also pointed to steady expansion of its Edge browser, which has gained market share for 20 consecutive quarters, helping drive more users into the Bing ecosystem.

While Bing still holds a relatively small share of the global search market, the growth highlights increasing engagement and the impact of Microsoft’s broader strategy, including AI integration and default search positioning within Edge.

Key Takeaway: Bing’s growth signals expanding opportunities beyond Google. Marketers should consider diversifying their search strategies as alternative platforms continue to gain traction.

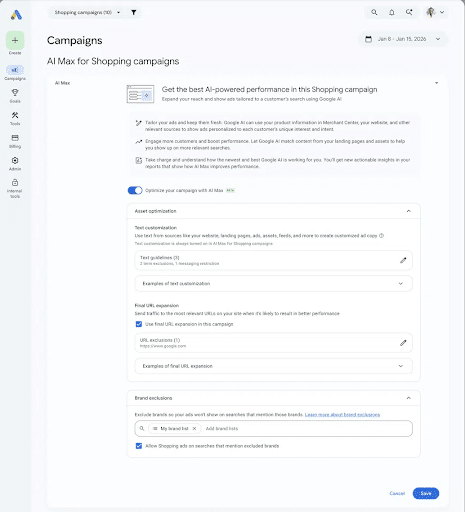

5. Google Expands AI Max Across Ads, Balancing Automation with Control

Google is continuing its push into AI-driven advertising by expanding AI Max across more campaign types, while introducing new tools to give advertisers greater control over how automation is applied.

AI Max is now rolling out beyond Search into Shopping and travel campaigns, allowing brands to reach users across more touchpoints, including conversational and exploratory queries that traditional keyword strategies often miss. For retailers, this means more adaptive ads powered by product data, while travel advertisers benefit from a more unified campaign structure.

At the same time, Google is addressing a key concern around automation: control. New features like “AI Brief” allow advertisers to guide messaging and targeting using natural language inputs, while updates to URL selection and compliance tools ensure campaigns remain aligned with brand and regulatory requirements.

Key Takeaway: As Google leans further into AI-driven advertising, success will depend on finding the right balance, leveraging automation for scale while maintaining enough control to protect brand messaging and performance.

6. New First-Party Shopper Data Comes to Google

Google is expanding its partnership with Albertsons Media Collective to enhance first-party shopper data on its apps.

In the agreement, Albertsons, a large food and drug retailer, will integrate its first-party shopper data directly into its Google and YouTube campaigns.

According to Google, “Unifying brand and shopper marketing in Display & Video 360 helps brands like Keurig Dr Pepper seamlessly optimize every dollar, managing commerce media with the same accountability and performance as their broader marketing strategy. By connecting Albertsons Companies’ data with the reach of YouTube and intelligence of Google AI, brands can drive ROI across the entire shopper journey.”

Since Google and YouTube play such a large role in most customer journeys, access to better first-party consumer data could be the key to boosting retailers' bottom lines as they reach new audiences.

7. WooCommerce Stores Can Now Sell Via YouTube Videos

Google and WooCommerce have partnered to enable WooCommerce stores to sell products through YouTube videos.

In the announcement, WooCommerce was quoted as saying, “Google for WooCommerce makes it simple to reach 2.7 billion shoppers on YouTube and automate your creative with AI.

YouTube Shopping is now a direct sales channel for WooCommerce stores. As search and discovery undergo a massive evolution, brands need to show up where their audiences are researching and buying. YouTube is the world’s second-largest search engine and the largest platform for researching products via video.”

The partnership allows WooCommerce users to connect their store to their YouTube channel using an extension and tag products in their YouTube videos and Shorts. Once tagged, those products will appear as shoppable cards while viewers are watching the videos and in the Channel Shopping tab.

It adds YouTube to the long list of places WooCommerce users can sell their products, including Display Ads, Gmail, Discover, Search, and Google Maps.

The announcement also shared that users will be able to access AI-powered ad creative across all Google channels, as well as Performance Max for service businesses.

If you are a WooCommerce user, this new partnership is definitely worth checking out.

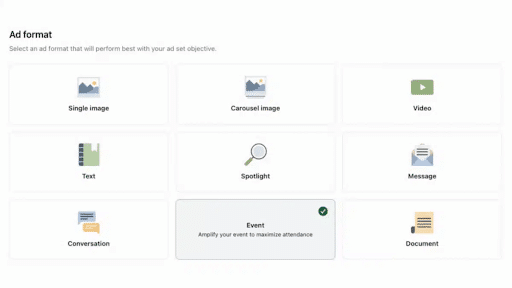

8. LinkedIn Opens Event Ads to External Platforms

LinkedIn is introducing Off-Platform Event Ads, giving marketers more flexibility to promote events while driving registrations directly to their own websites.

Instead of relying on LinkedIn’s native Event Pages, advertisers can now link Event Ads to external destinations, like webinar platforms, landing pages, or livestream sites, while still leveraging LinkedIn’s targeting capabilities. The result is a more streamlined, controlled user journey from discovery to registration.

This shift reflects a broader move toward giving marketers greater ownership over their data and conversion experience, without sacrificing the reach and precision of LinkedIn’s ad ecosystem.

Action Item: Test Off-Platform Event Ads to improve registration flow and data ownership, especially if you’re currently relying on third-party platforms or want more control over the end-to-end user experience.

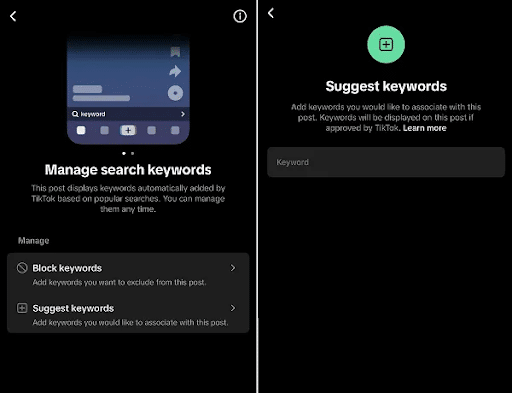

9. TikTok Creators Can Now Add Relevant Keywords to Metadata

TikTok now lets creators manually manage the keywords assigned to their videos, giving you more control over how your content gets discovered in search.

This update lets you suggest relevant terms or block those that don’t fit. For digital marketers, this is a low-key big deal: it's essentially SEO for TikTok, and it means you can actively guide the algorithm instead of just hoping it figures out what your content is about. Pair this with TikTok Trends to research what terms your audience is actually searching, and you've got a solid one-two punch for maximizing reach.

Just don't get too keyword-happy. TikTok still holds the final say on what sticks.

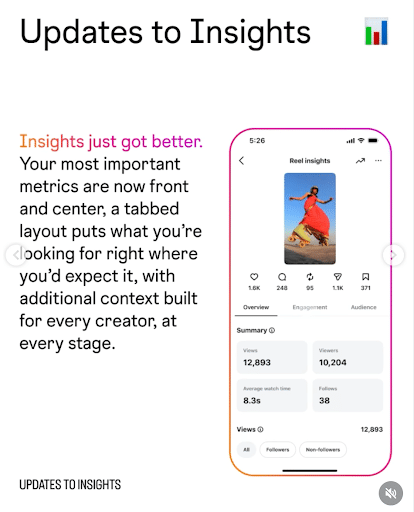

10. Instagram Improves Post and Reels Insights and Metrics

It’s been a busy week over at Meta. Instagram also announced some big updates to its content metrics, specifically for posts and Reels.

The update makes it easier for users to find key metrics, including engagement activity and audience demographics. It also adds more insights, including share and skip rate percentages, which have become increasingly important to brands and creators.

Unfortunately, the update does not include other important information, such as data on collab posts, trial reels, cross-posted content, and boosted content.

The update is Meta’s latest effort to keep creators and brands happy on the platform, which has seen a slight decline in the past few months.

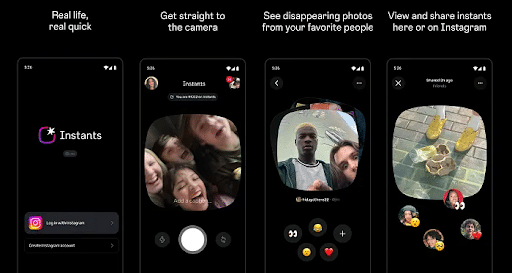

11. Meta Launches New Social Platform: Instants

There’s a new app in the Meta neighborhood: Instants.

The app, which was designed as a spin-off of Instagram, gives off Snapchat vibes. Users can share photos with friends that will quickly disappear. Those photos can also be shared directly to Instagram.

Instants isn’t the first time Meta has tried to replicate Snapchat. It follows a long line of other attempts, including Stories, which is now a major part of Instagram; Poke and Slingshot, which were both shut down in 2014; and Quick Updates on Facebook, which also had a very short shelf life.

Whether Instants becomes a big hit remains to be seen, but it’s still important for marketers to keep an eye on potential new platforms.

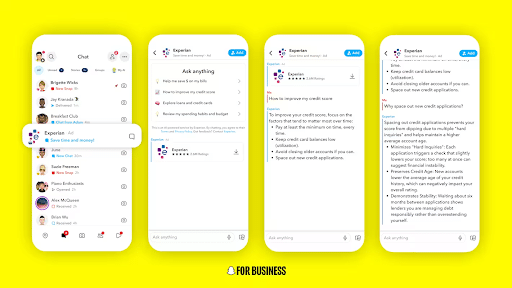

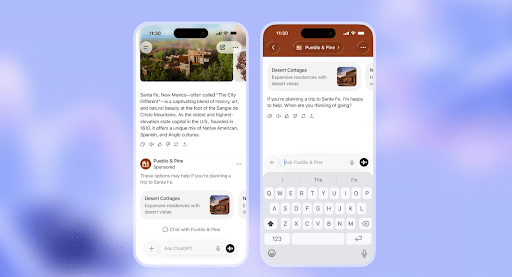

12. New Advertising Opportunity: AI Sponsored Snaps

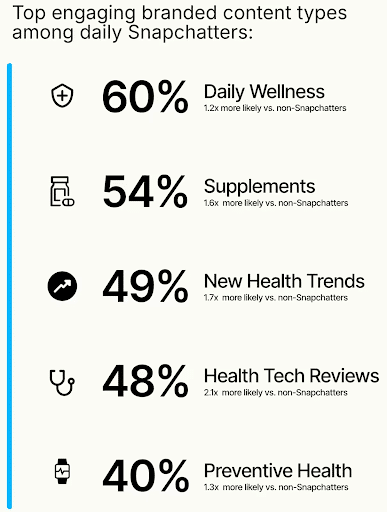

Snapchat launched a new kind of ad this week: AI Sponsored Snaps.

Building on the success of Sponsored Snaps, this new tool lets brands pair their ads with AI chatbots to create a more personalized connection with their audience.

The new chatbots will connect with consumers, driving full-funnel outcomes from the top of the discovery funnel through the end of the process. Snapchatters can use the AI bots to ask questions, get personalized recommendations and feedback, and explore what the brand has to offer.

The opportunity is kicking off with Experian and, if successful, will expand to other brands in the future.

13. “Ask YouTube”: Google’s Newest AI Experiment

Google is testing a new conversational search feature called “Ask YouTube.”

The tool allows searchers to ask YouTube a specific question and receive an AI-generated summary with cited videos and follow-up questions. The source videos are embedded in the responses, with links that open to timestamped sections.

The tool is currently available to US Premium subscribers who are 18 years of age or older and accessing Google on a desktop from now until June 8th.

14. OpenAI Crawl Activity Has Tripled

Since the August 2025 launch of GPT-5, OpenAI crawl activity has tripled.

This means OAI-SearchBot is now generating more traffic than OpenAI’s training crawler, GPTBot. This matters for digital marketers because it means that ChatGPT is increasingly pulling live web content to answer user queries, making your site's crawlability and AI visibility more critical than ever.

And here's the kicker: if you've only blocked GPTBot, thinking you're opting out of OpenAI's reach, you're still fully exposed to OAI-SearchBot, the bot that actually determines whether your content shows up in ChatGPT search answers.
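To see why blocking GPTBot alone leaves the search crawler untouched, here is a minimal sketch using Python's standard `urllib.robotparser`. The robots.txt content, the `check_access` helper, and the example.com URL are all hypothetical and only illustrate the idea; your own robots.txt will look different.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt that blocks only OpenAI's training crawler.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /
"""


def check_access(robots_txt, agents, url="https://example.com/blog/post"):
    """Return {agent: True/False} for whether each agent may fetch the URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


access = check_access(ROBOTS_TXT, ["GPTBot", "OAI-SearchBot"])
for agent, allowed in access.items():
    print(f"{agent}: {'allowed' if allowed else 'blocked'}")
```

Under these rules GPTBot is blocked while OAI-SearchBot is still allowed, because a robots.txt rule only applies to the user agent it names. Whether you want to block either bot is a separate business decision.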

If you’re measuring bot crawls, separate AI training crawls from AI search crawls to get the most accurate data.
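One rough way to split the two crawl types is to look for the bot name in each request's user-agent string. The sketch below assumes you can already pull user-agent strings out of your server logs; the sample strings, the `CRAWLER_PURPOSE` mapping, and the function names are illustrative, not an official format.

```python
from collections import Counter

# Bot-name tokens mapped to the purpose described in this article.
CRAWLER_PURPOSE = {
    "GPTBot": "training",
    "OAI-SearchBot": "search",
}


def classify_user_agent(user_agent):
    """Label a user-agent string as 'training', 'search', or 'other'."""
    lowered = user_agent.lower()
    for token, purpose in CRAWLER_PURPOSE.items():
        if token.lower() in lowered:
            return purpose
    return "other"


def count_crawls(user_agents):
    """Count requests per crawl purpose."""
    return Counter(classify_user_agent(ua) for ua in user_agents)


# Illustrative user-agent strings, not copied from real logs.
sample = [
    "Mozilla/5.0 (compatible; GPTBot/1.0)",
    "Mozilla/5.0 (compatible; OAI-SearchBot/1.0)",
    "Mozilla/5.0 (compatible; OAI-SearchBot/1.0)",
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64)",
]
print(count_crawls(sample))
```

User-agent strings can be spoofed, so treat counts like these as a starting point rather than verified bot traffic.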

15. Pinterest Forms CTV Partnership

Pinterest is jumping into the connected TV game. This week, Pinterest announced that advertisers can now expand their campaigns to consumers’ home TVs through a CTV partnership with tvScientific.

In the press release, Pinterest explained that, “tvScientific by Pinterest is the first ad platform to offer direct access to Pinterest’s high-intent audiences for CTV campaigns. Advertisers can use Pinterest’s commercial intent signals to reach audiences across premium inventory on the biggest screen in the house, while also leveraging tvScientific’s AI-powered optimization technology.”

CTV ad spend reached over $32 billion last year, and the channel is quickly becoming a major player in the digital marketing space. Making it more accessible to more brands through partnerships like this could open many doors, especially for small- to mid-sized businesses that may have been priced out of this opportunity in the past.

Weekly Homework

- Explore how to connect your WooCommerce store to your YouTube channel to create shoppable product cards that let consumers shop your site directly from your YouTube videos.

- Download Seer Interactive’s report on Google’s AIOs to see how you can improve your chances of benefiting from appearing in AIOs.

- Check out Pinterest’s new partnership with tvScientific to see if a CTV campaign might be in the cards for your brand soon.

- Add keywords to your TikTok metadata to see if it helps you increase your reach.

- Keep an eye on AI Sponsored Snaps. If this new ad type takes off, it could be a very lucrative way to interact with your consumers in a more personal way.

Digital Marketing News 4/18/2026 to 4/24/2026

This week: ChatGPT launches CPC ads and Ads Manager, AI search referrals surge with AEO impact, Google upgrades Demand Gen with view-through conversions.

Here's what happened this week in digital marketing:

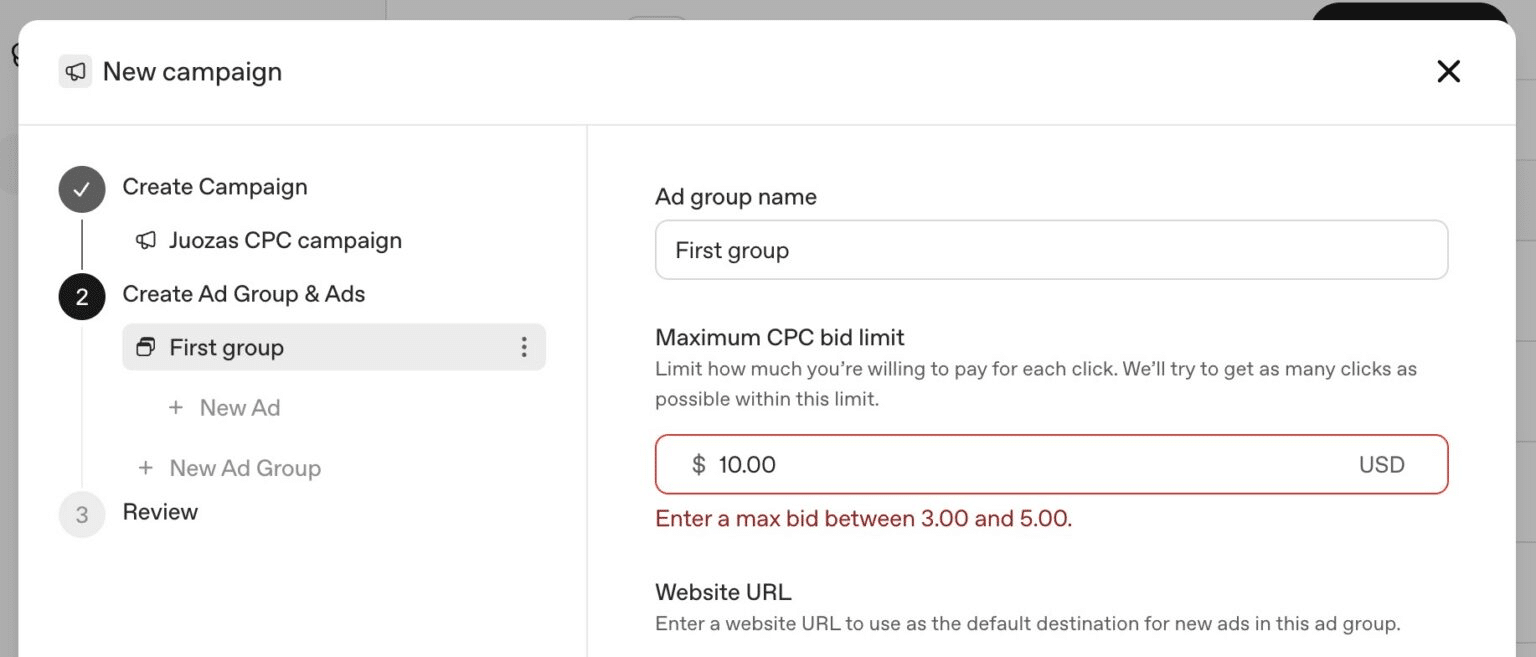

1. CPC Bidding Comes to ChatGPT

Advertisers on ChatGPT can now bid per click, not just per impression.

In ChatGPT’s initial advertising pilot, advertisers paid a flat rate per 1,000 impressions served – regardless of whether anyone clicked. Now, some advertisers are being offered the opportunity to bid per click, with prices that seem to range from $3 to $5 per click.

Important to Know: This is only available to a select group of advertisers, but with OpenAI hiring its first advertising marketing science leader soon, we can expect ChatGPT Ads to continue to expand.

2. ChatGPT Tests New Ads Manager, Signaling Push Toward Scalable Ad Platform

OpenAI appears to be taking a major step forward in its advertising capabilities, with early tests of a new ChatGPT Ads Manager interface now surfacing among marketers.

The new dashboard is designed to give advertisers real-time control over campaigns, allowing them to launch, monitor, and optimize performance more efficiently. This marks a significant upgrade from the platform’s earlier, more limited reporting setup, which relied heavily on basic data exports.

At the same time, more brands are beginning to appear within ChatGPT, indicating a growing ad inventory and a clear move toward monetization at scale. The introduction of a dedicated Ads Manager suggests OpenAI is building toward a more mature, self-serve ecosystem similar to platforms like Google Ads and Meta.

While still in early stages, the evolution points to upcoming improvements in targeting, reporting, and automation, areas that will be critical for broader advertiser adoption.

Key Takeaway: ChatGPT is quickly evolving into a more robust advertising platform. Marketers should start paying attention now as self-serve tools and ad capabilities continue to expand.

3. Study Reveals AI-crawled Sites Generate 320% More Human Traffic

A new study by Duda provides a clear view of how AI crawler activity is growing and what businesses need to do to get noticed.

The study analyzed over 858,000 sites to understand exactly how AI crawlers operate. They found these referral patterns:

- Total LLM referrals: 93,484 to 161,469 (+72.7%)

- ChatGPT: 81,652 to 136,095 (+66.7%)

- Claude: 106 to 2,488 (23x growth)

- Copilot: 22 to 9,560 (from near-zero)

- Perplexity: 11,533 to 13,157 (+14.1%)
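If you want to run the same math on your own referral data, the growth figures above come down to simple percentage change. Here is a small sketch that recomputes them from the before and after counts in the list; the function names and the `REFERRALS` structure are just for illustration.

```python
def percent_growth(before, after):
    """Percentage change from before to after, rounded to one decimal."""
    return round((after - before) / before * 100, 1)


def growth_multiple(before, after):
    """How many times larger the after value is than the before value."""
    return after / before


# Before and after referral counts as reported in the study.
REFERRALS = {
    "Total LLM referrals": (93_484, 161_469),
    "ChatGPT": (81_652, 136_095),
    "Perplexity": (11_533, 13_157),
}

for source, (before, after) in REFERRALS.items():
    print(f"{source}: +{percent_growth(before, after)}%")

# Very small starting numbers read better as a multiple than a percentage.
print(f"Claude: {growth_multiple(106, 2_488):.1f}x")
```

For sources that started near zero, like Copilot, a percentage is not very meaningful, which is likely why the study reports it as growth "from near-zero."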

Additionally, the study revealed that crawling is no longer just about indexing; most of it now happens in response to real-time user queries. Here is the breakdown:

- User Fetch (real-time answers): 56.9% of all crawler activity, driven almost entirely by ChatGPT

- Training (model learning): 28.8%, split across GPTBot and other model crawlers

- Discovery (content indexing): 14.3%, distributed across multiple systems

- ChatGPT User Fetch volume: ~39.8 million visits

You can read the entire report here.

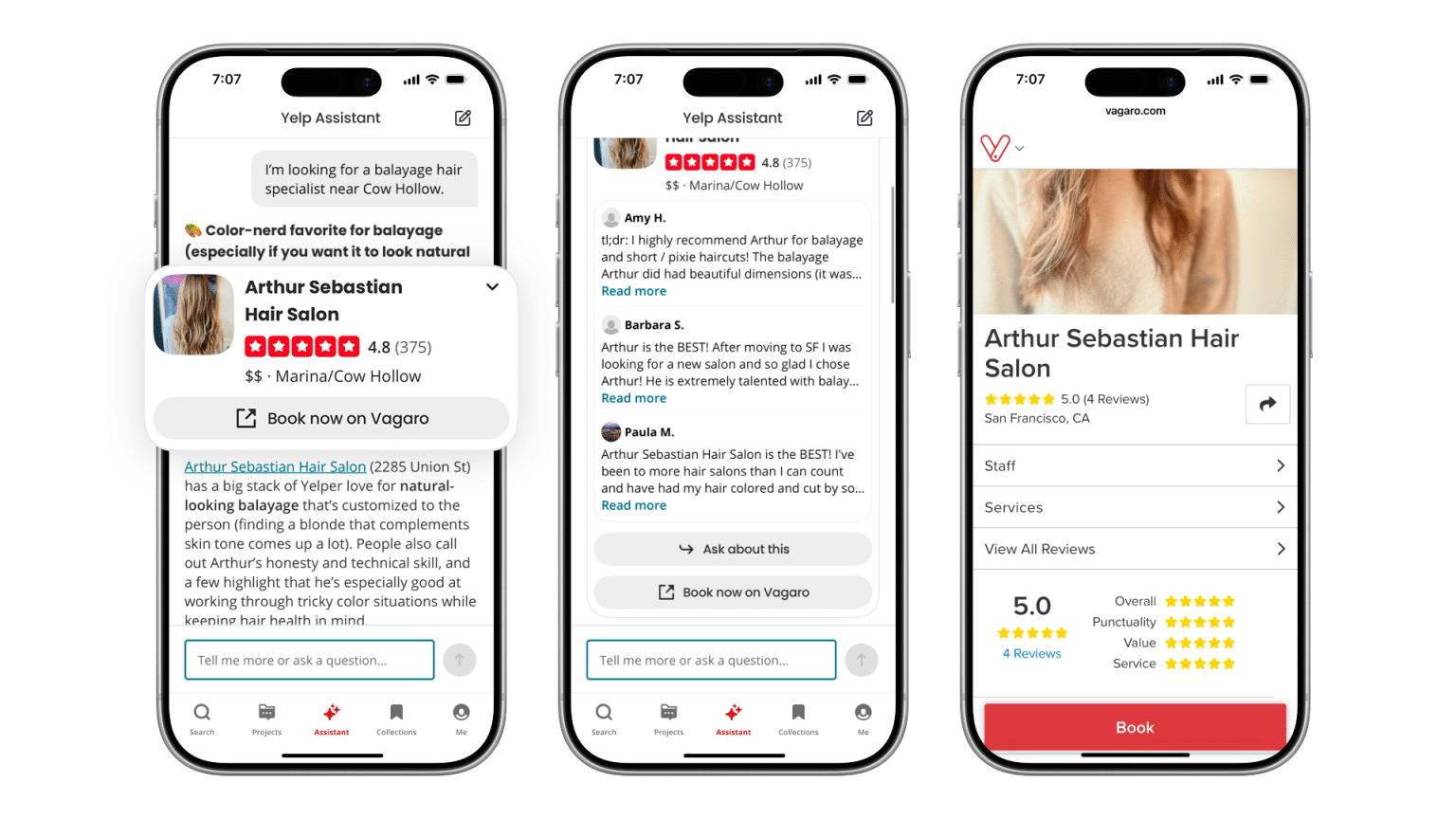

4. Yelp Introduces New AI Assistant

Yelp is a big player in the local SEO game – and it just got better.

In a conversation with TechCrunch, Yelp’s SVP of product, Akhil Kuduvalli Ramesh, said: “We would really like consumers to reconceive Yelp as a place where they can ask questions and get answers, not just that, but also complete the action. That’s Yelp reconceiving from a review platform to an answers and action platform. Some of the investments we’re making will be in that lane.”

The goal of this update and the others coming this year is to make the app more user-friendly. This new AI upgrade will help searchers:

- Ask questions

- Get restaurant reservations

- Order food for delivery

- Book service professionals

Yelp has always been a big deal in local SEO, but with these updates, more and more consumers will be turning to the app for recommendations on all kinds of things. If your business doesn’t have an updated Yelp page, you risk losing out on that traffic.

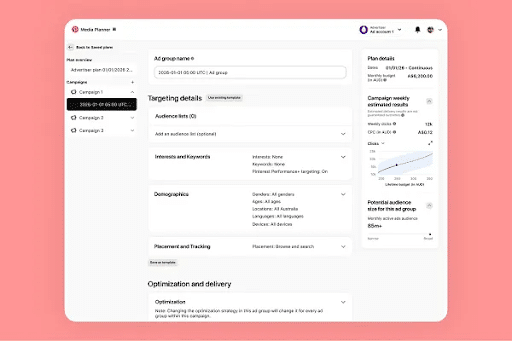

5. Google Upgrades Demand Gen to Accelerate YouTube Conversions

Google is expanding its Demand Gen capabilities with new features designed to drive faster conversions, especially across YouTube’s growing performance ecosystem.

The update integrates Demand Gen into Google’s Commerce Media Suite, allowing advertisers to leverage retailer first-party data, including product catalogs and conversion signals. This enables more precise targeting of high-intent shoppers across YouTube, Discover, and Gmail.

In addition, Google is introducing view-through conversion optimization, which prioritizes conversions that happen after a user sees an ad, not just clicks it. This shift reflects a broader move toward measuring impact in more passive, discovery-driven environments where users may not take immediate action.

Key Takeaway: As platforms like YouTube evolve into full-funnel channels, marketers should start optimizing beyond clicks, leveraging first-party data and view-based attribution to capture high-intent audiences more effectively.

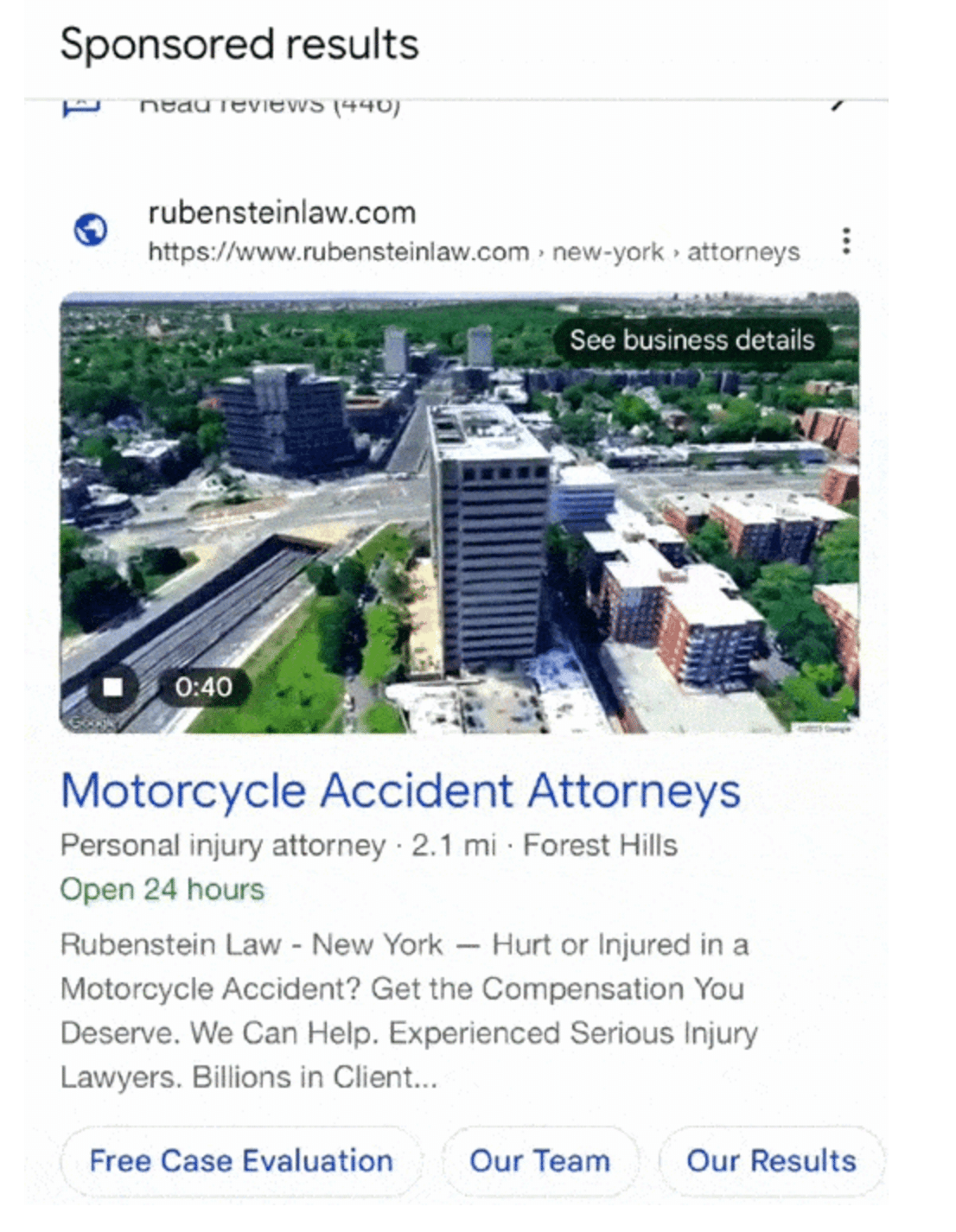

6. Video Content is Coming to Google Local Ads

The video content trend is expanding to Google Local Ads.

Video ads have been spotted within the local map pack. Instead of sticking to static listings or text-based ads, some advertisers are being given the opportunity to add more engaging video content.

Still in beta testing, the feature is only available to select advertisers in certain locations. You can check if you’re eligible by signing into your Google Ads dashboard and navigating to the Google Ads Location Manager.

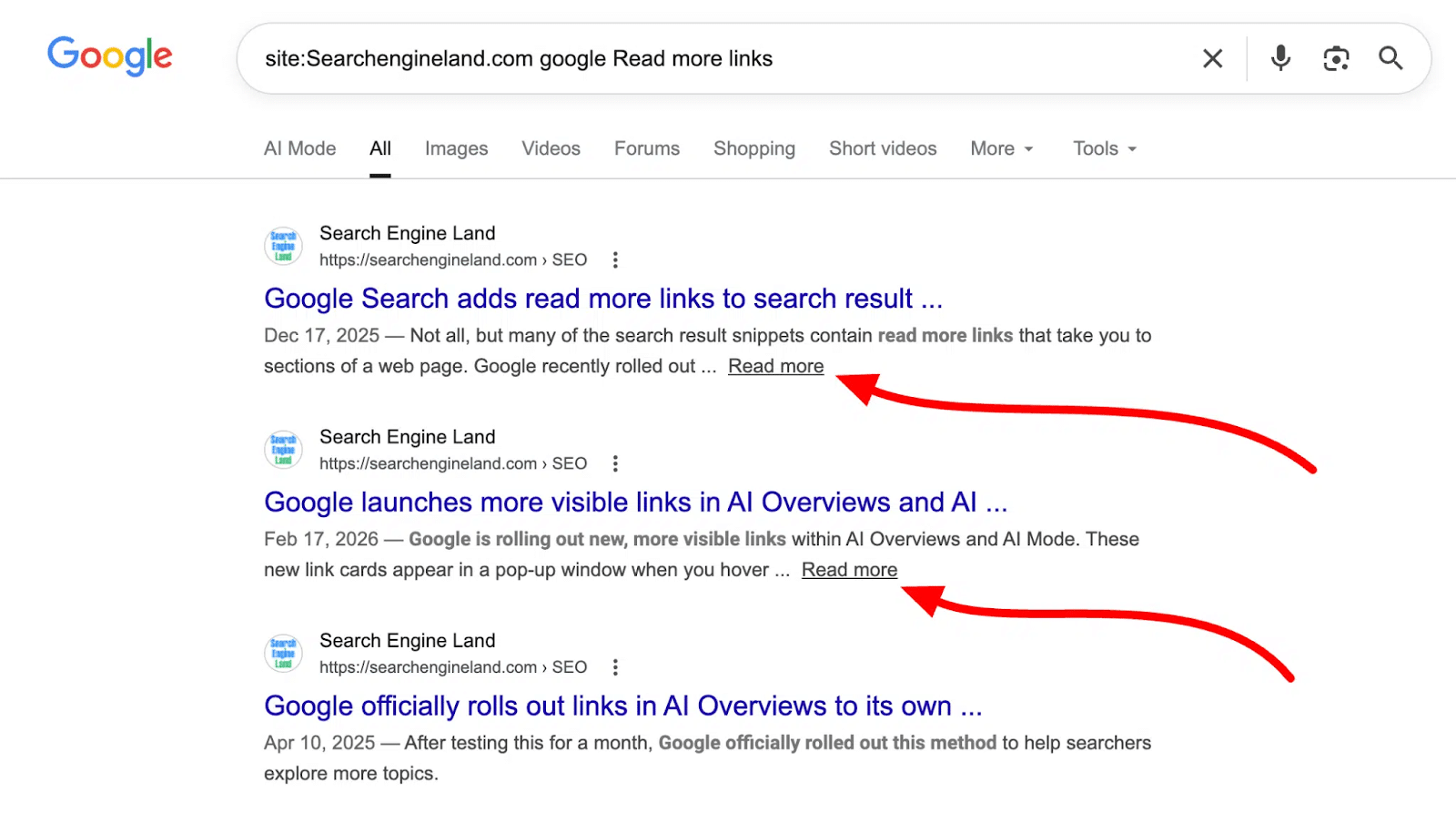

7. Google Publishes Best Practices for Read More Links

Google started adding “Read More” links in search result snippets within Google Search. Now, advertisers and digital marketers have some guidance on how to process that information.

Google published the best practices on its blog, noting three main ideas:

- Make your content immediately visible on the page, not hidden behind an expandable section or interface.

- Avoid using JavaScript to control the scroll position on the page.

- Don’t remove the hash fragment from the URL if you have history API calls or window.location.hash modifications on page load.

Why We Care: The “Read More” button is another opportunity to draw more eyes to your content. Make sure you follow the best practices to set yourself up for success.

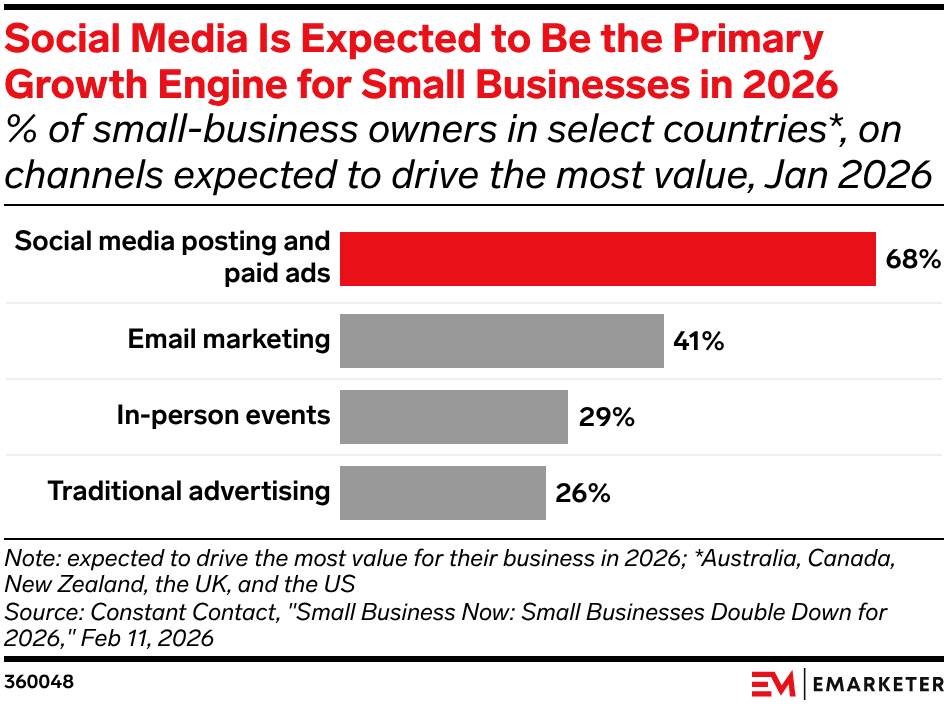

8. Social & Video Growing More Quickly than Search Ads

A new study by PwC revealed that, while search ads are still king, they aren’t growing as quickly as social media and video ads.

The report revealed that:

- Digital advertising revenue reached $294 billion, a 13% increase from last year

- Search saw an 11% increase

- Social media saw a 32% increase to $29 billion

- Digital video grew to $78 billion, a 25% increase